If AI is writing for your brand, you already know the upside: speed, scale, fresh ideas. I pay equal attention to the quieter risk: when a model invents facts, it chips away at trust and can poison your pipeline with low-quality leads. The fix is not magic. I use a practical control stack that narrows what the model can say, feeds it the right evidence, checks its work, and assigns ownership for what goes live. Scope note: this playbook focuses on factual reliability; you still need complementary controls for safety, privacy, and bias.

AI hallucination mitigation strategies

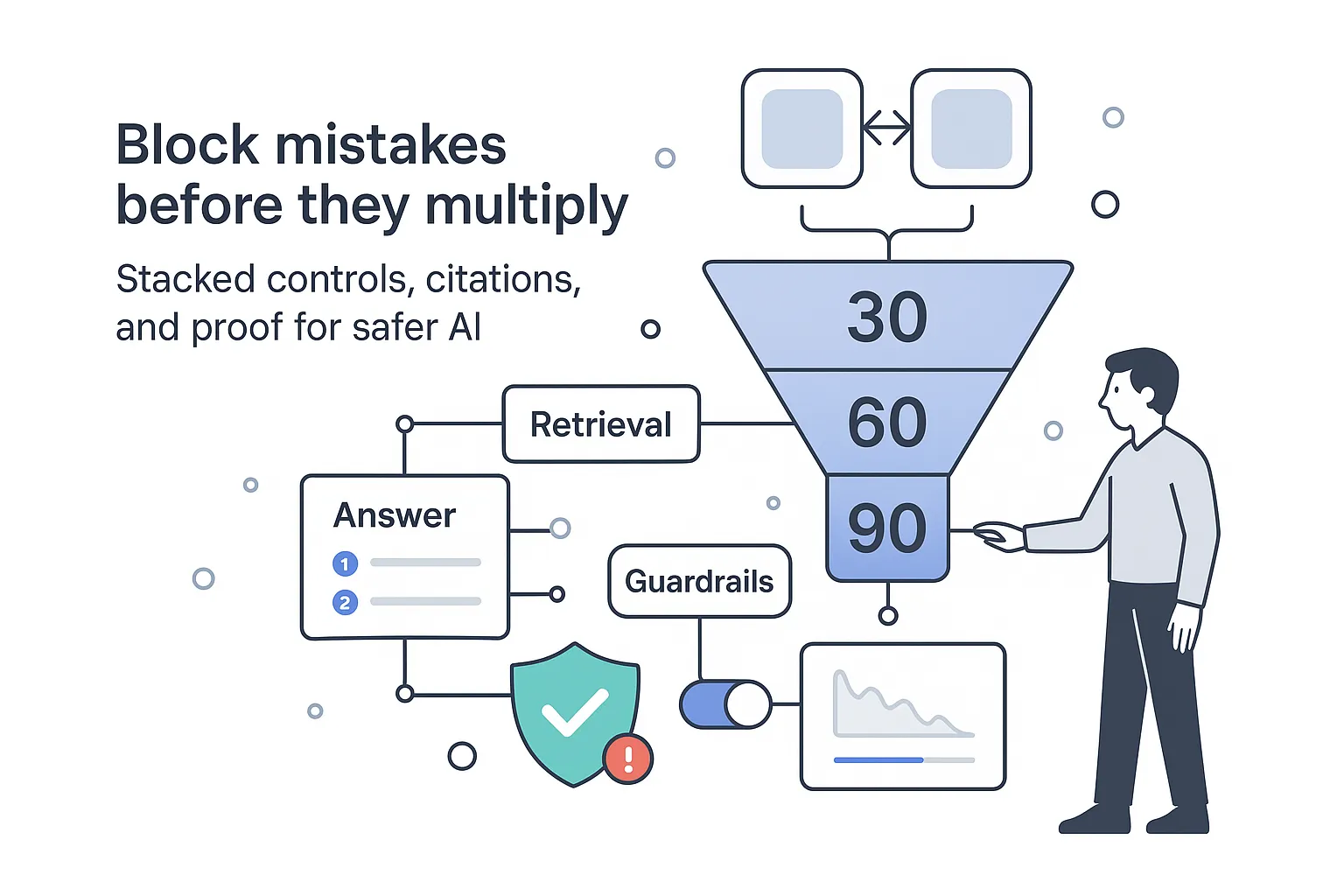

Here is a quick executive summary for busy B2B leaders. In the LLM context, hallucinations are confident but fabricated claims. They damage brand credibility, inflate rework time, and create compliance exposure. The fastest path to risk reduction in production is to pair guardrails with proof: constrain scope, ground answers in your sources, run factuality checks for AI-generated text, and add content accuracy safeguards for LLMs at publish time. In my experience, a layered approach delivers measurable improvements within 30 to 90 days, depending on baseline and domain. The targets below are illustrative starting points; calibrate them to your risk profile and workload.

30/60/90-day rollout

- 30 days: Publish standards and guardrails. Define risk tiers and refusal rules. Turn down temperature. Require citations for mid- and high-risk content. Stand up a simple verification queue with clear sign-offs and logging. Directional aim: a material drop in obvious errors (often 30-40% from baseline) with minimal engineering.

- 60 days: Add retrieval to ground answers in your own docs. Track retrieval hit rate and citation coverage. Introduce automated factual checks for numbers and names. Set initial mid-tier targets of at most 1 unsupported claim per 1,000 words and at least 90% sentence coverage with valid sources.

- 90 days: Layer in second-model checks and human sampling on high-risk work. Tie metrics to business outcomes like rework hours recovered, fewer client escalations, and faster publish velocity. Hold high-risk pieces to zero unsupported claims and full citation coverage at publish.

Cost and risk tradeoffs

- Quick wins like safer prompts and lower temperature are inexpensive and cut errors fast, but they do not fix missing context.

- Retrieval and grounding reduce risk the most, especially for up-to-date facts, but need some data engineering and governance.

- Verifiers and human review raise quality and auditability, yet add runtime and staffing cost. Use risk tiers so you pay the extra cost only where it matters.

KPIs to watch from day one

- Hallucination rate per 1,000 words

- Citation coverage per piece and per sentence

- Retrieval hit rate and answerability rate

- Issue MTTR and rework hours per piece

- Publish velocity and escalation count

Risk management for AI content production

I treat AI content like any other production system and set a lightweight governance model that fits the level of risk. Where relevant, I align safeguards with recognized frameworks (for example, NIST AI RMF 1.0 and ISO/IEC 42001) so policies are defensible.

Risk tiers and minimum safeguards

- Low risk: Internal drafts, brainstorming notes, internal Slack messages.
  - Safeguards: Safer prompts, low temperature, no external publishing, label as draft.
  - Ownership: Requester owns content, no formal review.

- Medium risk: Blog posts, SEO pages, sales one-pagers.
  - Safeguards: Retrieval turned on, per-claim citations, automated factual checks, single editor sign-off.
  - Ownership: Content lead approves. Acceptance thresholds are strict but allow a small error budget.

- High risk: Regulated topics, pricing sheets, client deliverables, legal or medical-adjacent content.
  - Safeguards: Retrieval, sentence-level grounding, dual-model verification, human-in-the-loop validation for AI content, final legal or SME approval, full audit log.
  - Ownership: SME or compliance approves. Zero tolerance for unsupported claims.

Acceptance thresholds and escalation

- Mid-tier acceptance: At most 1 unsupported claim per 1,000 words, at least 90% sentence coverage by citations, no broken links.

- High-tier acceptance: 100% citation coverage for factual statements, zero unsupported claims, numeric checks pass.

- Escalation path: If automated checks fail, route to human review. If the reviewer cannot find support, route to SME or legal. Log failure reasons and fixes for monthly QA.
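The acceptance thresholds and the first escalation step can be expressed as a small routing function. This is a minimal Python sketch, assuming each piece arrives with counts from earlier checks. The `CheckResult` fields and tier keys are hypothetical. Applying the link and numeric checks to both tiers is a simplifying assumption. Anything that fails goes to a human reviewer, who decides whether to escalate to an SME or legal.

```python
from dataclasses import dataclass

# Thresholds from the acceptance criteria above (hypothetical tier keys).
THRESHOLDS = {
    "mid": {"max_unsupported_per_1k": 1.0, "min_coverage": 0.90},
    "high": {"max_unsupported_per_1k": 0.0, "min_coverage": 1.00},
}


@dataclass
class CheckResult:
    tier: str                 # "mid" or "high"
    word_count: int
    unsupported_claims: int   # factual claims with no supporting source
    coverage: float           # share of factual sentences with a valid citation, 0..1
    broken_links: int
    numeric_checks_pass: bool


def route(result: CheckResult) -> str:
    """Return 'accept' if every threshold holds, else 'human_review'."""
    t = THRESHOLDS[result.tier]
    per_1k = result.unsupported_claims * 1000 / max(result.word_count, 1)
    passes = (
        per_1k <= t["max_unsupported_per_1k"]
        and result.coverage >= t["min_coverage"]
        and result.broken_links == 0
        and result.numeric_checks_pass
    )
    return "accept" if passes else "human_review"


# 1 unsupported claim in 1,200 words is about 0.83 per 1,000 words.
print(route(CheckResult("mid", 1200, 1, 0.93, 0, True)))   # accept
print(route(CheckResult("high", 800, 1, 1.00, 0, True)))   # human_review
```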

Simple RACI and audit logging

- Requester: opens job and supplies sources.

- Builder: runs generation and initial checks.

- Reviewer: signs off on mid-tier work.

- Approver: SME or legal for high-tier work.

- Audit: store prompts, model version, data snapshot, citations, and check results for each publish.
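For the audit line, one option is an append-only JSON Lines file with one record per publish. The sketch below assumes that storage choice. `AuditRecord` and its field names are hypothetical. It also records the RACI roles, so each publish shows who asked for it and who approved it.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One record per publish (hypothetical schema)."""
    job_id: str
    tier: str
    requester: str
    approver: str
    model_version: str
    data_snapshot: str      # e.g. an index build ID or commit hash
    system_prompt: str
    user_prompt: str
    citations: list = field(default_factory=list)
    check_results: dict = field(default_factory=dict)
    published_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        """Serialize to one JSON line with a SHA-256 hash of the record body."""
        payload = asdict(self)
        body = json.dumps(payload, sort_keys=True)
        payload["sha256"] = hashlib.sha256(body.encode("utf-8")).hexdigest()
        return json.dumps(payload, sort_keys=True)


rec = AuditRecord(
    job_id="JOB-001", tier="mid", requester="alice", approver="content-lead",
    model_version="example-model-v1", data_snapshot="index-build-42",
    system_prompt="(system prompt text)", user_prompt="(user prompt text)",
    citations=[{"doc_id": "CS-104", "start": 120, "end": 240}],
    check_results={"coverage": 0.93, "broken_links": 0},
)
print(rec.to_json_line())
```

At publish time, append each line plus a newline to the log file. If you keep a separate copy of each hash, you can later detect edits to a stored record.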

KPIs and targets

- Hallucination rate: Unsupported factual claims per 1,000 words
  - Target mid-tier: 1 or fewer
  - Target high-tier: 0

- Citation coverage: Percent of sentences with a valid supporting source
  - Target mid-tier: 90% or higher
  - Target high-tier: 100%

- Retrieval hit rate: Queries that return at least one relevant passage
  - Target mid-tier: 85% or higher
  - Target high-tier: 95% or higher

- Issue MTTR: Mean time to resolve flagged issues
  - Target mid-tier: under 24 hours
  - Target high-tier: under 12 hours
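Once the underlying counts are logged, the KPI definitions above reduce to simple arithmetic. This is a minimal sketch. It assumes you already capture three things: per-sentence support flags, per-query counts of relevant passages, and resolution times. The function names and the `TARGETS` table are illustrative.

```python
from statistics import mean


def hallucination_rate(unsupported_claims: int, word_count: int) -> float:
    """Unsupported factual claims per 1,000 words."""
    return unsupported_claims * 1000 / word_count if word_count else 0.0


def citation_coverage(has_valid_source: list[bool]) -> float:
    """Share of sentences that carry a valid supporting source."""
    return sum(has_valid_source) / len(has_valid_source) if has_valid_source else 0.0


def retrieval_hit_rate(relevant_per_query: list[int]) -> float:
    """Share of queries that returned at least one relevant passage."""
    if not relevant_per_query:
        return 0.0
    return sum(n > 0 for n in relevant_per_query) / len(relevant_per_query)


def mttr_hours(resolution_hours: list[float]) -> float:
    """Mean time to resolve flagged issues."""
    return mean(resolution_hours) if resolution_hours else 0.0


# Targets from the list above; MTTR targets are "under N hours".
TARGETS = {
    "mid": {"halluc": 1.0, "coverage": 0.90, "hit_rate": 0.85, "mttr": 24.0},
    "high": {"halluc": 0.0, "coverage": 1.00, "hit_rate": 0.95, "mttr": 12.0},
}


def missed_targets(tier: str, halluc: float, coverage: float,
                   hit_rate: float, mttr: float) -> list[str]:
    """Names of the KPIs that miss the tier's target."""
    t = TARGETS[tier]
    missed = []
    if halluc > t["halluc"]:
        missed.append("hallucination_rate")
    if coverage < t["coverage"]:
        missed.append("citation_coverage")
    if hit_rate < t["hit_rate"]:
        missed.append("retrieval_hit_rate")
    if mttr >= t["mttr"]:
        missed.append("issue_mttr")
    return missed


rate = hallucination_rate(unsupported_claims=2, word_count=2400)
print(missed_targets("mid", rate, citation_coverage([True] * 19 + [False]),
                     retrieval_hit_rate([2, 0, 3, 1]), mttr_hours([6.0, 30.0])))
# ['retrieval_hit_rate']
```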

Strategy 1: reduce hallucinations in generative AI content

I start with quick wins that cut errors before touching the data stack.

- Constrain the domain and answer scope. Tell the model exactly what topics and timeframes are allowed.

- Lower temperature and top-p for factual tasks. This trims creativity that drifts into fiction.

- Set refusal rules. If information is not in the provided sources, the model must reply with "Not available."

- Limit output to schemas. Use JSON or a strict outline with specific fields to keep the model inside the rails.

- Require unknown handling. Make it easy and acceptable for the model to say "I do not know."
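You can enforce most of these quick wins outside the model as well as in the prompt. The sketch below assumes the model is asked to return JSON with hypothetical `topic`, `answer`, and `sources` fields. The temperature and top-p values and the topic list are placeholders. Exact parameter names depend on your provider.

```python
import json

# Placeholder sampling settings for factual tasks; parameter names and
# sensible values depend on your model provider.
GENERATION_SETTINGS = {"temperature": 0.2, "top_p": 0.5}

ALLOWED_TOPICS = {"analytics onboarding", "reporting"}  # hypothetical scope
REFUSAL = "Not available"
REQUIRED_FIELDS = {"topic": str, "answer": str, "sources": list}


def validate_output(raw: str) -> dict:
    """Enforce schema, scope, and refusal rules on a JSON model reply.

    Raises ValueError on any violation (json.JSONDecodeError is a subclass),
    so the caller can retry, refuse, or escalate.
    """
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    for name, expected in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected):
            raise ValueError(f"missing or mistyped field: {name}")
    if data["topic"] not in ALLOWED_TOPICS:
        raise ValueError(f"out-of-scope topic: {data['topic']}")
    # With no sources, the only acceptable answer is the refusal string.
    if not data["sources"] and data["answer"].strip().rstrip(".") != REFUSAL:
        raise ValueError("unsourced answer must be 'Not available'")
    return data
```

Keeping the validator outside the model means a drifting prompt cannot quietly loosen the rules.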

Before and after example

- Before: A service page FAQ claims, "Our clients see a 37 percent reduction in acquisition costs in 60 days," with no source. That number is made up. It sounds great, and it sinks trust.

- After: With constraints, retrieval, and refusal rules in place, the answer reads, "In the last three case studies, acquisition costs fell by 12 to 18 percent over two quarters. See case study IDs CS-104, CS-117, and CS-132." If no verified data is found, it shows "Not available."

I make factuality checks for AI-generated text a repeated step, not a one-off cleanup. When the system highlights every number and shows its source, or flags that it has none, editors can spot a fake figure in seconds. Review time shrinks and publish confidence rises.

Strategy 2: prompt engineering to prevent hallucinations

Prompts are policy. I set them up like a contract the model must obey.

Reusable template

- Role: You are a careful B2B analyst who uses only the provided sources for facts.

- Task: Produce a 600-word answer to the user’s question, focused on [industry] buyers.

- Audience: CEOs and founders at service firms with $50k to $150k monthly revenue.

- Constraints: No invented claims. If a fact is not in the sources, write "Not available." Prefer plain language.

- Source-citation requirement: Cite a source after each factual sentence using [doc_id:page or URL and span].

- Refusal clause: If the question cannot be answered from sources, state the gap and ask for more context.

- Style guide: Short sentences, clear structure, no fluff, no hype words.

- Clarifying questions: Ask up to three clarifying questions before writing if the request is ambiguous.
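Rendering the template from code, rather than retyping it, keeps it consistent across jobs. This is a minimal sketch using Python's `string.Template`. The placeholder names (`industry`, `audience`, `word_count`) are assumptions. The audience string passed in would come from the job ticket.

```python
from string import Template

# One rendering of the template above; placeholder names are assumptions.
SYSTEM_TEMPLATE = Template(
    "Role: You are a careful B2B analyst who uses only the provided sources for facts.\n"
    "Task: Produce a $word_count-word answer to the user's question, focused on $industry buyers.\n"
    "Audience: $audience\n"
    "Constraints: No invented claims. If a fact is not in the sources, "
    "write \"Not available.\" Prefer plain language.\n"
    "Citations: Cite a source after each factual sentence using "
    "[doc_id:page or URL and span].\n"
    "Refusal: If the question cannot be answered from the sources, "
    "state the gap and ask for more context.\n"
    "Style: Short sentences, clear structure, no fluff, no hype words.\n"
    "Clarify: If the request is ambiguous, ask up to three clarifying "
    "questions before writing."
)


def build_system_prompt(industry: str, audience: str, word_count: int = 600) -> str:
    # substitute() raises KeyError if any placeholder is left unfilled,
    # so a half-configured template never reaches the model.
    return SYSTEM_TEMPLATE.substitute(
        industry=industry, audience=audience, word_count=word_count
    )


print(build_system_prompt(
    "logistics",
    "CEOs and founders at service firms with $50k to $150k monthly revenue",
))
```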

Few-shot examples

- Gold example
  - Q: What ROI can a client expect from our analytics onboarding in quarter one?
  - A: Based on [CS-117:pp3-5], average onboarding cut reporting time by 28 percent within 90 days. [CS-132:pp2-4] shows a 14 percent improvement in lead response times. If a client lacks a data lake, the range shrinks to 10 to 15 percent. Not available for clients without baseline time logs.

- Negative example
  - A: Clients usually double revenue in a month. Source: internal knowledge.
  - Why it fails: It violates the constraints, invents a claim, and cites no verifiable source.
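A cheap automated screen can catch answers shaped like the negative example. This sketch assumes citation IDs look like `[CS-117:pp3-5]` or `[CS-104]`. It treats "contains a digit" as a rough proxy for a factual claim. As a result, it will miss some unsupported claims and does not replace the verifiers later in the workflow.

```python
import re

# Bracketed citations such as [CS-117:pp3-5] or [CS-104] (assumed ID format).
CITATION = re.compile(r"\[[A-Z]+-\d+(?::[^\]]+)?\]")
# Naive sentence split on ., !, or ? followed by whitespace.
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")
REFUSAL = "Not available"


def uncited_numeric_sentences(answer: str) -> list[str]:
    """Sentences that contain a number but no bracketed citation.
    Refusal sentences are exempt."""
    flagged = []
    for sentence in SENTENCE_END.split(answer.strip()):
        if sentence.startswith(REFUSAL):
            continue
        if re.search(r"\d", sentence) and not CITATION.search(sentence):
            flagged.append(sentence)
    return flagged


def check_answer(answer: str) -> list[str]:
    """List of issues; an empty list means the answer passed this screen."""
    text = answer.strip()
    issues = []
    if not text.startswith(REFUSAL) and not CITATION.search(text):
        issues.append("no bracketed citations")
    issues.extend(f"uncited number: {s}" for s in uncited_numeric_sentences(text))
    return issues


print(check_answer("Clients usually double revenue in a month. Source: internal knowledge."))
# ['no bracketed citations']
```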

Operational notes

- Separate system vs user prompts. Pin non-negotiables in the system message so they cannot be overridden by a user request.

- Keep instructions at the top of every turn. Models forget. Your guardrails should not.

- Refresh few-shot examples with current, verified cases so the model learns the right shape of answers.
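The operational notes shape how each request is assembled. This minimal sketch assumes a chat API that accepts a list of `{"role", "content"}` messages. That shape is common, but check your provider's format. The function rebuilds the list on every turn, so the system prompt always comes first. It also drops any system-role entries that arrive through the conversation history.

```python
def build_messages(system_prompt: str,
                   few_shot: list[tuple[str, str]],
                   history: list[dict],
                   user_turn: str) -> list[dict]:
    """Assemble one chat request with the guardrails pinned in the system role.

    `few_shot` holds (question, answer) pairs from current, verified cases.
    """
    messages = [{"role": "system", "content": system_prompt}]
    for question, answer in few_shot:
        messages.append({"role": "user", "content": question})
        messages.append({"role": "assistant", "content": answer})
    # Only our own system message is allowed; drop any smuggled in via history.
    messages.extend(m for m in history if m.get("role") != "system")
    messages.append({"role": "user", "content": user_turn})
    return messages
```

Calling this on every turn, instead of appending to a long-lived list, is what keeps the instructions at the top.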

Strategy 3: retrieval augmented generation for reliability

Ground the model in your own knowledge base so it stops guessing.

Applied pipeline

- Prepare content. Remove duplicates and outdated files. Chunk documents semantically into passages of 200 to 400 tokens. Keep titles and breadcrumbs for context.

- Embed and index. Use a high-quality embedding model. Build separate indexes per client or domain to avoid cross-contamination.

- Retrieve top-k. Pull the most relevant chunks. Start with k between 4 and 10, then tune.

- Rerank results. Apply a cross-encoder or similar reranker to push the best evidence to the top.

- Generate with citations. Pass the retrieved passages and your strict prompt to the generator. Require per-sentence citations.

- Answerability gating. If retrieval confidence or coverage is low, do not answer. Ask for more info or route to human review.
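The pipeline steps can be sketched end to end without external services. In this minimal Python version, word counts stand in for token counts, and a bag-of-words cosine score stands in for embedding similarity. Treat it as the shape of the pipeline, not a retrieval implementation. The reranking step is only noted in a docstring, and the `min_score` gate value is an arbitrary placeholder.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_tokens: int = 300) -> list[str]:
    """Split text into passages of about max_tokens words.
    A real pipeline would split on headings and paragraphs and count
    tokens with the model's tokenizer."""
    words = text.split()
    return [" ".join(words[i:i + max_tokens]) for i in range(0, len(words), max_tokens)]


def _vec(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: list[str], k: int = 4) -> list[tuple[float, str]]:
    """Top-k (score, passage) pairs. A reranker would reorder this list
    before it reaches the generator."""
    q = _vec(query)
    scored = [(_cosine(q, _vec(p)), p) for p in passages]
    return sorted(scored, key=lambda sp: sp[0], reverse=True)[:k]


def answerable(hits: list[tuple[float, str]], min_score: float = 0.2) -> bool:
    """Gate: answer only if the best passage clears a similarity floor."""
    return bool(hits) and hits[0][0] >= min_score


kb = [
    "Case study CS-117: onboarding cut reporting time by 28 percent.",
    "Office holiday schedule for December.",
]
hits = retrieve("How much did onboarding cut reporting time?", kb)
if answerable(hits):
    context = [p for score, p in hits if score > 0]  # pass to the generator
else:
    context = None  # ask for more info or route to human review
```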

Freshness and access

- Add recency filters so new docs win when dates matter.

- Respect access control lists so private client data stays private.

- Deduplicate near-identical passages so repeated text does not crowd out other evidence or get overweighted in the answer.
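Access control, recency, and deduplication can run as one filter over retrieved passages before they reach the generator. This sketch assumes each passage carries an update date and a set of permitted groups (both hypothetical fields). It uses a hard date cutoff. When you want new docs to win rather than old ones to vanish, boosting newer documents in the ranking is an alternative.

```python
from dataclasses import dataclass
from datetime import date
from difflib import SequenceMatcher
from typing import Optional


@dataclass
class Passage:
    doc_id: str
    text: str
    updated: date
    allowed_groups: frozenset  # groups permitted to read this passage


def filter_passages(passages: list[Passage], user_groups: set[str],
                    newer_than: Optional[date] = None,
                    dup_ratio: float = 0.95) -> list[Passage]:
    """Drop passages the user may not see, stale ones, and near-duplicates.

    Input is assumed to be in relevance order, so the first copy of a
    near-duplicate is the one kept.
    """
    kept: list[Passage] = []
    for p in passages:
        if not p.allowed_groups & user_groups:
            continue  # ACL: no shared group
        if newer_than is not None and p.updated < newer_than:
            continue  # recency cutoff
        if any(SequenceMatcher(None, p.text, k.text).ratio() >= dup_ratio
               for k in kept):
            continue  # near-identical to a passage already kept
        kept.append(p)
    return kept
```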

Retrieval KPIs

- Source recall and precision at k. You want the right passages in the set the model sees.

- Coverage. Percent of answer sentences with a supporting passage attached.

- Answerability. Percent of queries that pass your gating rules.
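Precision and recall at k need a small labeled set: for each test query, the IDs of the passages a human judged relevant. This is a minimal sketch under that assumption. Here precision divides by k even when fewer passages come back, which is one common convention.

```python
def precision_recall_at_k(retrieved: list[str], relevant: set[str],
                          k: int) -> tuple[float, float]:
    """Precision@k: share of the top k that is relevant.
    Recall@k: share of all relevant passages found in the top k."""
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    precision = hits / k if k > 0 else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


def answerability_rate(passed_gate: list[bool]) -> float:
    """Share of queries that passed the answerability gate."""
    return sum(passed_gate) / len(passed_gate) if passed_gate else 0.0


# One labeled query: the reviewer marked a, c, and e as relevant.
print(precision_recall_at_k(["a", "b", "c", "d"], {"a", "c", "e"}, k=4))
```

For a report, average the per-query values across the whole labeled set.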

Strategy 4: grounding AI outputs with sources

Make grounding a rule, not a wish. Every claim gets a source, and every source can be traced.

Citation mechanics

- Per-claim citations at sentence level. Readers should see which sentence ties to which passage.

- Structured citation fields. Store document ID, URL or file path, and span offsets or quoted snippet.

- Automated link checks. Validate that each URL returns an HTTP 200 status. Flag 404s and block publish until they are fixed.

- Similarity threshold. Compare the quote or paraphrase to the source passage and set a minimum similarity score before publish.
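The structured fields and the similarity threshold can be combined into one check per citation. This sketch uses character offsets as the span and `difflib.SequenceMatcher` as a crude lexical similarity. Both the `Citation` fields and the 0.6 floor are assumptions. A semantic or entailment model would judge paraphrases better. HTTP link checks are left out to keep the example offline.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class Citation:
    doc_id: str
    location: str   # URL or file path
    start: int      # character offsets of the supporting span
    end: int


def citation_supports(claim: str, source_text: str, cite: Citation,
                      min_ratio: float = 0.6) -> bool:
    """True if the cited span exists and is lexically close to the claim."""
    if not 0 <= cite.start < cite.end <= len(source_text):
        return False  # offsets fall outside the source: treat as broken
    span = source_text[cite.start:cite.end]
    ratio = SequenceMatcher(None, claim.lower(), span.lower()).ratio()
    return ratio >= min_ratio
```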

Coverage goals

- Mid-tier content: Target at least 90% of sentences grounded, excluding opinions and transitions.

- High-tier content: Require full coverage for all factual sentences. No exceptions.

Style guide for display

- Blogs: Show inline bracketed citations like [CS-104] with a reference list at the end.

- Reports: Add footnotes with doc title, date, and a short quote so reviewers can spot-check fast.

- Web pages: Use hover tooltips for quick previews of sources without leaving the page.
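For the blog style, you can generate the reference list from the inline IDs so it never drifts from the text. This sketch assumes a `catalog` dict that maps each document ID to a display string. If an ID has no catalog entry, the function raises an error instead of rendering a dangling citation.

```python
import re

# Captures the ID from [CS-117:pp3-5] or [CS-104] (assumed ID format).
CITE = re.compile(r"\[([A-Z]+-\d+)(?::[^\]]+)?\]")


def render_blog(body: str, catalog: dict[str, str]) -> str:
    """Append a reference list in order of first citation.
    Unknown IDs raise KeyError so a dangling citation blocks publish."""
    seen: list[str] = []
    for doc_id in CITE.findall(body):
        if doc_id not in seen:
            seen.append(doc_id)
    missing = [d for d in seen if d not in catalog]
    if missing:
        raise KeyError(f"no catalog entry for: {', '.join(missing)}")
    if not seen:
        return body
    refs = "\n".join(f"[{d}] {catalog[d]}" for d in seen)
    return f"{body}\n\nReferences\n{refs}"
```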

This is the policy language I rely on for content accuracy safeguards for LLMs. It is clear, checkable, and easy to audit.

Strategy 5: LLM output verification workflows

I act like an editor with superpowers: I build checks for what machines do well, then keep humans in the loop where judgment matters.

Layered verifier

Programmatic checks

- Schema validation. Ensure fields are present and within length limits.

- Numeric recompute. Recalculate percentages and totals from cited numbers.

- Date and range rules. Catch dates outside allowed windows or improbable ranges.

- Entity patterns. Use regex to check names, emails, stock tickers, and phone numbers.
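The programmatic checks are plain code. This minimal sketch covers three of them. The percentage tolerance, date window, and regex patterns are illustrative. The ticker pattern assumes US-style symbols, and the phone pattern assumes E.164 format. Name checks are left out of this sketch.

```python
import re
from datetime import date


def percent_change_matches(before: float, after: float, claimed_pct: float,
                           tol: float = 0.5) -> bool:
    """Recompute a percent change from cited numbers and compare to the claim.
    A drop from 100 to 72 is -28; tol is in percentage points."""
    if before == 0:
        return False
    actual = (after - before) / before * 100
    return abs(actual - claimed_pct) <= tol


def date_in_window(d: date, earliest: date, latest: date) -> bool:
    """Reject dates outside the allowed window."""
    return earliest <= d <= latest


# Format-only patterns; illustrative, not exhaustive validators.
PATTERNS = {
    "email": re.compile(r"[^@\s]+@[^@\s]+\.[A-Za-z]{2,}"),
    "ticker": re.compile(r"[A-Z]{1,5}"),
    "phone_e164": re.compile(r"\+[1-9]\d{6,14}"),
}


def entity_ok(kind: str, value: str) -> bool:
    return PATTERNS[kind].fullmatch(value) is not None
```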

Model-based checks

- Contradiction and consistency. A second model flags claims that conflict with the retrieved evidence or with earlier statements in the same piece.

- Retrieval back-check. For each claim, find support in the source set. If missing, downgrade confidence or block publish.

- Self-consistency and cross-model agreement. Sample multiple generations and require agreement within a margin. If models disagree, send to manual review.
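Once the samples exist, you can check self-consistency without another model call. This sketch assumes you have already generated several answers to the same question, for example at a nonzero temperature or from different models. It compares the first percentage figure in each answer. The 2-point margin and 80% agreement floor are placeholders.

```python
import re
from statistics import median
from typing import Optional

FIGURE = re.compile(r"(\d+(?:\.\d+)?)\s*(?:%|percent)")


def first_percent(text: str) -> Optional[float]:
    """Return the first 'N%' or 'N percent' figure in an answer, if any."""
    m = FIGURE.search(text)
    return float(m.group(1)) if m else None


def consensus(samples: list[str], margin: float = 2.0,
              min_agree: float = 0.8) -> Optional[float]:
    """Median figure if enough samples land within `margin` points of it.

    Samples with no figure count as disagreement. None means: send to
    manual review.
    """
    values = [v for v in map(first_percent, samples) if v is not None]
    if not values:
        return None
    mid = median(values)
    agreeing = sum(abs(v - mid) <= margin for v in values)
    return mid if agreeing / len(samples) >= min_agree else None
```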

Human-in-the-loop validation for AI content

- Sampling plan. Review every high-risk piece and a 10 to 20 percent sample of mid-tier content.

- Triage thresholds. Auto-publish only if schema, numeric, and citation checks pass and sentence coverage meets targets. Otherwise hold for review.

- Logging. Record failure reasons like math mismatch, missing citation, outdated source, or scope violation. Use this for monthly tuning.
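The sampling plan and triage thresholds fit in one routing function. This minimal sketch assumes upstream checks report failures as the reason codes listed above. The 15% default sits inside the 10 to 20 percent range. The module-level `Counter` stands in for whatever log store feeds the monthly tuning.

```python
import random
from collections import Counter
from typing import Optional

# Failure reasons named in the logging step; extend as new modes appear.
REASONS = {"math_mismatch", "missing_citation", "outdated_source", "scope_violation"}
failure_log: Counter = Counter()  # stand-in for a persistent log store


def triage(tier: str, failed: list[str], coverage: float,
           min_coverage: float = 0.90, sample_rate: float = 0.15,
           rng: Optional[random.Random] = None) -> str:
    """Return 'publish' or 'review' for a mid- or high-tier piece.

    `failed` holds reason codes from the automated checks (empty if all passed).
    """
    if tier not in ("mid", "high"):
        raise ValueError("only mid- and high-tier content is published")
    for reason in failed:
        if reason not in REASONS:
            raise ValueError(f"unknown reason code: {reason}")
        failure_log[reason] += 1
    if tier == "high" or failed or coverage < min_coverage:
        return "review"
    # Clean mid-tier pieces are still spot-checked at the sampling rate.
    rng = rng or random.Random()
    return "review" if rng.random() < sample_rate else "publish"
```

Passing a seeded `random.Random` makes the spot-check selection reproducible for audits.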

Operational rhythm

- Weekly review of logs to spot recurring failure modes and tweak prompts or retrieval.

- Monthly quality report to leadership showing hallucination rate, coverage, MTTR, and impact on publish velocity.

- Quarterly refresh of sources and few-shot examples.

Conclusion

Here is the operating model I use to keep quality high without slowing you down. Govern by risk tier so you do not treat a blog post and a client deliverable the same. Ground answers with retrieval so the model stops guessing. Constrain the model with strong prompts and strict schemas so it stays on the rails. Verify with automated checks first, then layer in humans where the stakes are high. Measure with clear KPIs that map to time saved and fewer escalations.

A simple phased rollout works well

- Week 1: Publish policies, templates, and refusal rules. Add safer defaults for temperature and top-p. Set up logging.

- Weeks 2 to 3: Stand up retrieval on your top content sets. Require sentence-level citations on mid- and high-risk work. Track retrieval hit rate and coverage.

- Weeks 4 to 6: Add programmatic and model-based verifiers. Define triage thresholds for auto-publish. Bring SMEs into the high-risk review queue with clear ownership.

- Ongoing: Sample content each week. Tune prompts and chunking. Retire stale sources. Hold a monthly quality standup with a short report.

Tie metrics to business impact

- Reduced rework time. Fewer editing cycles per piece and faster time to publish.

- Fewer client escalations. Lower escalation rate and faster resolution when issues do arise.

- Better pipeline quality. Less noisy inbound from pages with clear, accurate claims.

You do not need a moonshot to cut risk. You need simple, enforceable controls that work together. Start small, measure, and build the habits that keep your brand credible at scale.
