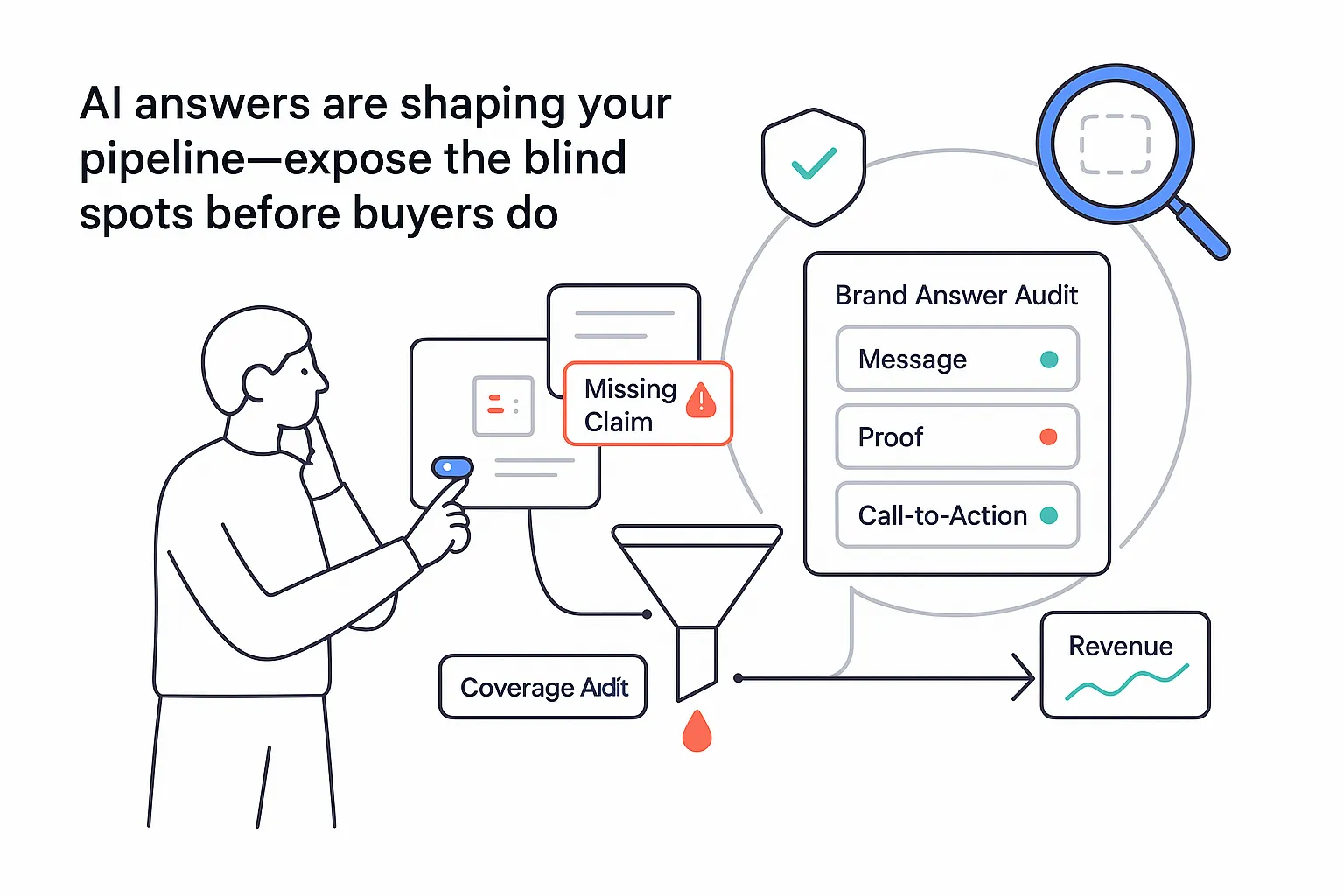

If prospects ask an AI about your company, I want the answer they see to be right, timely, and helpful - not almost right, not a guess. CEOs and marketing leads keep telling me the same thing: they do not need more dashboards; they need one source of truth that shows where the brand shows up in AI answers, what was said, and whether it helped or hurt. That is the heartbeat of modern visibility: get the facts fast, link them to revenue, and cut the noise.

Monitor brand mentions in AI answers | Recommendations:

I start by picturing a single view that pulls your brand’s presence from ChatGPT, Gemini, Perplexity, Claude, Mistral, DeepSeek, and Google AI Overviews. In that view, I can see which questions trigger mentions, how you are cited, and whether the context helps your pipeline. I also see the misses - the queries where you should appear but do not. With that setup, you can monitor brand mentions without chasing screenshots or secondhand stories from sales calls.

To make the view useful at a glance, I stack a simple KPI bar across the top. Keep it boring on purpose, because boring is readable.

- Share of voice in AI answers by model and topic (percent of sampled answers that include your brand)

- Context mix by sentiment: positive, neutral, negative (use a calibrated scoring method and periodic human review)

- Misinformation rate with a plain-language reason code (e.g., outdated pricing, wrong attribution, incorrect capability)

- Time to detection and time to correction (median hours)

- Top queries driving mentions and the pages cited
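To make the KPI definitions concrete, here is a minimal sketch of how I might compute three of them from a batch of sampled answers. The `SampledAnswer` fields, the model labels, and the sample rows are all illustrative assumptions, not a real schema or real data.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class SampledAnswer:
    model: str                               # illustrative label, e.g. "chatgpt"
    topic: str
    mentions_brand: bool
    misinformation: bool = False
    hours_to_detect: Optional[float] = None  # set only when an issue was found


def share_of_voice(answers, model=None, topic=None):
    """Percent of sampled answers that include the brand, optionally filtered."""
    rows = [a for a in answers
            if (model is None or a.model == model)
            and (topic is None or a.topic == topic)]
    if not rows:
        return 0.0
    return 100.0 * sum(a.mentions_brand for a in rows) / len(rows)


def misinformation_rate(answers):
    """Percent of brand-mentioning answers that were flagged as wrong."""
    mentioned = [a for a in answers if a.mentions_brand]
    if not mentioned:
        return 0.0
    return 100.0 * sum(a.misinformation for a in mentioned) / len(mentioned)


def median_hours_to_detect(answers):
    """Median time to detection across answers that had an issue."""
    hours = [a.hours_to_detect for a in answers if a.hours_to_detect is not None]
    return median(hours) if hours else None


# Made-up sample rows for illustration only.
sample = [
    SampledAnswer("chatgpt", "pricing", True, misinformation=True, hours_to_detect=6.0),
    SampledAnswer("chatgpt", "pricing", False),
    SampledAnswer("gemini", "pricing", True),
    SampledAnswer("gemini", "how-to", True),
]

print(share_of_voice(sample))                   # all models and topics
print(share_of_voice(sample, model="chatgpt"))  # one model
```

I use the median rather than the mean for detection time on purpose: one incident that sat unnoticed for a week should not hide the fact that most issues are caught within hours.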

A quick practical start looks like:

- Import brand names, common synonyms, product lines, known misspellings, and a short competitor set. Add allow and deny lists if you already have them in policy docs.

- Pick target AI platforms and regions. Include both logged-in and logged-out states where possible. If local intent matters, pair this with local rank tracking.

- Set alert thresholds for misinformation, sentiment shifts, and competitor overtakes, and document the definitions so reviewers score issues the same way.
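Documented thresholds are easier to keep consistent when they live in one small, versionable object. The sketch below is one way to express that; the field names and default numbers are hypothetical placeholders that each team would set for itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertThresholds:
    # All defaults are hypothetical; tune them to your own baseline.
    max_misinformation_rate: float = 5.0  # percent of brand mentions
    max_negative_shift: float = 10.0      # percentage-point rise in negative share
    min_sov_lead: float = 0.0             # brand share of voice minus competitor's


def breached(t, misinformation_rate, negative_prev, negative_now,
             brand_sov, competitor_sov):
    """Return the list of threshold names that the current numbers break."""
    reasons = []
    if misinformation_rate > t.max_misinformation_rate:
        reasons.append("misinformation")
    if negative_now - negative_prev > t.max_negative_shift:
        reasons.append("sentiment_shift")
    if brand_sov - competitor_sov < t.min_sov_lead:
        reasons.append("competitor_overtake")
    return reasons


print(breached(AlertThresholds(), 7.5, 12.0, 25.0, 30.0, 34.0))
```

Because the dataclass is frozen, a threshold change has to be a deliberate new object rather than a quiet edit, which fits the goal of reviewers scoring issues the same way.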

Yes, it looks like many other dashboards at first glance. The difference is what you do next. I feed the insights to content, PR, and RevOps, then connect them to meetings booked and deals created. That is where visibility becomes ROI, not just a report. If you also report to clients, consider white-label SEO dashboards so the story stays clear and consistent.

Detect brand names in chatbot responses | Recommendations:

Getting detection right starts with the stack. I use named entity recognition for brand mentions to find explicit references, then add fuzzy matching to catch misspellings and casual phrasing. I canonicalize every hit back to a single brand record so that a variant spelling and a typo roll up to the same entity. I pair that with allow and deny lists for tricky terms and partner brands.
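Here is a small sketch of the fuzzy matching and canonicalization steps using only the Python standard library. It is not a named entity recognizer; it swaps in a plain n-gram scan with `difflib.SequenceMatcher` so the idea stays runnable. The brand "Acme Analytics", its variants, the deny phrase, and the 0.85 threshold are all made up for illustration.

```python
import re
from difflib import SequenceMatcher

# Hypothetical brand record: canonical name -> known variants and misspellings.
ALIASES = {
    "Acme Analytics": ["acme analytics", "acme", "acmee analytics"],
}
# Hypothetical look-alike phrase that must never count as a mention.
DENY = {"acme corp"}


def _ngrams(tokens, n):
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def find_mentions(text, threshold=0.85):
    """Return {canonical_name: best_similarity} for fuzzy hits in the text."""
    lowered = text.lower()
    # Remove deny-listed phrases first so their words cannot match a variant.
    for phrase in DENY:
        lowered = re.sub(r"\b" + re.escape(phrase) + r"\b", " ", lowered)
    tokens = re.findall(r"[a-z0-9]+", lowered)

    hits = {}
    for canonical, variants in ALIASES.items():
        for variant in variants:
            # Compare each variant against n-grams of the same word count.
            for gram in _ngrams(tokens, len(variant.split())):
                score = SequenceMatcher(None, gram, variant).ratio()
                if score >= threshold:
                    hits[canonical] = max(score, hits.get(canonical, 0.0))
    return hits


print(find_mentions("I would compare Akme Analitics with other tools"))
print(find_mentions("Acme Corp makes anvils"))
```

Every variant maps back to the one canonical key, so downstream metrics count a single brand no matter how the answer spelled it.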

Now layer platform nuance. ChatGPT and Gemini can show different answers based on login state, geo, device, and prompt history. Perplexity and Claude are sensitive to phrasing and citation windows. Mistral and DeepSeek may rotate sources more often. I plan test prompts for each engine and lock down variables. I rotate prompts on a schedule to reduce bias from repetition, but keep a control group so I can compare week to week.

Capture full context for every run:

- Full answer text

- Citations and outbound links

- Screenshot for legal and creative review

- Timestamp, region, device type, and whether the user was logged in

- Model version or release label if available

- Confidence score from your detector, not the model
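A capture record like the one listed above might be modeled as a single dataclass so every run stores the same fields. This is a sketch under my own naming assumptions; none of the field names come from a specific tool.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


def _utc_now():
    return datetime.now(timezone.utc).isoformat()


@dataclass
class AnswerCapture:
    platform: str                            # e.g. "perplexity"
    prompt: str
    answer_text: str                         # full answer text
    citations: List[str] = field(default_factory=list)
    screenshot_path: Optional[str] = None    # for legal and creative review
    captured_at: str = field(default_factory=_utc_now)
    region: str = "unknown"
    device_type: str = "unknown"
    logged_in: bool = False
    model_version: Optional[str] = None      # only when the platform exposes it
    detector_confidence: Optional[float] = None  # from your detector, not the model

    def to_json(self):
        """Serialize with sorted keys so stored records diff cleanly."""
        return json.dumps(asdict(self), sort_keys=True)


capture = AnswerCapture(
    platform="perplexity",
    prompt="best crm for startups",
    answer_text="(full answer text goes here)",
    citations=["https://example.com/pricing"],
    logged_in=True,
    detector_confidence=0.92,
)
print(capture.to_json())
```

Leaving `model_version` as `None` when it is unavailable is more honest than guessing a label, and it keeps later filtering simple.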

Disambiguation matters. If your brand is also a common noun, I build term-plus-context rules. For example, require co-occurrence with a product, a founder name, or a city. I score those rules, then only escalate high-confidence hits. This reduces noise, which reduces team fatigue, which keeps the system in use. I also spot-check false positives and false negatives on a labeled sample so the detector improves over time.
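A term-plus-context rule can be as simple as weighted cue words plus an escalation cutoff. The sketch below assumes a hypothetical brand called "Summit" with made-up cues, weights, and cutoff, and it includes a small precision and recall check against a hand-labeled sample.

```python
import re

# Hypothetical cues for a brand named "Summit": product, founder, city.
CONTEXT_WEIGHTS = {"summit crm": 0.6, "jane doe": 0.5, "denver": 0.3}
ESCALATE_AT = 0.6  # hypothetical cutoff for a high-confidence hit


def context_score(text):
    """Score 0..1 for how likely 'summit' refers to the brand in this text."""
    lowered = text.lower()
    if not re.search(r"\bsummit\b", lowered):
        return 0.0
    score = sum(weight for cue, weight in CONTEXT_WEIGHTS.items()
                if re.search(r"\b" + re.escape(cue) + r"\b", lowered))
    return min(score, 1.0)


def precision_recall(labeled):
    """labeled: list of (text, is_brand) pairs reviewed by a human."""
    tp = fp = fn = 0
    for text, is_brand in labeled:
        predicted = context_score(text) >= ESCALATE_AT
        if predicted and is_brand:
            tp += 1
        elif predicted and not is_brand:
            fp += 1
        elif not predicted and is_brand:
            fn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


labeled_sample = [
    ("Summit CRM pricing changed", True),
    ("The Denver summit was cold", False),
    ("Summit raised a round", True),  # brand mention with no cue: a miss
]
print(precision_recall(labeled_sample))
```

The third row is the kind of false negative a spot-check surfaces: a real mention with no supporting cue, which suggests adding a cue such as "raised a round" or lowering the cutoff.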

I consolidate all platforms in one workflow. You can still name-check ChatGPT, Gemini, Perplexity, DeepSeek, Mistral, Claude, and Google AI Overviews in reporting, but run a single detection pipeline so your metrics match across models.

Real-time alerts for brand mentions in AI | Recommendations:

Speed matters when a wrong claim starts to spread. I set real-time alerts for brand mentions in AI with clear categories that map to action.

Alert types that actually help:

- First-seen mention on a tracked query

- Sentiment flip from positive or neutral to negative

- Misinformation detected with reason code and example lines

- Competitor overshadowing where your brand drops below theirs in the same answer

- New citation source that sent traffic or got repeated by another model

Ship alerts through Slack, Microsoft Teams, email, and webhooks. Add deduplication so the same event does not ping five channels. Respect quiet hours for global teams. Use severity levels so executives get daily digests while operators get immediate pings for high-risk items. One click should create a Jira ticket or PagerDuty incident when PR, legal, or compliance needs to be looped in. Track time to acknowledgment and time to resolution so you can tune the thresholds and routing.
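The routing rules in the paragraph above could be sketched as a small in-memory router. The channel names ("page", "notify", "digest"), the six-hour dedup window, and the choice to let high-severity alerts bypass quiet hours are my assumptions; actual delivery to Slack, Teams, email, or webhooks is left out on purpose.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Alert:
    kind: str        # e.g. "misinformation", "first_seen"
    query: str
    platform: str
    severity: str    # "high", "medium", or "low"
    seen_at: datetime


class AlertRouter:
    def __init__(self, dedup_window=timedelta(hours=6), quiet_start=22, quiet_end=7):
        self.dedup_window = dedup_window
        self.quiet_start = quiet_start
        self.quiet_end = quiet_end
        self._last_sent = {}  # (kind, query, platform) -> time last delivered

    def in_quiet_hours(self, local_hour):
        if self.quiet_start <= self.quiet_end:
            return self.quiet_start <= local_hour < self.quiet_end
        # Window wraps past midnight, e.g. 22:00 to 07:00.
        return local_hour >= self.quiet_start or local_hour < self.quiet_end

    def route(self, alert, local_hour):
        """Return 'suppressed', 'page', 'notify', or 'digest'."""
        key = (alert.kind, alert.query, alert.platform)
        last = self._last_sent.get(key)
        if last is not None and alert.seen_at - last < self.dedup_window:
            return "suppressed"
        self._last_sent[key] = alert.seen_at
        if alert.severity == "high":
            return "page"      # assumption: high risk pings even in quiet hours
        if self.in_quiet_hours(local_hour):
            return "digest"    # hold everything else for the daily digest
        return "notify" if alert.severity == "medium" else "digest"


router = AlertRouter()
t0 = datetime(2025, 1, 6, 9, 0)
first = Alert("misinformation", "acme pricing", "chatgpt", "high", t0)
print(router.route(first, local_hour=9))
```

Deduplication keys on kind, query, and platform, so the same wrong claim on two different platforms still produces two alerts while a repeat on the same platform stays quiet.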

This is not about micromanagement. It is about fewer surprises, addressed faster, with less thrash.

Generative AI brand monitoring | Recommendations:

I treat this as always-on measurement across prompts, personas, and moments. Prospects ask many variations: best service provider near me, top agencies for B2B, which company fixes this specific problem. Your system should sample both head terms and long questions, then chart how your brand appears over time. To expand your input list, conduct keyword research.

Show trendlines across models, topics, geos, and time. Break out share of voice versus your core competitors. List the top queries producing mentions and the pages being cited. Include a confidence interval, because model volatility is real. When updates roll out, trendlines wobble. That is not failure. That is signal. Note seasonality and release cycles so you can separate model churn from market shifts. If you also track classic rankings, compare these trends against your broader footprint, such as your search visibility score and core rank tracker metrics.
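For the confidence interval on share of voice, one reasonable choice is the Wilson score interval for a proportion, which behaves well with small samples. The text does not prescribe a method, so treat this as one option rather than the required one.

```python
from math import sqrt


def wilson_interval(hits, n, z=1.96):
    """Wilson score interval for a proportion; z=1.96 gives roughly 95 percent."""
    if n == 0:
        return (0.0, 1.0)  # no samples, so no information
    p = hits / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return (max(0.0, center - half), min(1.0, center + half))


# Example: brand appeared in 30 of 100 sampled answers this week.
low, high = wilson_interval(30, 100)
print(f"share of voice 30% (95% CI {low:.1%} to {high:.1%})")
```

If this week's interval overlaps heavily with last week's, I would read the movement as model churn until more samples say otherwise.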

Tie the loop to revenue. Track referral clicks from AI citations, even if they are indirect. Attribute assisted influence when a prospect viewed an answer and later visited your site. Send privacy-safe events to your CRM so marketing and sales leaders see the line from AI visibility to pipeline. Expect the attribution to be directional; the goal is decision support, not courtroom proof. If Google is a priority channel, review tactics for ranking in Google AI Overviews.

Track brand references in LLM outputs | Recommendations:

Group your monitored questions into clusters that mirror intent: how-to, comparison, best-of, pricing, troubleshooting, and executive summaries. For each cluster, measure hit rate, the rank position of your mention in multi-answer responses, and citation quality. A link to a stale page is not the same as a quote from your latest report. Score that difference.
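The per-cluster measures could be rolled up like this. The input shape is an assumption: each run records its intent cluster, the 1-based position of the brand in the answer (or `None` if absent), and whether the cited page was fresh (or `None` if nothing was cited).

```python
from collections import defaultdict


def cluster_metrics(results):
    """Hit rate, mean mention position, and fresh-citation rate per cluster."""
    grouped = defaultdict(list)
    for run in results:
        grouped[run["cluster"]].append(run)

    report = {}
    for cluster, rows in grouped.items():
        hits = [r for r in rows if r["brand_position"] is not None]
        cited = [r for r in hits if r["citation_fresh"] is not None]
        report[cluster] = {
            "hit_rate": len(hits) / len(rows),
            "mean_position": (sum(r["brand_position"] for r in hits) / len(hits)
                              if hits else None),
            "fresh_citation_rate": (sum(r["citation_fresh"] for r in cited) / len(cited)
                                    if cited else None),
        }
    return report


# Made-up runs for illustration.
runs = [
    {"cluster": "comparison", "brand_position": 1, "citation_fresh": True},
    {"cluster": "comparison", "brand_position": 3, "citation_fresh": False},
    {"cluster": "comparison", "brand_position": None, "citation_fresh": None},
    {"cluster": "pricing", "brand_position": None, "citation_fresh": None},
]
print(cluster_metrics(runs))
```

Keeping citation freshness as its own rate is how I score the difference between a link to a stale page and a quote from the latest report.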

Segment coverage depth by category. For how-to queries, track whether your product pages or docs get cited. For comparison queries, check if you appear near the top and whether the text is balanced or skewed. For pricing, scan for outdated numbers and missing legal language. For best-of lists, monitor whether your brand appears and how often others edge you out.

Set a testing cadence that matches your business rhythm. Daily checks for high-volume queries, weekly for niche segments, monthly for thought leadership. Rotate prompt templates to avoid overfitting to a single phrasing. Keep a seed set stable for trend tracking and a rotating set for discovery. This keeps data honest while you experiment.
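One way to keep a stable seed set alongside a rotating discovery set is to seed the random draw with the week label, so the rotation changes weekly but any given week is reproducible. The function name, the week label format, and the prompts are illustrative.

```python
import random


def prompts_for_week(seed_set, discovery_pool, iso_week, rotate_n=2):
    """Return the fixed seed prompts plus a reproducible weekly rotation."""
    rng = random.Random(iso_week)  # same week label -> same rotating picks
    rotating = rng.sample(discovery_pool, min(rotate_n, len(discovery_pool)))
    return list(seed_set) + rotating


seed_prompts = ["acme pricing", "acme vs globex"]  # stable, for trendlines
pool = [
    "best crm for startups",
    "top b2b agencies",
    "crm near me",
    "fix duplicate leads",
]
print(prompts_for_week(seed_prompts, pool, "2025-W14"))
```

Because the seed prompts never change, week-over-week comparisons stay valid even while the discovery prompts move around.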

Brand safety for AI-generated content | Recommendations:

AI can get creative in ways that make legal teams sweat. Risks include harmful associations, outdated claims, wrong pricing, unauthorized partnerships, and compliance breaches. One bad answer can spread quickly, especially during product launches or conference weeks when search interest spikes. That is why brand safety for AI-generated content needs its own policy library and workflow.

I start with a policy library that maps each rule to a market or industry. Examples help:

- No claims of guaranteed outcomes in regulated categories

- No mention of partnerships unless the legal team has an approved list

- Pricing must match the current pricing page within a small tolerance

- Any medical or financial advice must carry the required disclaimer for that market

- Sensitive topics flagged for review by PR before publication

Use AI content moderation for brand references as the hands-on layer that applies these rules. Run checks before generation where you control the prompt, and after generation when scanning live answers. If a rule is triggered, take a policy action: block unsafe content, rewrite the sentence, add a disclaimer, or escalate to a reviewer. Always keep an audit trail that ties the action to the rule. A human-in-the-loop step on high-risk items keeps the process defensible.
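To show how a rule maps to a policy action and an audit-friendly finding, here is a minimal sketch covering three of the example rules. The price, tolerance, partner list, and regex patterns are hypothetical and deliberately naive (for instance, the price pattern does not handle thousands separators).

```python
import re

# All values below are hypothetical placeholders for illustration.
CURRENT_PRICE = 49.00           # price on the live pricing page
PRICE_TOLERANCE = 0.02          # allow 2 percent drift
APPROVED_PARTNERS = {"northwind"}


def check_answer(text):
    """Return a list of findings, each tying evidence to a rule and an action."""
    findings = []

    # Rule: pricing must match the current pricing page within tolerance.
    for m in re.finditer(r"\$(\d+(?:\.\d{1,2})?)", text):
        quoted = float(m.group(1))
        if abs(quoted - CURRENT_PRICE) / CURRENT_PRICE > PRICE_TOLERANCE:
            findings.append({"rule": "pricing_tolerance",
                             "action": "rewrite",
                             "evidence": m.group(0)})

    # Rule: no partnership mentions outside the approved list.
    for m in re.finditer(r"\bpartner(?:s|ed|ship)? with (\w+)", text, re.IGNORECASE):
        if m.group(1).lower() not in APPROVED_PARTNERS:
            findings.append({"rule": "unapproved_partnership",
                             "action": "escalate",
                             "evidence": m.group(0)})

    # Rule: no claims of guaranteed outcomes.
    m = re.search(r"\bguaranteed?\b", text, re.IGNORECASE)
    if m:
        findings.append({"rule": "no_guaranteed_outcomes",
                         "action": "block",
                         "evidence": m.group(0)})
    return findings


print(check_answer("Plans start at $39 and they partnered with Globex."))
```

Each finding carries the rule name and the exact evidence string, which is the link an audit trail needs between the action taken and the rule that fired.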

A short note on culture. Policies should limit risk without silencing useful content. Too much blocking frustrates teams and sends work around the process. Clear examples and quick paths to fix issues keep the system moving.

Compliance monitoring of AI responses | Recommendations:

Regulated teams face extra weight. Health brands must respect HIPAA rules and avoid mishandling protected health information (PHI). Financial services firms need to stay within FINRA and SEC rules. Any company touching personal data must consider GDPR and CCPA requirements. Your monitoring should check for banned claims, required disclaimers, and the presence of sensitive data in AI answers.

Build immutable audit logs. Every flagged answer should carry a timestamp, the exact text, the model version, and the rule that fired. Add reviewer notes, links to the final fix, and keep an exportable evidence pack. When an auditor asks for proof, you can hand them a clear timeline with attachments.
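In plain application code, "immutable" usually means tamper-evident in practice. A common way to get that is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below is an in-memory illustration under that assumption; a production log would also need write-once storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


class AuditLog:
    def __init__(self):
        self._entries = []

    def append(self, record):
        """Store a copy of the record, chained to the previous entry's hash."""
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        payload = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
        self._entries.append(
            {"record": json.loads(payload), "prev": prev, "hash": digest})
        return digest

    def verify(self):
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self._entries:
            payload = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev + payload).encode("utf-8")).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append({"timestamp": "2025-01-06T09:00:00Z",
            "text": "Plans start at $39",
            "model_version": "unknown",
            "rule": "pricing_tolerance"})
log.append({"timestamp": "2025-01-06T11:30:00Z",
            "reviewer_note": "Pricing page updated; answer rechecked",
            "rule": "pricing_tolerance"})
print(log.verify())
```

Exporting the entries in order gives the evidence pack, and anyone holding the last hash can confirm nothing earlier was changed.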

Run proactive test prompts for high-risk categories on a schedule. Check them weekly or monthly depending on your industry. Issue a periodic compliance report to leadership that shows total checks, breach rate, time to resolution, and what improved. This builds trust fast and gives legal something concrete to review and refine.

Guardrails to prevent unauthorized brand mentions | Recommendations:

Not every mention should go live. Build guardrails to prevent unauthorized brand mentions for both API-hosted and on-prem models. Start with allow and deny lists tied to policies. Add real-time filters that catch disallowed terms and trigger actions. Actions can include block, rewrite, add a disclaimer, or escalate to a human.
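A real-time filter with those four actions might look like the following. The policy table, the brand names, the replacement phrase, and the disclaimer wording are all hypothetical; terms that are not listed pass through untouched.

```python
import re

# Hypothetical policy table: term -> action.
POLICY = {
    "northwind": "allow",
    "initech": "rewrite",
    "umbrella": "disclaimer",
    "hooli": "escalate",
    "globex": "block",
}
DISCLAIMER = " (No affiliation is implied.)"


def apply_guardrails(text):
    """Return (safe_text_or_None, actions). None means the text was blocked."""
    actions = []
    out = text
    for term, action in POLICY.items():
        pattern = re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
        if action == "allow" or not pattern.search(out):
            continue
        actions.append((term, action))
        if action == "block":
            return None, actions
        if action == "rewrite":
            out = pattern.sub("another vendor", out)
        elif action == "disclaimer":
            out += DISCLAIMER
        # "escalate" keeps the text and leaves the decision to a reviewer.
    return out, actions


print(apply_guardrails("Works like Initech."))
print(apply_guardrails("Endorsed by Globex."))
```

Returning the list of actions alongside the text means the caller can log exactly which term triggered which action, even when the output was blocked.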

Expect edge cases. Over-blocking happens when rules are too tight. Model drift happens when vendors ship updates that change answer styles, so a test that passed yesterday can fail today. Plan for continuous evaluation with canary tests before full rollout. Keep rollback options ready so you can revert to a safer policy set when needed.
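A canary check before rollout can be sketched as: run the candidate policy on a small labeled set, measure over-blocking and misses, and keep the current policy if the candidate fails. The policy functions, canary texts, and limits here are made up for illustration.

```python
def evaluate(policy, canary_cases):
    """policy(text) returns True when it blocks. Returns (overblock_rate, miss_rate)."""
    safe = [c for c in canary_cases if c["safe"]]
    unsafe = [c for c in canary_cases if not c["safe"]]
    overblock = sum(policy(c["text"]) for c in safe) / len(safe) if safe else 0.0
    miss = sum(not policy(c["text"]) for c in unsafe) / len(unsafe) if unsafe else 0.0
    return overblock, miss


def promote_or_rollback(current, candidate, canary_cases,
                        max_overblock=0.10, max_miss=0.0):
    """Promote the candidate only if it stays within both limits."""
    overblock, miss = evaluate(candidate, canary_cases)
    if overblock <= max_overblock and miss <= max_miss:
        return candidate
    return current  # keep (or roll back to) the known-safe policy


# Hypothetical canary set and policies.
canary = [
    {"text": "We partner with Northwind", "safe": True},
    {"text": "Compare us with Globex", "safe": True},
    {"text": "Endorsed by Globex", "safe": False},
]


def current_policy(text):
    return "endorsed by globex" in text.lower()


def too_tight_policy(text):
    return "globex" in text.lower()  # also blocks the harmless comparison


print(evaluate(too_tight_policy, canary))
```

In this example the tighter rule catches the unsafe case but blocks half of the safe ones, so `promote_or_rollback` keeps the current policy.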

Wrap this with governance. Use approval workflows for new rules, version policies with change logs, and record who approved what. Assign clear ownership so there is no finger pointing if something slips. When everyone can see the rule set and the history, teams move faster with less worry.

A last thought from me for the busy CEO. You want fewer surprises and more signal. AI search and chat answers are becoming a first impression for many buyers, especially during big buying seasons or end-of-quarter research sprints. When you can see your brand’s presence across models, correct errors quickly, and tie that work to revenue, you gain control. Not perfect control. Enough control to make better bets, faster, with confidence that the story people read about your brand is the story you intended.
