AI is already rewriting the top of Google results, and that has real pipeline impact. I can measure when AI Overviews appear, whether my brand is cited, how stable those results are, and how that changes clicks. I track it, tag it, trend it, then tie it back to leads. That is the play.

AI snapshots SERP tracking

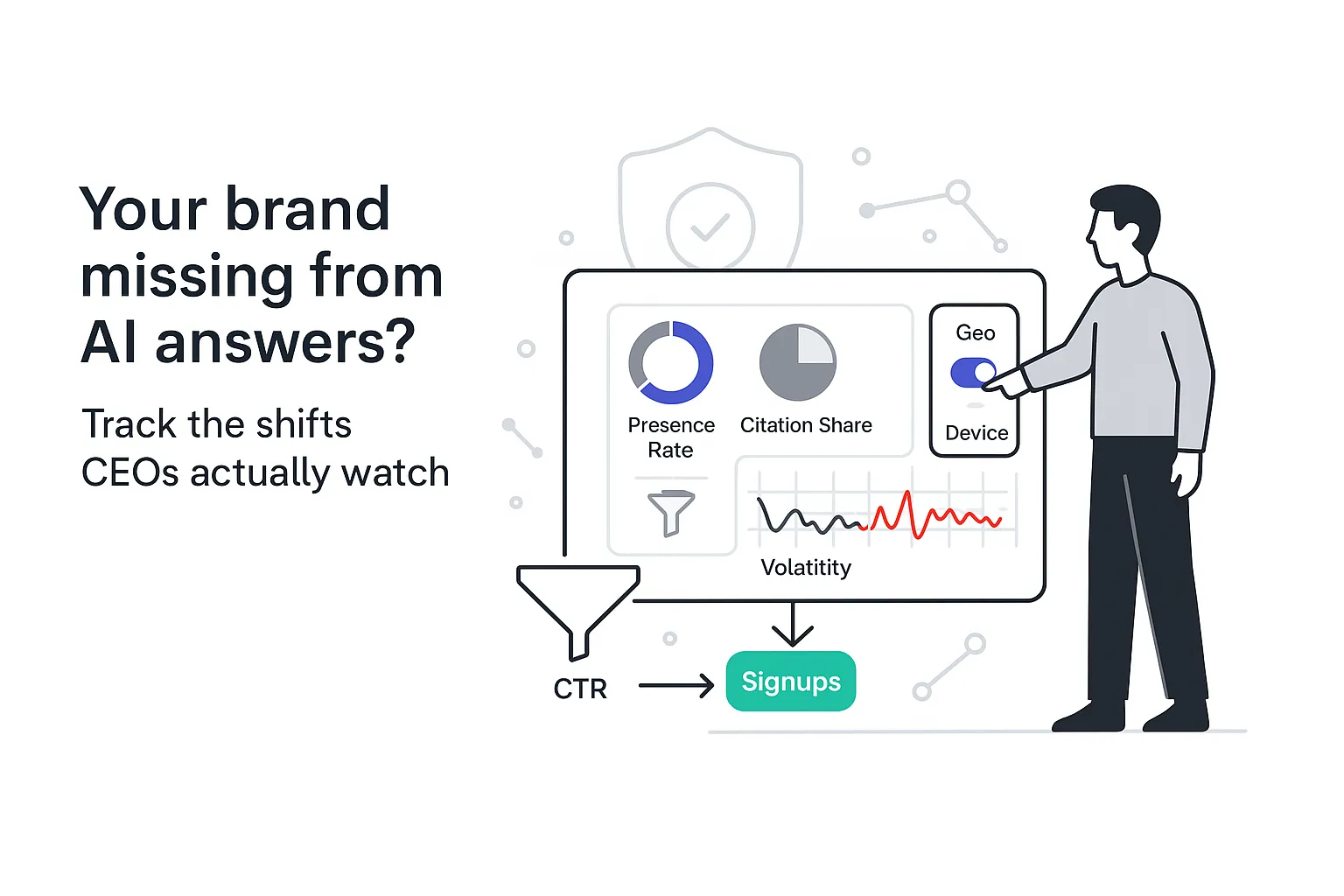

What can I measure and why does it matter? Short answer: I can quantify presence, visibility, and movement inside AI Overviews, then connect it to CTR and signups. If a buyer sees an AI snapshot first, my brand either appears or it vanishes. That visibility gap affects who gets the next meeting.

Quick hits CEOs care about when I brief them:

- Presence rate: percent of tracked queries showing an AI Overview

- Share of voice: my citation share versus others inside the snapshot

- Citation wins and losses: net change by domain and page

- Volatility: day-to-day churn of citations and layout

- Competitor movement: who entered, who dropped, and where

Hero visual, explained in plain English:

[AI Overview on desktop - annotated]

1) Summary box: Concise AI-generated answer at the top

2) Initial citations: [yourdomain.com] [industryguide.org] [vendor.edu]

3) Follow-up chips: Pricing • Pros and cons • Alternatives • Implementation

4) Generate / Show more: Expands to reveal more citations and details

5) Organic listings below: Traditional blue links pushed down the page

Two reactions can both be true. AI Overviews feel unpredictable. Yet I can track them in consistent ways that help me forecast leads and protect my brand’s visibility.

Rank tracking for AI snapshots

I set up tracking in four steps so the data actually drives decisions.

1) Choose keyword sets by funnel stage

- Top: problem statements and category terms

- Mid: comparisons, features, solution types

- Bottom: service keywords, pricing, near-purchase terms

2) Capture AI Overview states daily

- Store HTML or screenshots of the snapshot element

- Save layout states and follow-up chips

- Daily capture, weekly rollups: I still record daily because volatility hides inside weekly averages

3) Tag by geo and device

- US, UK, CA, AU at a minimum if I sell across regions

- Track desktop and mobile as separate segments

4) Set alerts

- Daily ping when presence rate or citations shift beyond a threshold

- Weekly summary of gains and losses by domain

If I use Advanced Web Ranking, I add the Google Desktop and Mobile Universal engines in Project Settings > Search Engines and review the tool's monitoring preferences. I then filter keywords that trigger AI Overviews from Ranking > Keywords to keep my tracking set focused.

I align on KPIs I can take to the leadership table:

- Presence rate for AI Overviews: percent of tracked queries triggering the snapshot

- Citation count by brand and domain: how often my site appears across all tracked queries

- Top-of-snapshot citation share: share of initial citations that include my brand

- CTR lift proxy: compare Search Console CTR on days a page is cited versus not cited for the same query, device, and geo

- Leads from cited pages: tie page-level signups to periods when those pages are cited, then compare against periods when they are not
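The CTR lift proxy can be sketched in a few lines of Python. This is a minimal sketch, assuming I have already joined daily Search Console clicks and impressions with the tracker's cited/not-cited flag; the `DailyRow` fields and the `ctr_lift_by_segment` name are hypothetical, not part of any tool's export. It pools clicks and impressions per query, device, and geo, then subtracts the not-cited CTR from the cited CTR.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class DailyRow:
    # Hypothetical joined record: Search Console clicks/impressions plus the tracker's citation flag
    query: str
    device: str
    geo: str
    date: str
    cited: bool
    clicks: int
    impressions: int


def ctr_lift_by_segment(rows):
    """Return {(query, device, geo): cited CTR minus not-cited CTR} for segments seen in both states."""
    # totals[segment][cited] holds [clicks, impressions] pooled across days
    totals = defaultdict(lambda: {True: [0, 0], False: [0, 0]})
    for r in rows:
        bucket = totals[(r.query, r.device, r.geo)][r.cited]
        bucket[0] += r.clicks
        bucket[1] += r.impressions

    lifts = {}
    for segment, states in totals.items():
        cited_clicks, cited_impr = states[True]
        plain_clicks, plain_impr = states[False]
        # No comparison is possible without impressions in both states
        if cited_impr == 0 or plain_impr == 0:
            continue
        lifts[segment] = cited_clicks / cited_impr - plain_clicks / plain_impr
    return lifts


rows = [
    DailyRow("soc 2 process", "mobile", "US", "2024-06-01", True, 30, 1000),
    DailyRow("soc 2 process", "mobile", "US", "2024-06-02", False, 18, 900),
]
print(ctr_lift_by_segment(rows))
```

With 30 clicks on 1,000 impressions on the cited day and 18 clicks on 900 impressions on the uncited day, the sketch reports a lift of about one percentage point (0.03 minus 0.02). Pooling clicks and impressions, rather than averaging daily CTRs, keeps low-impression days from skewing the comparison.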

What a tracker must capture for this to work:

- SGE presence detection per query and date (including whether the snapshot is auto-shown or requires Generate)

- Citation extraction (domain, URL, and position in initial vs expanded view)

- Device and geo support (desktop and mobile, segmented by region)

- Historical snapshots (daily HTML or image storage to analyze volatility)

- Export or API access for joining with Search Console and analytics

A note on KPIs for busy quarters. During Q4 planning or right after a core update, I keep the list tight: presence rate, top citation share, and lead signups tied to cited pages. I widen the dashboard later.

Need quick checks outside a full tracker? I use SERP checkers or a free Keyword Rank Checker to spot-test where AI Overviews appear and how rankings shift.

Google AI Overviews tracking

AI Overviews (previously surfaced via SGE) assemble a short answer and cite pages that support that answer. I may see a visible box right after ads, a Generate or Show more action, and follow-up chips that lead to expanded content. It varies by query, user, device, and region. That variability is why daily tracking matters.

What tracking actually captures:

- Presence: does an AI Overview appear for that query on that device (auto vs on-demand Generate)

- Layout: initial summary only or expanded state, plus the chip set

- Citations: domains and URLs in the initial view and expanded section

- Follow-up chips: text labels and their order, since chips drive secondary clicks

Before and after example:

Before: No AI Overview

1) Ads

2) Top organic result

3) People Also Ask

4) Additional organic results

After: AI Overview present

1) Ads

2) AI Overview with 3 initial citations and chips

3) Organic results pushed below the fold

Why this matters for B2B: a query like "SOC 2 process," "managed IT pricing," or "cloud migration phases" might now trigger an AI snapshot. If my page is cited, I gain trust and often clicks. If it is not, my organic result might still rank, yet see lower CTR. That gap can ripple through pipeline forecasts in a very real way. Call it pragmatic, not hype: Google AI Overviews tracking gives me early warning when the playing field moves.

I monitor AI Overviews as one SERP feature alongside classic elements like People Also Ask, video packs, and local units. The full picture shows where attention flows on the page. For rank-tracking fundamentals, I point teammates to a basic rank-tracking primer and a short guide on checking whether a ranking has shifted. For vendor selection, comparisons of rank trackers and SERP checkers, plus roundups of SEO tools, give broader context.

SGE visibility tracking on desktop vs mobile

Desktop and mobile show the same idea with different weight.

Desktop patterns to watch:

- Wider summary card and a bit more breathing room

- Three initial citations visible most of the time

- Chips often sit right under the summary and are easy to scan

Mobile patterns to watch:

- The snapshot can push organic results far below the fold

- Scroll depth matters, and chips are more prominent

- Show more expands to a longer panel with more citations

Annotated view

Desktop

[Summary] [Citations: 3] [Chips inline]

Organic results visible right below

Mobile

[Summary]

[Chips stacked and tappable]

[Initial citations]

[Show more] expands panel

Organic results appear after a long scroll

Advice that saves time: I create separate projects for desktop and mobile, tag everything by device, and isolate CTR impact by segment. I often see higher mobile churn in service categories where questions keep changing. That is where snapshot volatility tracking earns its keep, because small mobile shifts can swing CTR in or out of the margin promised to a board. For SGE visibility tracking, I keep both devices in the weekly readout, not just desktop out of habit.

Method note on volatility: I like a simple metric, 1 minus the Jaccard similarity of consecutive days' citation sets, where Jaccard similarity is the size of the intersection divided by the size of the union. It turns churn into a trend line leaders can understand.
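The volatility metric is small enough to sketch directly. A minimal Python version, assuming each query's citations are stored per day as lists of domains (or URLs) keyed by ISO date strings, so sorting the keys puts the days in order; the function names are illustrative.

```python
def jaccard(a, b):
    """Size of the intersection over size of the union; two empty sets count as identical."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def daily_volatility(citations_by_day):
    """Map each day after the first to 1 - Jaccard(previous day's citations, that day's citations)."""
    # ISO date strings ("YYYY-MM-DD") sort chronologically
    days = sorted(citations_by_day)
    return {
        today: 1.0 - jaccard(set(citations_by_day[prev]), set(citations_by_day[today]))
        for prev, today in zip(days, days[1:])
    }


history = {
    "2024-06-01": ["a.com", "b.com", "c.com"],
    "2024-06-02": ["a.com", "b.com", "d.com"],
}
print(daily_volatility(history))  # {'2024-06-02': 0.5}
```

The two days share 2 of 4 distinct sources, so volatility for June 2 is 0.5. Treating two empty days as identical (volatility 0) is my own assumption for stretches where no snapshot appeared.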

Monitor AI Overviews appearance by query

Not every query triggers an AI snapshot. I segment keywords by intent and commercial value, then measure appearance rate weekly by cluster.

A simple segmentation I can set up fast:

- Problem awareness cluster: pain points and early research

- Solution research cluster: how it works, comparisons, integrations

- Purchase cluster: pricing, timeline, service type, demo intent

Priority rule of thumb: I focus first on clusters with more than a 40 percent appearance rate. Those clusters see the biggest visibility shifts.
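Appearance rate by cluster and the 40 percent rule of thumb reduce to a count and a filter. A minimal sketch, assuming each tracked keyword, device, and date contributes one `(cluster, appears)` observation for the week; the function names and cluster labels are hypothetical.

```python
from collections import defaultdict


def appearance_rate_by_cluster(observations):
    """observations: (cluster, appears) pairs, one per tracked keyword, device, and date."""
    seen = defaultdict(int)
    appeared = defaultdict(int)
    for cluster, appears in observations:
        seen[cluster] += 1
        appeared[cluster] += int(appears)
    return {cluster: appeared[cluster] / seen[cluster] for cluster in seen}


def priority_clusters(rates, threshold=0.40):
    """Clusters whose appearance rate exceeds the threshold, highest rate first."""
    hot = [cluster for cluster, rate in rates.items() if rate > threshold]
    return sorted(hot, key=lambda cluster: rates[cluster], reverse=True)


week = [
    ("purchase", True), ("purchase", True), ("purchase", False),
    ("problem_awareness", True), ("problem_awareness", False), ("problem_awareness", False),
]
rates = appearance_rate_by_cluster(week)
print(priority_clusters(rates))  # ['purchase']
```

The purchase cluster shows a snapshot in 2 of 3 observations and clears the threshold; the problem awareness cluster, at 1 in 3, does not.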

Export schema I hand to an analyst:

- keyword

- cluster

- device

- date

- appears (Y or N)

- follow_up_chips (list)

- citations (list of domain and URL)

- notes
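The export schema maps naturally onto a small record type. A sketch using Python's standard `csv` and `json` modules, assuming list fields are stored as JSON arrays inside CSV cells so they survive a round trip into a spreadsheet or warehouse; `SnapshotRow` and `to_csv` are hypothetical names.

```python
import csv
import io
import json
from dataclasses import dataclass, field


@dataclass
class SnapshotRow:
    keyword: str
    cluster: str
    device: str
    date: str  # ISO date, e.g. "2024-06-01"
    appears: bool
    follow_up_chips: list = field(default_factory=list)
    citations: list = field(default_factory=list)  # (domain, url) pairs
    notes: str = ""


FIELDS = ["keyword", "cluster", "device", "date", "appears",
          "follow_up_chips", "citations", "notes"]


def to_csv(rows):
    """Serialize rows to CSV text, storing list fields as JSON arrays inside cells."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(FIELDS)
    for r in rows:
        writer.writerow([
            r.keyword,
            r.cluster,
            r.device,
            r.date,
            "Y" if r.appears else "N",
            json.dumps(r.follow_up_chips),
            json.dumps([{"domain": d, "url": u} for d, u in r.citations]),
            r.notes,
        ])
    return buf.getvalue()


print(to_csv([SnapshotRow(
    "managed it pricing", "purchase", "mobile", "2024-06-01", True,
    ["Pricing", "Alternatives"], [("example.com", "https://example.com/pricing")],
)]))
```

JSON inside cells is a design choice: a plain delimiter such as a comma or pipe would collide with chip labels or URLs that contain the same character.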

Mini playbook for new queries and Generate prompts:

- Add 10 to 20 new queries per week from sales calls or chat transcripts

- Watch for Generate prompts where the snapshot is not auto-shown

- If prompting unlocks a snapshot reliably, keep tracking it and tag it as prompted

- Note which chips surface buyer questions my content does not cover yet

- Ship quick content updates when chips change in ways that match my ICP

When I monitor AI Overviews appearance by query this way, my content roadmap stops being guesswork. It becomes a weekly signal linked to revenue.

AI Overviews share of voice

Share of voice inside AI Overviews answers a simple question: across tracked queries, what portion of citations belongs to my brand versus others, weighted by search volume and business value.

How I calculate it without overthinking it:

- For each query, award points to cited domains

- Weight those points by monthly search volume and an internal value score

- Weight initial citations higher than expanded-view citations (they are seen more)

- Sum by brand across all queries and devices

- Divide by the total points to get AI Overviews share of voice
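The share of voice calculation above can be sketched as a weighted tally. This is a minimal version, assuming each query and device observation arrives as a dict with monthly volume, an internal value score, and its cited domains tagged `initial` or `expanded`. It treats each domain as a brand (a brand with several domains would need a domain-to-brand map first), and the 2x weight for initial citations is an illustrative assumption, not a standard.

```python
from collections import defaultdict

# Illustrative weights: initial-view citations count double because more people see them
INITIAL_WEIGHT = 2.0
EXPANDED_WEIGHT = 1.0


def share_of_voice(observations):
    """
    observations: one dict per query and device, e.g.
      {"volume": 1000, "value": 2, "citations": [("mine.com", "initial"), ...]}
    Returns {domain: share}; shares sum to 1.0 when any citations exist.
    """
    points = defaultdict(float)
    for obs in observations:
        query_weight = obs["volume"] * obs["value"]
        for domain, position in obs["citations"]:
            position_weight = INITIAL_WEIGHT if position == "initial" else EXPANDED_WEIGHT
            points[domain] += query_weight * position_weight
    total = sum(points.values())
    if total == 0:
        return {}
    return {domain: p / total for domain, p in points.items()}


sample = [{"volume": 1000, "value": 2,
           "citations": [("mine.com", "initial"), ("rival.com", "expanded")]}]
print(share_of_voice(sample))
```

For this single query, the initial citation for my domain earns 4,000 points and the rival's expanded citation earns 2,000, so my share is two thirds.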

What I show on a dashboard:

- Stacked bars by brand, by cluster

- Time-series lines showing weekly movement

- A weekly table of winners and losers with net citation change

I fold in competitor presence in AI Overviews here. I track entries, exits, and depth of citations for the competitor domains I run into in sales. Two alerting rules keep the team focused:

- SoV drop alert: week-over-week drop greater than 20 percent in any revenue cluster

- Surge alert: any competitor with a 30 percent week-over-week jump in a cluster I sell into
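The two alert rules can be sketched as relative week-over-week comparisons. Assumptions: share of voice is stored per cluster and domain for each week, the 20 and 30 percent thresholds are relative changes rather than percentage points, and the clusters I sell into are the same list as my revenue clusters. The function and parameter names are hypothetical.

```python
def sov_alerts(last_week, this_week, my_domain, revenue_clusters,
               drop_threshold=0.20, surge_threshold=0.30):
    """
    last_week / this_week: {cluster: {domain: share_of_voice}}.
    Returns a list of human-readable alert strings.
    """
    alerts = []
    for cluster in revenue_clusters:
        before = last_week.get(cluster, {})
        after = this_week.get(cluster, {})

        # Drop alert: relative week-over-week decline in my own share
        mine_before = before.get(my_domain, 0.0)
        mine_after = after.get(my_domain, 0.0)
        if mine_before > 0 and (mine_before - mine_after) / mine_before > drop_threshold:
            alerts.append(f"SoV drop: {my_domain} in {cluster} {mine_before:.0%} -> {mine_after:.0%}")

        # Surge alert: relative week-over-week jump for any competitor
        for domain, share in after.items():
            if domain == my_domain:
                continue
            prior = before.get(domain, 0.0)
            if prior > 0 and (share - prior) / prior > surge_threshold:
                alerts.append(f"Surge: {domain} in {cluster} {prior:.0%} -> {share:.0%}")
    return alerts


last = {"purchase": {"mine.com": 0.50, "rival.com": 0.20}}
this = {"purchase": {"mine.com": 0.35, "rival.com": 0.30}}
for alert in sov_alerts(last, this, "mine.com", ["purchase"]):
    print(alert)
```

A competitor with no prior share is not flagged as a surge here; I treat new entrants as a separate entry event, matching the entries and exits I already track.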

The outcome is simple. I see risk and opportunity faster, and content spend goes where the AI box directs attention.

AI snapshot citation tracking

Citations make or break visibility inside the snapshot. If I track them cleanly, the content plan gets clearer.

I collect and normalize citations with five fields:

- Domain and URL

- Content type: blog, docs, case study, review, landing page

- Position: initial view versus expanded

- Recurrence: count per query and across queries

- Date seen and device

I map every citation back to site structure:

- Which services pages earn citations

- Which thought leadership pages land in initial view

- Which pages never cite well and need upgrades

I maintain a sources leaderboard that highlights the domains AI prefers for my topics. Then I build a content backlog from patterns I see. If case studies win citations for peers, I add more proof pages. If docs keep surfacing, I ship clear, well-sourced explanations that match chip language.
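The normalization fields and the sources leaderboard can be sketched with the Python standard library (3.9+ for `str.removeprefix`). This assumes each citation record carries at least a URL, the query it appeared on, and its position; the record shape and function names are hypothetical. Dropping a leading `www.` so `www.example.com` and `example.com` count together is a simplification: other subdomains stay separate.

```python
from collections import Counter
from urllib.parse import urlsplit


def normalize_domain(url):
    """Host without a leading 'www.'; urlsplit already lowercases .hostname."""
    host = urlsplit(url).hostname or ""
    return host.removeprefix("www.")


def sources_leaderboard(citations, top_n=10):
    """
    citations: dicts with "url", "query", and "position" ("initial" or "expanded").
    Ranks domains by distinct queries citing them, then by initial-view citations.
    """
    queries_by_domain = {}
    initial_counts = Counter()
    for c in citations:
        domain = normalize_domain(c["url"])
        queries_by_domain.setdefault(domain, set()).add(c["query"])
        if c["position"] == "initial":
            initial_counts[domain] += 1
    ranked = sorted(
        queries_by_domain,
        key=lambda d: (len(queries_by_domain[d]), initial_counts[d]),
        reverse=True,
    )
    return [(d, len(queries_by_domain[d]), initial_counts[d]) for d in ranked[:top_n]]


seen = [
    {"url": "https://www.a.com/guide", "query": "soc 2 process", "position": "initial"},
    {"url": "https://a.com/pricing", "query": "soc 2 cost", "position": "expanded"},
    {"url": "https://b.com/", "query": "soc 2 process", "position": "initial"},
]
print(sources_leaderboard(seen))  # [('a.com', 2, 1), ('b.com', 1, 1)]
```

Ranking by distinct queries first rewards breadth across a topic; initial-view counts break ties because those citations are seen more.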

I also read AI Overview citations in the context of the whole SERP. A page might lose its AI citation one week yet win a People Also Ask answer or a featured snippet the next. All of it affects clicks.

How my website can get featured in AI Overviews

I cannot force my way into the snapshot, yet I can make it much more likely. I follow a clear set of criteria that favors real buyers and helpful pages.

- Cover the full intent on a single, scannable page: include a short summary, an explainer, a simple list, and a short FAQ. Match common chip labels in subheads.

- Write concise answers in natural language: short paragraphs and plain words. Use question-based subheads for common follow-ups.

- Strengthen E-E-A-T with specifics: add author credentials, link to primary sources, and include first-party data or examples from my customer base.

- Keep content fresh: update dates, refresh stats, and note what changed after major updates or seasonal shifts.

- Add schema where it helps: FAQ, HowTo, and Review schema can clarify meaning and context for parsers.

- Earn reputable mentions: quality links and mentions from trusted sources still matter. So do citations in industry reports and conference decks.

- Improve page experience: fast load, clean layout, readable on mobile. Avoid noisy pop-ups where the answer should be.

Quick wins worth shipping this month:

- Add a one-paragraph summary at the top that mirrors how buyers phrase the query

- Cite authoritative sources and show them in context

- Build out topical clusters so my page is backed by adjacent content that goes deeper

Example page structure that often earns citations:

H1: The service or problem stated in buyer language

Intro: 2 to 3 lines that answer the core question

Section: Short explainer with a numbered list

Section: Pros and cons, or risks and mitigations

Section: Pricing factors and timelines

Mini FAQ: 3 to 4 crisp Q and A items

References: 3 to 5 reputable sources

Schema: FAQ or HowTo where relevant
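FAQ schema is usually emitted as JSON-LD. A minimal Python sketch that builds schema.org `FAQPage` markup from question and answer pairs; the output goes inside a `<script type="application/ld+json">` tag on the page. The helper name is hypothetical, and the markup only helps parsers read the Q and A structure; it does not guarantee a rich result or a citation.

```python
import json


def faq_jsonld(pairs):
    """Return schema.org FAQPage JSON-LD for a list of (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


print(faq_jsonld([
    ("How long does a SOC 2 audit take?", "Placeholder answer text for illustration."),
]))
```

Generating the markup from the same question and answer pairs that render on the page keeps the two from drifting apart.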

FAQ

Q: What is an AI snapshot in Google’s SERP, and how is it different from a featured snippet?

A: An AI snapshot is a summary box that blends information from multiple sources and cites them. A featured snippet is a single excerpt from one page. See Google AI Overviews tracking for layout details.

Q: How often should I track AI Overviews, and on which devices or geos?

A: Daily is the right cadence for volatile topics, weekly for stable niches. Track both desktop and mobile, and split by US, UK, CA, and AU if you sell across those regions. See SGE visibility tracking on desktop vs mobile.

Q: Do traditional rankings still matter if an AI Overview appears?

A: Yes. Organic rankings still drive clicks, yet they sit lower when a snapshot shows. Measure CTR on days with and without snapshots to see the delta. See AI snapshots SERP tracking.

Q: Which tools can reliably track AI Overviews and citations?

A: Use a rank tracker that captures the snapshot HTML, logs citations, and stores device-level history. Look for SGE presence detection, citation extraction, and export options. See Rank tracking for AI snapshots.

Q: How do I calculate ROI from AI Overview visibility?

A: Attribute leads and signups from cited pages to the periods when those pages are cited, compare them against periods when the same pages are not cited, then weigh that difference against content production and tracking costs. The result estimates the return from improved visibility. See Rank tracking for AI snapshots.

Q: Why do AI snapshots appear or disappear for the same query?

A: Google tests layouts and varies results by intent, geo, and device. Seasonality and updates can also shift which queries trigger snapshots. See Monitor AI Overviews appearance by query.

Q: Can I influence which of my pages get cited?

A: Not directly. I can improve the odds with helpful, current content, clear answers, and signals of trust. See How my website can get featured in AI Overviews.

Q: How do I monitor competitor presence inside AI Overviews?

A: Track citation entries and exits by domain and watch cluster-level share of voice. Alert on surges so content moves can be planned. See AI Overviews share of voice.

Seasonal note

During budget season, decision makers skim dashboards, not docs. I keep the top card simple: presence rate, citation share, and signups from cited pages. That clarity builds trust and, quietly, keeps the pipeline steady.
