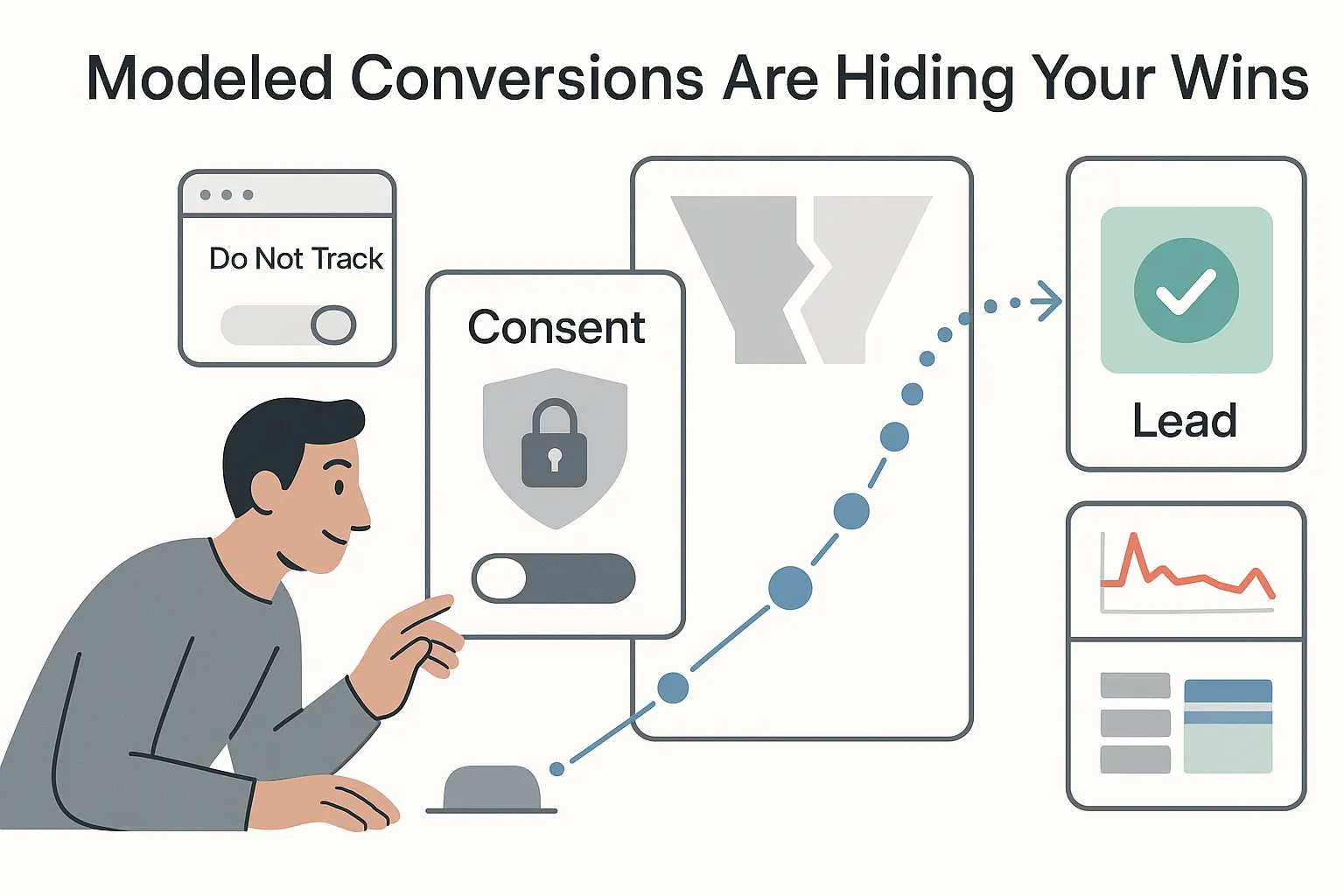

I care about revenue, not vanity stats. Still, when I ship a consent banner or Safari tightens the screws again, my reports wobble. Traffic looks fine. Conversions dip. Cost per lead spikes. That gut punch often isn’t real performance falling. It’s measurement gaps. This is where modeled conversions step in, quietly filling the holes so my B2B pipeline math still adds up.

What are modeled conversions in GA4

If you’re asking what GA4 modeled conversions are, here’s the short version. GA4 modeled conversions estimate conversions I couldn’t observe directly because of consent choices, browser limits, cookie loss, or cross-device journeys. GA4 uses aggregated, privacy-safe signals to recover those missing conversions and folds them into reports so totals reflect both observed and inferred activity. In the GA4 interface, Google now labels conversions as Key events. Most marketers and Google Ads still say conversions, so you’ll see both terms (see About conversions).

When does modeling kick in? Typically when the system sees gaps and has enough reliable data to learn from:

- Consent mode is enabled and sending cookieless pings when consent is denied

- Sufficient tag coverage exists across my site or app

- Minimum volume thresholds are met so the model has signal

- I operate in regions with consent requirements and a share of users decline tracking

- The system detects measurable holes due to things like iOS limits or third-party cookie loss

Privacy matters here. Google has stated that it doesn’t identify individual users for this modeling and doesn’t allow fingerprint IDs. The models run on aggregated data such as historical conversion rates, device type, time of day, and geo, so there is no person-level tracking.

B2B examples that benefit from GA4 modeled conversions:

- Demo request form submissions

- Booked consults or discovery calls

- Proposal or pricing requests

- Chatbot qualified leads

- High-intent bundles such as a deep scroll plus a CTA click that fires a lead event

How GA4 models conversions

At a high level, GA4 trains machine learning models on observed, consented conversion data. It looks at patterns across channel, campaign, device, browser, geo, time, and page context. Then it applies those learned patterns to similar traffic where conversions were not observable, inferring the conversions likely to have happened. As data shifts, the models recalibrate to keep estimates aligned with current behavior. For more background, see Google’s overview on why conversion modeling will be crucial in a world without cookies.

Google has described using holdback validation, comparing predicted results against a slice of withheld observed data, to keep the model honest.
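
To make the holdback idea concrete, here is a minimal TypeScript sketch. It is not Google’s validation code, which isn’t public. The segment labels, the counts, and the 10% tolerance are all made up for illustration. It only shows the shape of the check: predict for a withheld slice, compare against what was actually observed there, and flag segments that miss by too much.

```typescript
// Illustrative only: compares predicted conversions with observed
// conversions from a slice of data that was withheld from training.
interface HoldbackSlice {
  segment: string;   // hypothetical label, e.g. channel + device
  predicted: number; // conversions the model estimated for the slice
  observed: number;  // conversions actually measured in the withheld slice
}

// Relative error of the prediction against the withheld observations.
function relativeError(slice: HoldbackSlice): number {
  if (slice.observed === 0) {
    return slice.predicted === 0 ? 0 : Infinity;
  }
  return Math.abs(slice.predicted - slice.observed) / slice.observed;
}

// Segments whose error exceeds the chosen tolerance.
function failingSegments(slices: HoldbackSlice[], tolerance: number): string[] {
  return slices
    .filter((s) => relativeError(s) > tolerance)
    .map((s) => s.segment);
}

const holdback: HoldbackSlice[] = [
  { segment: "paid_search/mobile", predicted: 48, observed: 50 },
  { segment: "organic/desktop", predicted: 30, observed: 40 },
];

// paid_search/mobile is off by 4%, organic/desktop by 25%,
// so with a 10% tolerance only organic/desktop is flagged.
const flagged = failingSegments(holdback, 0.1);
```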

There are guardrails. GA4 needs minimum data thresholds and decent tag coverage. It adapts to regional privacy rules. When confidence is low, modeled numbers are withheld. When modeling is present, many standard reports include a footnote indicating that totals are blended from observed and modeled sources. And again, this is aggregate modeling - not user-level identification.

Why do gaps appear in the first place? A few common culprits:

- Users deny consent to analytics or ads storage

- Safari limits cookie lifetimes through Intelligent Tracking Prevention (ITP), and iOS apps must ask permission to track under Apple’s App Tracking Transparency (ATT) framework

- Third-party cookies are already blocked by default in Safari and Firefox, and Chrome’s Privacy Sandbox timeline has shifted repeatedly

- Ad blockers or privacy-focused browsers cut measurement

GA4 conversion modeling explained

Here’s a simple walkthrough that mirrors how it plays out in practice for me:

- I deploy complete tagging and Consent Mode v2. Conversion events are tracked on forms, chats, and booking flows.

- GA4 collects observed conversions from users who consent and are measurable.

- The machine learning system learns patterns from those observed conversions.

- GA4 infers conversions for similar unobserved traffic where consent was denied or identifiers were missing.

- Results are blended and annotated in reports when modeling is applied.

- The model retrains and revalidates on a rolling basis so estimates don’t drift.
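
The observe, learn, infer, and blend steps above can be sketched as a toy calculation. This is a deliberately crude stand-in, not GA4’s model: it learns a single conversion rate per segment from consented traffic and applies it to unconsented sessions in the same segment. The segment labels, the session counts, and the minimum-signal threshold of 100 sessions are assumptions chosen for the example.

```typescript
// Toy illustration only. GA4's real models are not public and use far
// more signal than one conversion rate per segment.
interface SegmentTraffic {
  segment: string;             // hypothetical label, e.g. channel + browser
  consentedSessions: number;   // sessions that could be measured
  observedConversions: number; // conversions measured in those sessions
  unconsentedSessions: number; // sessions seen only as cookieless pings
}

interface BlendedRow {
  segment: string;
  observed: number;
  modeled: number;
  total: number;
}

function blendSegments(rows: SegmentTraffic[], minConsentedSessions: number): BlendedRow[] {
  return rows.map((r) => {
    // Withhold estimates when there is too little observed data to learn from.
    const enoughSignal =
      r.consentedSessions > 0 && r.consentedSessions >= minConsentedSessions;
    // Observed conversion rate applied to the unobserved sessions.
    const modeled = enoughSignal
      ? (r.observedConversions * r.unconsentedSessions) / r.consentedSessions
      : 0;
    return {
      segment: r.segment,
      observed: r.observedConversions,
      modeled,
      total: r.observedConversions + modeled,
    };
  });
}

const blended = blendSegments(
  [
    { segment: "paid_search/safari", consentedSessions: 1000, observedConversions: 20, unconsentedSessions: 500 },
    { segment: "referral/firefox", consentedSessions: 40, observedConversions: 2, unconsentedSessions: 60 },
  ],
  100
);
// paid_search/safari: 20 observed + 10 modeled = 30 total
// referral/firefox: below the threshold, so nothing is modeled and the total stays at 2
```

The second row is the point of the threshold: with only 40 consented sessions, any rate learned from them would be noise, so the sketch reports observed conversions only.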

Quick note so you don’t mix terms. Behavioral modeling with consent mode fills gaps in metrics like users and sessions when consent is denied. What I’m covering here is specific to conversions. That distinction matters when I explain changes to a leadership team.

GA4 modeled conversions reporting

Where do I see the numbers? In GA4, modeled values are blended into normal reports once eligible. I look in:

- Reports > Engagement > Conversions/Key events. Footnotes often mention modeling when it’s in play.

- The Advertising workspace. Attribution > Model comparison, Conversion paths, and Performance are core views.

- Explorations for analysis. Footnotes are less visible there, so I check standard reports for annotations to know when modeling is active.

Totals are blended by default - observed plus modeled - because that’s how decisions are made in the real world. Budgets don’t pause because a cookie expired. GA4 data-driven attribution (DDA) is connected to this too: DDA reflects modeled conversions when it assigns value across touchpoints.

Analyst callouts I share with stakeholders:

- Modeling can shift channel splits, especially for Safari and iOS traffic

- New consent banners or CMP changes can increase the modeled share

- Tag fixes can reduce untracked direct and improve attribution clarity

- Expect some stabilization time after any major privacy or tagging change

GA4 modeled conversions setup guide

For B2B lead generation, the right setup shortens the time to trustworthy numbers and keeps finance calm. My sequence:

- I audit tagging with gtag.js or Google Tag Manager across every conversion point: lead forms, call tracking, chat tools, meeting bookings, file downloads, and gated content.

- I define clean event parameters. I pass form name, lead type, and page context, and I mark the right events as conversions (Key events) in GA4.

- I enable Consent Mode v2 through a compliant consent management platform. I map analytics_storage, ad_storage, ad_user_data, and ad_personalization, and I respect region-aware defaults in the EEA and UK.

- If I run paid media, I link Google Ads and confirm Key events are shared and mapped to conversion actions.

- I QA with DebugView and Tag Assistant. I check server responses, consent states, and event names. I verify that denied consent still sends cookieless pings.

- I document baselines and expected lag. Modeling shows up only after thresholds are met; it needs time to learn.

- I avoid common pitfalls: double-tagging the same event, consent states firing in the wrong order, redirect thank-you pages with no tag, iframe forms that never send events, or firewalls blocking measurement.
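
For the event-parameter step, a lead event might look like the sketch below. `generate_lead` is one of GA4’s recommended event names. The parameters `form_name`, `lead_type`, and `page_context` are my own hypothetical custom parameters, and they would need to be registered as custom dimensions before they show up in reports. The `gtag` function here is a stand-in that records calls so the example runs outside a browser. On a real page it comes from the Google tag snippet.

```typescript
// Stand-in for the gtag() helper that the Google tag snippet defines.
// It records calls in a local array instead of pushing to window.dataLayer.
type GtagCall = [command: string, ...rest: unknown[]];
const recordedCalls: GtagCall[] = [];

function gtag(command: string, ...rest: unknown[]): void {
  recordedCalls.push([command, ...rest]);
}

// Hypothetical shape for the context passed with each lead.
interface LeadContext {
  formName: string;
  leadType: string;
  pagePath: string;
}

// Sends one lead event with a consistent set of parameters.
function trackLead(ctx: LeadContext): void {
  gtag("event", "generate_lead", {
    form_name: ctx.formName,    // hypothetical custom parameter
    lead_type: ctx.leadType,    // hypothetical custom parameter
    page_context: ctx.pagePath, // hypothetical custom parameter
  });
}

trackLead({ formName: "demo_request", leadType: "demo", pagePath: "/pricing" });
```

Routing every form, chat, and booking flow through one helper like `trackLead` is also a cheap guard against the double-tagging pitfall, because there is a single place where the event fires.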

This approach aligns with Google’s guidance. I’m giving the models the clean signal they need while staying compliant. And just like a sales process, consistency beats heroics.

GA4 consent mode modeled conversions

Consent Mode v2 is the biggest unlock for GA4 consent-mode modeled conversions. There are two broad flavors. Basic implementation pauses tags until consent. Advanced implementation lets tags send cookieless pings when consent is denied. Those pings do not store or read cookies, but they provide enough context for the model to estimate conversions that likely happened.

A few practical tips:

- I use a compliant CMP that passes analytics_storage, ad_storage, ad_user_data, and ad_personalization

- I default every consent signal to denied in the EEA and UK until the user grants consent

- I map region logic correctly so visitors outside strict regions do not inherit the wrong defaults

- I test with the Consent Debugger in Tag Assistant, confirming state changes, default states, and no accidental consent granted on first paint
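
Here is roughly what those tips look like in code, as a sketch rather than a drop-in implementation. The `consent` `default` and `update` commands, the four signal names, and the `region` and `wait_for_update` fields are part of the Google tag consent API. Everything else is an assumption: the region list is a short illustrative subset, the granted fallback for other regions is a placeholder that should come from legal review, and `onCmpDecision` is a hypothetical callback name for whatever the CMP exposes. As before, `gtag` is a stand-in that records calls so the sketch runs without a browser.

```typescript
// Stand-in for the gtag() helper from the Google tag snippet. On a real page
// that helper pushes its `arguments` object onto window.dataLayer.
const consentCalls: unknown[][] = [];

function gtag(...args: unknown[]): void {
  consentCalls.push(args);
}

type ConsentState = "granted" | "denied";

interface ConsentSignals {
  analytics_storage: ConsentState;
  ad_storage: ConsentState;
  ad_user_data: ConsentState;
  ad_personalization: ConsentState;
}

const ALL_DENIED: ConsentSignals = {
  analytics_storage: "denied",
  ad_storage: "denied",
  ad_user_data: "denied",
  ad_personalization: "denied",
};

const ALL_GRANTED: ConsentSignals = {
  analytics_storage: "granted",
  ad_storage: "granted",
  ad_user_data: "granted",
  ad_personalization: "granted",
};

// Illustrative subset of region codes; the full EEA + UK list is longer.
const STRICT_REGIONS = ["DE", "FR", "IE", "GB"];

// 1. Defaults are set first, before any measurement tag fires.
gtag("consent", "default", { ...ALL_DENIED, region: STRICT_REGIONS, wait_for_update: 500 });
// Fallback for all other regions. "granted" is an assumption, not legal advice.
gtag("consent", "default", { ...ALL_GRANTED });

// 2. Hypothetical CMP callback: update the state once the visitor decides.
function onCmpDecision(accepted: boolean): void {
  gtag("consent", "update", accepted ? ALL_GRANTED : ALL_DENIED);
}

onCmpDecision(true);
```

The order of the calls is the part worth copying. If an `update` or a measurement tag fires before the `default`, the first hit can carry the wrong consent state, which is exactly the "wrong order" pitfall from the setup list.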

Accuracy rises with better consent signaling and broader tag coverage. If modeling looks weak, I start by improving consent state accuracy and filling tag gaps instead of tuning reports.

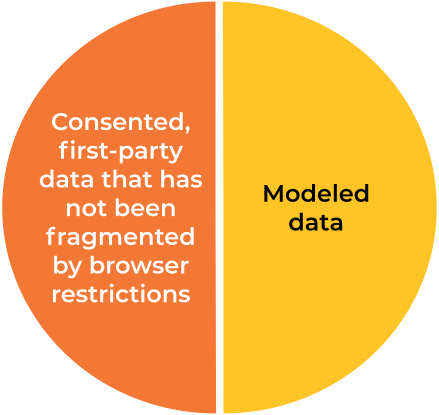

GA4 modeled vs observed conversions

Here’s the distinction. Observed conversions are the events GA4 measured directly with consent and technical coverage. Modeled conversions are the estimated ones, inferred from similar observed patterns when direct measurement is blocked. In most reports, totals are blended. GA4 does not provide a neat toggle that splits them everywhere.

How do I work with this?

- I generally can’t segment modeled vs observed in every GA4 report, so I rely on report annotations to know when modeling is present.

- When I link to Google Ads, the platform can show Consent Mode impact for ads traffic. That view estimates how many conversions were recovered via modeling on traffic where users declined consent.

- I expect larger modeled shares in privacy-strict regions, on Safari and iOS, and on sites with lower consent acceptance.

A quick caution. Turning off modeling to judge performance sounds neat but isn’t helpful. I’d punish channels with higher shares of privacy-conscious users. A better move is to compare performance before and after a banner change or tagging fix. If possible, I run regional or time-bound holdouts in ads platforms to get a sense of lift.
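
A bit of arithmetic shows why observed-only numbers mislead. GA4 doesn’t hand me a per-channel split like this, so every figure below is invented purely to illustrate the effect: two channels with the same spend and the same true lead count, but different shares of unobservable users.

```typescript
// Hypothetical numbers only; GA4 does not expose this split per channel.
interface ChannelRow {
  channel: string;
  spend: number;
  observedLeads: number;
  modeledLeads: number;
}

function costPerLead(spend: number, leads: number): number {
  return leads > 0 ? spend / leads : Infinity;
}

const channels: ChannelRow[] = [
  { channel: "paid_search", spend: 6000, observedLeads: 40, modeledLeads: 20 },
  { channel: "paid_social", spend: 6000, observedLeads: 55, modeledLeads: 5 },
];

const comparison = channels.map((c) => ({
  channel: c.channel,
  observedOnlyCpl: costPerLead(c.spend, c.observedLeads),
  blendedCpl: costPerLead(c.spend, c.observedLeads + c.modeledLeads),
}));
// paid_search: observed-only CPL 150, blended CPL 100
// paid_social: observed-only CPL about 109.09, blended CPL 100
```

On observed data alone, paid search looks about 37% more expensive per lead than paid social. With modeled leads included, the two channels are level. Cutting the paid search budget on the first reading would penalize the channel simply for attracting more privacy-conscious visitors.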

GA4 modeled conversions accuracy

Accuracy depends on signal quality. GA4 isn’t guessing; it’s estimating from patterns in my own data. The cleaner the inputs, the better the outputs. Here’s what moves GA4 modeled conversions accuracy in the right direction:

- Tag completeness across the entire lead journey

- Consent implementation quality and correct defaults by region

- Adequate traffic volume and steady conversion definitions

- Stable channel mix and landing page experience

- Consistent campaign UTMs and naming

- Regular recalibration as my site or pipeline changes
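
Consistent UTMs are the easiest item on that list to automate. The sketch below audits a landing URL before a campaign launches. The required parameter set and the lowercase, no-whitespace convention are my own assumptions about a reasonable naming policy, not a GA4 requirement.

```typescript
// Assumed house rules: these three UTMs are required, lowercase, no whitespace.
const REQUIRED_UTMS = ["utm_source", "utm_medium", "utm_campaign"] as const;

interface UtmIssue {
  param: string;
  problem: "missing" | "not_lowercase" | "has_whitespace";
}

// Returns every rule violation found in the URL's query string.
function auditUtms(landingUrl: string): UtmIssue[] {
  const params = new URL(landingUrl).searchParams;
  const issues: UtmIssue[] = [];
  for (const param of REQUIRED_UTMS) {
    const value = params.get(param);
    if (value === null || value.trim() === "") {
      issues.push({ param, problem: "missing" });
      continue;
    }
    if (value !== value.toLowerCase()) {
      issues.push({ param, problem: "not_lowercase" });
    }
    if (/\s/.test(value)) {
      issues.push({ param, problem: "has_whitespace" });
    }
  }
  return issues;
}

const found = auditUtms(
  "https://example.com/demo?utm_source=LinkedIn&utm_medium=paid_social"
);
// found: utm_source is not lowercase, utm_campaign is missing
```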

To maintain rigorous reporting, I keep this operating rhythm:

- I enforce clean UTMs and avoid the dreaded (not set) campaign entries

- I standardize conversion definitions and don’t rename events every month

- I monitor consent rates weekly and watch tagging health in Tag Assistant

- I track CMP and banner UX changes, since they can swing acceptance rates

- Where allowed, I consider server-side tagging to improve signal quality

- I validate reported conversions against CRM lead counts and call logs, investigating gaps that matter - not minor noise

- I communicate methodology notes in reporting so leaders understand why numbers shift
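
The CRM check in that list can be a few lines of code. This is a minimal sketch assuming I already have weekly counts exported from GA4 and from the CRM. The field names, the sample counts, and the 15% tolerance are hypothetical, and the tolerance is what separates gaps that matter from minor noise.

```typescript
// Hypothetical weekly export: GA4 key events next to CRM lead counts.
interface WeeklyCount {
  label: string;
  ga4KeyEvents: number;
  crmLeads: number;
}

// Signed gap of GA4 relative to the CRM (positive means GA4 reports more).
function relativeGap(row: WeeklyCount): number {
  if (row.crmLeads === 0) {
    return row.ga4KeyEvents === 0 ? 0 : Infinity;
  }
  return (row.ga4KeyEvents - row.crmLeads) / row.crmLeads;
}

// Weeks where the gap, in either direction, exceeds the tolerance.
function weeksToInvestigate(rows: WeeklyCount[], tolerance: number): string[] {
  return rows
    .filter((r) => Math.abs(relativeGap(r)) > tolerance)
    .map((r) => r.label);
}

const weekly: WeeklyCount[] = [
  { label: "week 1", ga4KeyEvents: 52, crmLeads: 50 },
  { label: "week 2", ga4KeyEvents: 70, crmLeads: 50 },
  { label: "week 3", ga4KeyEvents: 38, crmLeads: 50 },
];

// week 1 is within tolerance (+4%); week 2 (+40%) and week 3 (-24%) are not.
const toReview = weeksToInvestigate(weekly, 0.15);
```

A large positive gap usually points me toward double-tagging or spam submissions, while a large negative gap points toward missing tags or a consent change that hasn’t stabilized yet.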

When something changes that could affect modeled shares, I send a quick brief:

- What changed: CMP update, consent wording, new tag route, or a new landing page template

- Why it matters: new defaults in the EEA may push more sessions into modeled territory

- Expected impact: higher or lower modeled share, shifts in channel attribution, and any effect on reported CPL or ROAS

- Timeframe: when I expect stabilization as the model retrains

One mild contradiction to sit with. Modeled data feels abstract, yet it often paints a truer picture of marketing than raw observed data alone. Cross-device behavior and privacy controls hide parts of the journey. With solid tagging and Consent Mode, modeling recovers those signals so budget decisions mirror reality.

By now, the concept should feel less mysterious. GA4 modeled conversions exist to keep measurement whole when privacy rules or tech limits blur the edges. That means my paid search CPL doesn’t spike just because Safari clipped a cookie window. My pipeline forecast doesn’t wobble when a consent banner ships. And my leadership team sees consistent, defensible numbers that match what sales is seeing on the ground.
