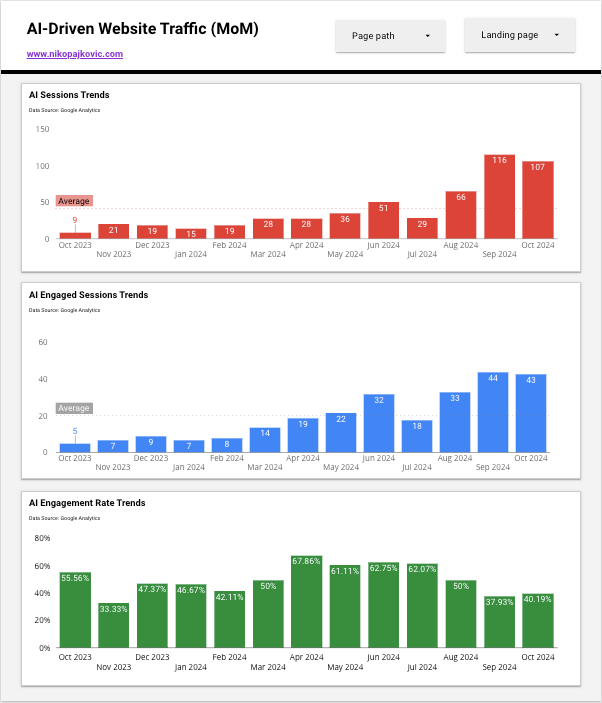

You’re probably already getting clicks from AI assistants without a clean paper trail. Some visits arrive with a tidy referrer; others sneak in as Direct. That mix feels messy, yet it’s fixable. Give me a few minutes and I’ll show how I confirm whether Perplexity AI and friends are sending leads, not just traffic.

Perplexity referral analytics

I treat Perplexity as a growing discovery channel. It cites sources, users click those sources, and pages get visits. The quick win is to confirm this with a fast GA4 diagnostic, then size the impact in terms a CEO cares about.

Two-minute diagnostic in GA4

- Path: Reports > Acquisition > Traffic acquisition

- Filter: Add a filter where Session source contains perplexity.ai (a contains match also catches www.perplexity.ai)

- Columns: Add Secondary dimension Landing page + query string

- Metrics: Make sure the Conversions column is visible

- Caveat: I expect some dark AI traffic. Mobile in-app browsers and referrer policies (for example, rel="noreferrer") can mask the referrer, so a share from Perplexity may land as Direct

- Optional cross-check: Open User acquisition and check whether there are new users from Perplexity on the same dates

For another quick walkthrough, see Larry Engel.

Now I size the business impact. I scan these KPIs, simple and fast:

- Sessions and Engaged sessions to gauge volume and quality

- Conversions and Conversion rate to judge intent

- Pipeline value: Conversions × acceptance rate × average opportunity value. If I track lead stages, I show Perplexity-sourced opportunities next to other channels

- Example: 12 conversions × 40% acceptance × $15K average opportunity = $72K potential pipeline
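The pipeline arithmetic above is easy to script so the same formula gets applied to every channel. This is a minimal sketch; the function name and the range check are my own additions, not part of any analytics tool.

```python
def pipeline_value(conversions: int, acceptance_rate: float,
                   avg_opportunity_value: float) -> float:
    """Potential pipeline = conversions x acceptance rate x average opportunity value."""
    if not 0.0 <= acceptance_rate <= 1.0:
        raise ValueError("acceptance_rate must be a fraction between 0 and 1")
    return conversions * acceptance_rate * avg_opportunity_value


# Numbers from the example above: 12 conversions, 40% acceptance, $15K average.
value = pipeline_value(12, 0.40, 15_000)
print(f"${value:,.0f}")  # prints $72,000
```

Running the same function over each channel's conversions gives a like-for-like comparison row for the leadership report.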

What I look for:

- Short spikes tied to content updates or a fresh blog post

- A few hero pages that pull most of the clicks

- Conversion clusters on forms and calendar bookings

- Early signs of AI-referred traffic across devices, with Direct filling in where the referrer is missing

This keeps the conversation away from vague “AI traffic” and anchored in outcomes.

Is Perplexity AI sending me traffic?

Here’s the 6-step pass I run. It’s quick, repeatable, and executive-friendly.

- GA4 quick filter on Session source: Reports > Acquisition > Traffic acquisition. Filter Session source contains perplexity.ai or www.perplexity.ai. I save the comparison.

- Add Landing page and Event name columns: I add the Secondary dimension Landing page + query string. In the table settings, I add Event name if I want to spot events like form_submit or begin_checkout.

- Compare date ranges to spot growth: I use a 28-day vs previous 28-day comparison. I watch for step changes on days I shipped content or earned mentions. Sudden shifts often point to LLM referral analytics in action.

- Segment by device: I add Device category. Mobile spikes may indicate in-app browsers where referrers sometimes get lost.

- Check User acquisition for new users: Reports > Acquisition > User acquisition. Filter First user source contains perplexity.ai. Are these net-new users compared to my usual Direct and Organic mix?

- Export to CSV for leadership: I export the table and add a simple summary row for sessions, engaged sessions, conversions, and CVR. Then I drop it into my weekly report.
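The summary row from the export step can be computed with a few lines of Python. This is a sketch under assumptions: the column names (Sessions, Engaged sessions, Conversions) and the sample rows are hypothetical, any extra header or comment lines in the export are assumed to have been stripped, and CVR here means conversions divided by sessions.

```python
import csv
import io


def summarize(csv_text: str) -> dict:
    """Total the key columns and derive CVR as conversions / sessions."""
    totals = {"sessions": 0, "engaged_sessions": 0, "conversions": 0}
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Column names are assumptions; adjust them to match the real export.
        totals["sessions"] += int(row["Sessions"])
        totals["engaged_sessions"] += int(row["Engaged sessions"])
        totals["conversions"] += int(row["Conversions"])
    sessions = totals["sessions"]
    totals["cvr"] = totals["conversions"] / sessions if sessions else 0.0
    return totals


# Hypothetical export rows, for illustration only.
SAMPLE = """Landing page,Sessions,Engaged sessions,Conversions
/blog/guide,120,80,6
/pricing,40,30,4
"""

summary = summarize(SAMPLE)
print(summary)
```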

Bonus clue

If the curve looks spiky and mirrors the content calendar, I’m seeing early AI discovery patterns. That’s normal and useful. It means the site is being found by answer engines, not only web search.

How do I know if Perplexity is citing my content?

I use two dependable ways to confirm citations and build an internal paper trail.

Method 1. In-product proof

- I run the target query in Perplexity

- I expand Sources in the answer

- If my domain appears, I click through to validate

- I save the share link and a quick screenshot for my internal notes

Method 2. Analytics proof

- GA4 Explorations

- I create a Free form exploration

- I add Dimensions Session source, Page referrer, and Landing page + query string

- Page referrer must be a custom dimension mapped to the event parameter page_referrer

- I filter Page referrer contains https://www.perplexity.ai or https://perplexity.ai/search

- I can now see full referrer URLs that point to specific answer pages

- PostHog

- I build an insight where $referrer or $initial_referrer contains perplexity.ai

- I list Top pages to check which content Perplexity prefers

- Add an alert

- In GA4, I create a Custom insight that triggers when sessions with Session source contains perplexity.ai exceed a daily threshold

- In PostHog, I use alerts on trends for the same condition
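The referrer filters in Method 2 boil down to a hostname check plus a count per landing page. Here is a rough sketch of that logic for exported rows; the function names and sample data are hypothetical, and the check compares the parsed hostname rather than doing a plain substring match so look-alike domains are not counted.

```python
from collections import Counter
from urllib.parse import urlsplit


def is_perplexity(referrer: str) -> bool:
    """True when the referrer's host is perplexity.ai or one of its subdomains."""
    host = (urlsplit(referrer).hostname or "").lower()
    return host == "perplexity.ai" or host.endswith(".perplexity.ai")


def top_landing_pages(rows):
    """rows: iterable of (page_referrer, landing_page) pairs."""
    counts = Counter(landing for ref, landing in rows if is_perplexity(ref))
    return counts.most_common()


# Hypothetical exported rows, for illustration only.
rows = [
    ("https://www.perplexity.ai/search/abc", "/blog/guide"),
    ("https://perplexity.ai/search/def", "/blog/guide"),
    ("https://www.google.com/", "/pricing"),
    ("", "/blog/guide"),  # empty referrer, i.e. a Direct visit
]
print(top_landing_pages(rows))  # [('/blog/guide', 2)]
```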

These alerts teach me which content gets cited, so I can expand those clusters without guesswork. They also strengthen AI chatbot traffic attribution in monthly reports.

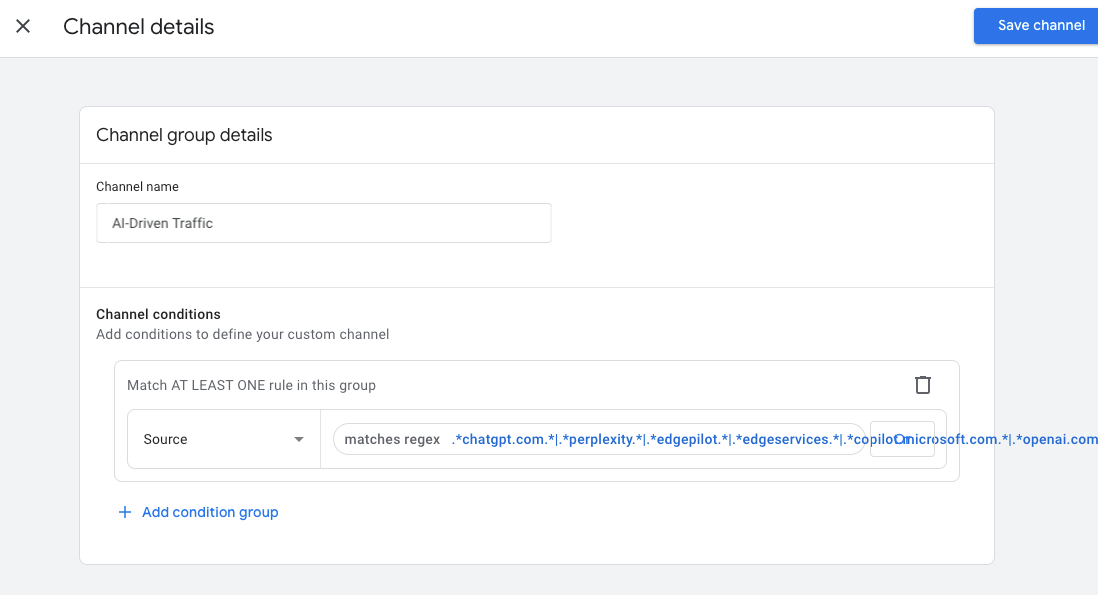

GA4 tracking for ChatGPT and Perplexity

When I want a clean data lane, I create an “AI Assistants” channel, pin the referrer details, and keep a reusable Exploration. This turns ad hoc checks into a working system.

Practical setup

- Create a Custom channel group

- GA4 path: Admin > Data settings (or Data display) > Channel groups

- Add a group named AI Assistants

- Rules: Source contains perplexity.ai OR chatgpt.com OR chat.openai.com OR claude.ai OR gemini.google.com OR poe.com

- Save. Data starts populating after the group is created

- Add a custom dimension for full referrers

- GA4 path: Admin > Data display (or Configure) > Custom definitions

- New custom dimension. Name Page referrer. Scope Event. Event parameter page_referrer

- This lets me see specific Perplexity search URLs in Explorations

- Build an Exploration for weekly reporting

- Explore > Free form

- Dimensions: Session source / medium, Landing page + query string, Page referrer

- Metrics: Engaged sessions, Conversions, User conversion rate, Average engagement time

- Filter on the custom channel group dimension equals AI Assistants (custom channels live in the custom group, not in the Default channel group)

- Create two audiences

- AI Assistants traffic: Condition Default channel group equals AI Assistants

- AI-sourced converted users: Start with the audience above, plus at least one conversion event like demo_submit or calendly_booked

- Label conversions that matter in B2B

- Common events include demo_submit, contact_form_submit, calendly_booked, pricing_view, and proposal_request

- Map them in Admin > Data display (or Configure) > Conversions

- Think about attribution

- GA4 uses data-driven attribution in standard reports, yet many execs still want last non-direct click

- I show both. For AI chatbot traffic attribution inside GA4, I compare AI Assistants in the Model comparison tool. I keep it simple and explain how referrers can be hidden on mobile, so numbers may be conservative
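To explain to execs why last click and last non-direct click disagree, I find a toy example helps. This sketch is my own simplification of the two rule-based views, not GA4's data-driven model; the channel labels and the journey are hypothetical.

```python
def last_click(touchpoints):
    """Credit the final touchpoint, whatever it is."""
    return touchpoints[-1] if touchpoints else None


def last_non_direct_click(touchpoints):
    """Credit the most recent touchpoint that is not Direct.

    Falls back to Direct only when every touchpoint was Direct.
    """
    for channel in reversed(touchpoints):
        if channel != "Direct":
            return channel
    return "Direct" if touchpoints else None


# Hypothetical journey: found via search, came back from an AI assistant,
# then converted on a later Direct visit.
journey = ["Organic Search", "AI Assistants", "Direct"]
print(last_click(journey))             # Direct
print(last_non_direct_click(journey))  # AI Assistants
```

The gap between the two outputs is the credit that AI assistants lose whenever the converting visit arrives without a referrer.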

This structure removes the recurring scramble each time someone asks for the source of a spike.

ChatGPT referral tracking

ChatGPT clicks reach me in two ways. Sometimes I get a clean referrer from chatgpt.com (or the older chat.openai.com). Sometimes links use rel="noreferrer" or open in an in-app browser, so the session lands as Direct. I expect both.

Workarounds for ChatGPT link-click analytics

- Branded shortlinks with assistant-specific slugs

- I use go.brand.com or bit.ly. I point a shortlink like go.brand.com/ai-chatgpt to the target URL with UTMs, for example:

- utm_source=chatgpt

- utm_medium=ai_assistant

- utm_campaign=llm_referrals

- This forces source recognition when the link is shared in a prompt, a social post, or an email

- Pre-tagged “Copy link” in my content

- I add Copy link or Share buttons on key pages that auto-append UTMs

- I use variants for Perplexity, ChatGPT, Claude, and Gemini. Labels stay short and consistent

- A practical proxy for dark traffic

- In GA4, I create an Explore segment that includes Direct sessions landing on a known set of AI-favored posts and excludes returning users who usually come via Organic

- In Looker Studio, I create a calculated field that groups those landings as Probable LLM clicks. If you want a ready-made dashboard, copy this link.

- I document the assumption, show it as a separate row, and revisit monthly to limit false positives
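The dark-traffic proxy is just a labeling rule, so it is easy to prototype before building it in GA4 or Looker Studio. The sketch below is one possible reading of that rule; the page list, field names, and Session type are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical set of posts that AI assistants tend to cite.
AI_FAVORED_PAGES = {"/blog/perplexity-guide", "/docs/getting-started"}


@dataclass
class Session:
    channel: str                     # e.g. "Direct", "Organic Search"
    landing_page: str
    is_returning_organic_user: bool  # user who usually arrives via Organic


def label(session: Session) -> str:
    """Relabel Direct sessions on AI-favored pages as probable LLM clicks."""
    if (
        session.channel == "Direct"
        and session.landing_page in AI_FAVORED_PAGES
        and not session.is_returning_organic_user
    ):
        return "Probable LLM clicks"
    return session.channel


print(label(Session("Direct", "/blog/perplexity-guide", False)))  # Probable LLM clicks
print(label(Session("Direct", "/blog/perplexity-guide", True)))   # Direct
```

Because this is a heuristic, the relabeled sessions belong in their own row rather than being merged into the referrer-confirmed numbers.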

I won’t catch every click, yet I’ll be far closer to the truth. That’s the goal.

Track referrals from AI assistants

GA4 is great for high-level reporting. PostHog shines when I want to understand flow and quality. I use them together.

PostHog setup that takes minutes

- Cohort

- Name: AI Assistants

- Rule: $referrer or $initial_referrer matches regex (perplexity\.ai|chatgpt\.com|chat\.openai\.com|claude\.ai|gemini\.google\.com|poe\.com), with the dots escaped so they match a literal dot only

- Trends

- I break down by $current_url to find top landing pages for the cohort

- I compare engagement with my Organic Search cohort for context

- Funnels

- Example: Landing page viewed → demo form submit → thank-you page

- I filter by the AI Assistants cohort to see drop-offs and true intent

- Paths

- I start from landing pages popular with AI. Do users scroll to pricing, jump to docs, or book a call?

- Session replay sampling

- I sample this cohort at a higher rate for two weeks and tag patterns I love and friction that hurts

- I make sure user consent and privacy settings are in place before enabling replays
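To sanity-check a funnel before trusting a dashboard number, I can recount it from raw events. This is a simplified sketch of an ordered funnel, not PostHog's implementation (it ignores conversion windows, for instance); the event names and users are hypothetical.

```python
# Hypothetical event names for the example funnel above.
FUNNEL = ["landing_page_viewed", "demo_form_submit", "thank_you_page_viewed"]


def steps_reached(events, funnel=FUNNEL):
    """Count how many funnel steps a user completed, in order."""
    step = 0
    for event in events:
        if step < len(funnel) and event == funnel[step]:
            step += 1
    return step


def funnel_counts(users, funnel=FUNNEL):
    """users: dict of user id -> time-ordered list of event names."""
    counts = [0] * len(funnel)
    for events in users.values():
        for i in range(steps_reached(events, funnel)):
            counts[i] += 1
    return counts


# Hypothetical AI Assistants cohort.
cohort = {
    "u1": ["landing_page_viewed", "demo_form_submit", "thank_you_page_viewed"],
    "u2": ["landing_page_viewed", "pricing_view"],
    "u3": ["landing_page_viewed", "demo_form_submit"],
    "u4": ["demo_form_submit"],  # never hit the landing step, so not counted
}
print(funnel_counts(cohort))  # [3, 2, 1]
```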

Optional tools for more coverage

- Plausible or Matomo referrer reports if I want a second opinion

- Server or CDN logs to capture the Referer header with high fidelity

- For AI-generated traffic attribution, a log-based check can confirm whether Direct in GA4 was actually a masked in-app visit
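For the log-based check, a short script can pull the Referer header out of access logs and count Perplexity hits per path. This sketch assumes the common "combined" log format used by default in many web servers; the regex, function name, and sample lines are my own, and real logs may need a different pattern.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Matches the request, status, size, referer, and user-agent fields of a
# combined-format log line (an assumption about the log layout).
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)


def perplexity_hits(lines):
    """Count requests per path whose Referer host is perplexity.ai or a subdomain."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue  # skip lines that do not fit the assumed format
        host = (urlsplit(match.group("referer")).hostname or "").lower()
        if host == "perplexity.ai" or host.endswith(".perplexity.ai"):
            hits[match.group("path")] += 1
    return hits


# Hypothetical log lines, for illustration only.
sample_lines = [
    '203.0.113.5 - - [10/Mar/2025:10:00:00 +0000] "GET /blog/guide HTTP/1.1" 200 5120 "https://www.perplexity.ai/search/abc" "Mozilla/5.0"',
    '203.0.113.6 - - [10/Mar/2025:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
    '203.0.113.7 - - [10/Mar/2025:10:02:00 +0000] "GET /blog/guide HTTP/1.1" 200 5120 "https://perplexity.ai/search/def" "Mozilla/5.0"',
]
hits = perplexity_hits(sample_lines)
print(hits.most_common())  # [('/blog/guide', 2)]
```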

A short digression. Quality matters more than volume. If AI cohorts scroll deeper, re-read, and click CTAs at higher rates than average, I have a source that deserves content and outreach focus. If they bounce, I fix the landing experience before chasing more clicks.

Perplexity AI traffic sources

Perplexity can show up under a few source patterns. I normalize them now so tracking stays tidy.

Include these in GA4 and PostHog

- perplexity.ai

- www.perplexity.ai

- labs.perplexity.ai

- Share links that start with https://www.perplexity.ai/search

- Early copilot or preview paths if I see them in logs. I save examples in the tracking doc

Regex I can paste

- Matches regex in GA4 or PostHog: perplexity\.ai|www\.perplexity\.ai|labs\.perplexity\.ai
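Before pasting a pattern into a tool, I like to test it locally. The sketch below uses Python's re module as a stand-in; whether a given tool applies full or partial matching is something to confirm in that tool, so both behaviors are shown. The sample strings are hypothetical.

```python
import re

PATTERN = re.compile(r"perplexity\.ai|www\.perplexity\.ai|labs\.perplexity\.ai")
UNESCAPED = re.compile(r"perplexity.ai")  # unescaped dot matches any character


def matches_full(value: str) -> bool:
    """Whole string must match (suits a bare source value like www.perplexity.ai)."""
    return PATTERN.fullmatch(value) is not None


def matches_partial(value: str) -> bool:
    """Pattern may appear anywhere (suits full referrer URLs)."""
    return PATTERN.search(value) is not None


print(matches_full("labs.perplexity.ai"))                       # True
print(matches_full("https://www.perplexity.ai/search/abc"))     # False
print(matches_partial("https://www.perplexity.ai/search/abc"))  # True
print(UNESCAPED.fullmatch("perplexityxai") is not None)         # True, a false positive
print(matches_full("perplexityxai"))                            # False
```

The last two lines show why the escaped dots matter: without the backslash, the pattern also accepts strings that only resemble the domain.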

Notes

- Mobile in-app browser clicks may show as Direct. I keep a Direct heuristic for known AI-favorite landing pages

- I audit referrers monthly. Perplexity ships updates, and new subdomains appear

- If I report on LLM referral analytics across tools, I keep a living allowlist. It saves hours during quarterly reviews

This looks fussy at first, yet once it’s in place, the data flows in clean lines.

UTM tagging for AI chatbot referrals

I can’t tag links I don’t control, like a random Perplexity citation. I can tag the links I share. When I standardize this, measurement gets easier and channel grouping stays clean.

A simple UTM taxonomy for B2B teams

- utm_source: perplexity, chatgpt, claude, gemini

- utm_medium: ai_assistant

- utm_campaign: llm_referrals

- utm_content: citation, share, snippet, outreach, community, doc
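A small helper keeps the taxonomy consistent when several people build links. This is a minimal sketch using only the standard library; the function name and the example URL are hypothetical, and the allowed values simply mirror the taxonomy above.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

ALLOWED_SOURCES = {"perplexity", "chatgpt", "claude", "gemini"}
ALLOWED_CONTENT = {"citation", "share", "snippet", "outreach", "community", "doc"}


def tag_url(url: str, source: str, content: str) -> str:
    """Append the standard AI-assistant UTMs, replacing any existing utm_* values."""
    if source not in ALLOWED_SOURCES:
        raise ValueError(f"unknown utm_source: {source}")
    if content not in ALLOWED_CONTENT:
        raise ValueError(f"unknown utm_content: {content}")
    parts = urlsplit(url)
    # Keep non-UTM parameters, drop old utm_* ones so tags are not duplicated.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith("utm_")
    ]
    query += [
        ("utm_source", source),
        ("utm_medium", "ai_assistant"),
        ("utm_campaign", "llm_referrals"),
        ("utm_content", content),
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))


print(tag_url("https://example.com/guide?ref=1#top", "perplexity", "share"))
```

Rejecting values outside the taxonomy is a deliberate choice: a typo like "perplexty" would otherwise create a stray source that falls out of the AI Assistants channel.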

Where I deploy these UTMs

- Shortlinks for outreach and social posts; assistant-specific slugs like go.brand.com/perplexity-guide

- Copy snippet buttons on pillar pages; I pre-tag the URL so any shared link retains the source

- PR and media kits that include tagged links to the best explainers

- Partner enablement docs for communities where AI assistants pick up and share resources

Bring this into GA4

- I map AI Assistants in my Custom channel group so any of the above sources with medium ai_assistant roll into one channel

- I keep an Exceptions note for analysts: if a source is tagged but the referrer says something else, honor the UTM
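The "honor the UTM" rule can be written down as a tiny resolver so analysts apply it the same way every time. This is a sketch of my own precedence rule, not how GA4 assigns sources internally; the function name, the "(direct)" label, and the sample URLs are assumptions.

```python
from urllib.parse import parse_qs, urlsplit


def resolve_source(landing_url: str, referrer: str) -> str:
    """Pick a source: an explicit utm_source wins, then the referrer host, then direct."""
    params = parse_qs(urlsplit(landing_url).query)
    utm_source = params.get("utm_source")
    if utm_source:
        return utm_source[0].lower()
    host = (urlsplit(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[len("www."):]  # normalize www.perplexity.ai to perplexity.ai
    return host or "(direct)"


# Tagged link shared in ChatGPT but opened from a Perplexity answer page.
print(resolve_source("https://example.com/?utm_source=chatgpt",
                     "https://www.perplexity.ai/search/x"))  # chatgpt
print(resolve_source("https://example.com/",
                     "https://www.perplexity.ai/search/x"))  # perplexity.ai
print(resolve_source("https://example.com/", ""))            # (direct)
```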

Known limits and how I work around them

- I can’t force UTMs on third-party citations. I rely on referrer-based tracking plus my Direct heuristic on AI-heavy pages

- People paste untagged links. I rely on my Custom channel group and the Page referrer dimension to catch most of it

- For AI chatbot traffic attribution across reports, I keep both views: one table for referrer-derived traffic, one table for UTM-tagged shares. I reconcile totals monthly, note the gap, and move on

A brief note on messaging and UX. If AI assistants send curious readers, I meet them with context. I open with a crisp answer. I offer a next step that doesn’t ask for too much, too soon. If the product has a trial, I consider a short self-guided tour. If I sell higher-ticket services, a simple “Book a call” next to a few proof points will beat a wall of copy.

Here’s the playbook I use: I check the referrers fast. I size impact with a short KPI checklist. I build one GA4 Exploration and one PostHog view. I normalize source patterns. I standardize UTMs for the links I control. I repeat weekly. As Perplexity, ChatGPT, and friends grow, the pipeline reporting stays clear, boring, and reliable - which is exactly what an exec team wants.
