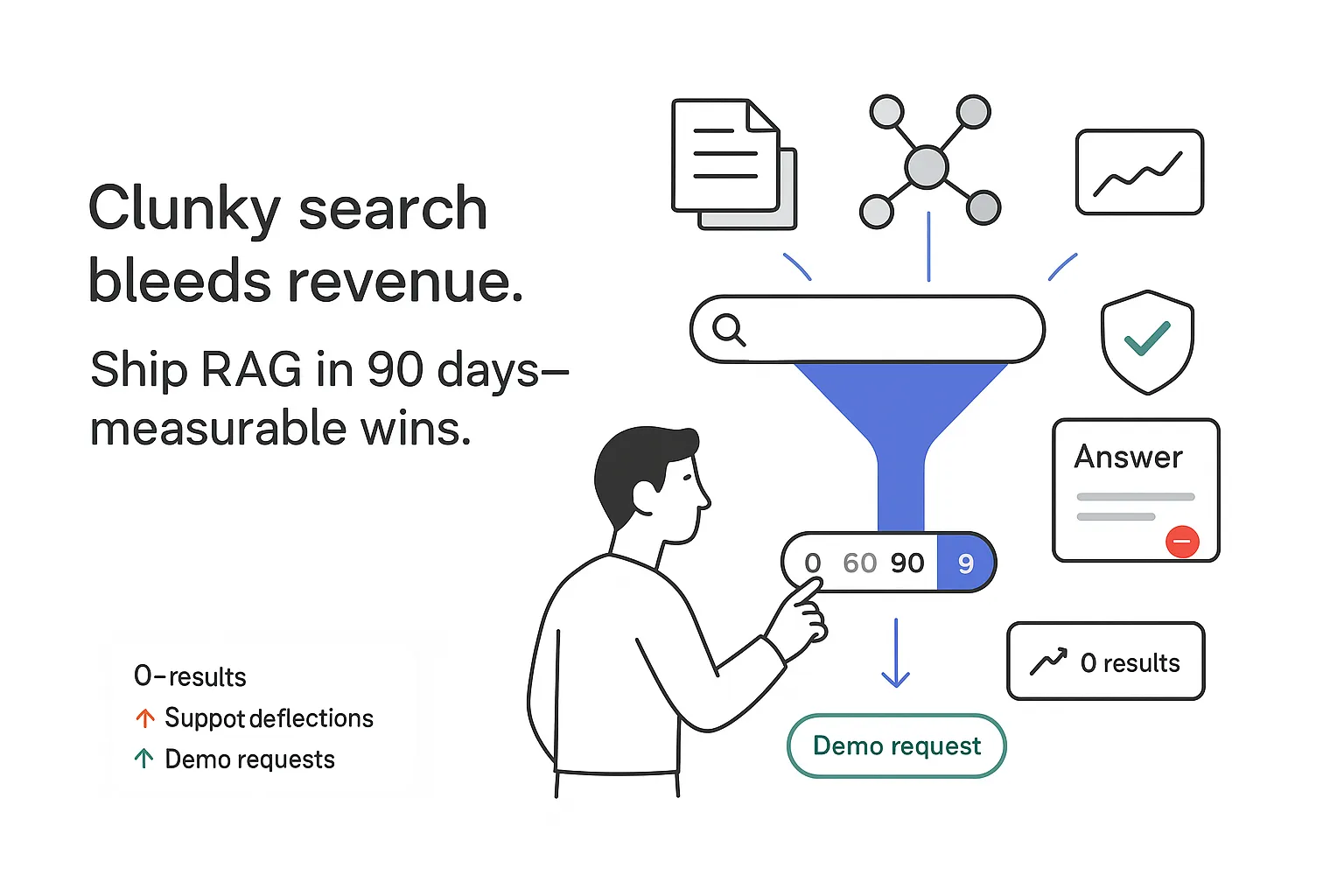

If your site search feels like a black box, you are not alone. I see powerful B2B service content get buried behind clunky search, confusing filters, and vague answers. That is a shame, because site search can pull its weight on the P&L when it is instrumented and governed. Faster time to answer reduces support tickets. Better relevance nudges more qualified form fills. Clear answers keep visitors engaged instead of bouncing to a competitor. The good news is simple: with a focused upgrade to retrieval-augmented generation (RAG) for website search, I can turn search into a measurable growth lever without tearing out the existing stack.

Site search optimization strategies with retrieval augmented generation for website search

I start with outcomes. CEOs care about numbers, so I anchor goals to metrics:

- Faster answers in search results cut support load through self-service. Expect a drop in ticket volume and agent handle time when answers are grounded and easy to skim. The magnitude depends on baseline content quality and routing.

- Stronger relevance increases lead form conversions. I look for lifts in demo requests or consultation bookings from search-heavy sessions once intent coverage improves and answers cite high-authority sources.

- Cleaner navigation reduces bounces. With clearer snippets and better ranking, I expect higher time on page, more internal clicks, and deeper content consumption.

A practical 30/60/90-day plan

- Days 1 to 30: I mine query logs to identify the top 50 intents that drive value. I map each intent to the best pages already live, including service pages, PDFs, playbooks, and case studies. I fix obvious synonym gaps and misspellings. I set baselines such as zero-result rate, click-through rate, Success@1, and search-assisted conversion rate so I can measure lift rather than guess.

- Days 31 to 60: I bring RAG to the high-value intents. For those priority queries, I retrieve the right passages, then synthesize short answers with citations. I keep the original ranked results visible below. I run A/B tests against the baseline to quantify impact.

- Days 61 to 90: I expand coverage across more intents, tune re-ranking, and tackle long-tail queries. I add guardrails, confidentiality checks, and throttling. I document what worked, what did not, and where the next wins sit.
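
Here is a minimal Python sketch that turns the day-30 baselines into something concrete. It computes zero-result rate, click-through rate (the share of searches with at least one click), and search-assisted conversion rate from a search log. The `SearchEvent` record and its fields are hypothetical, so map them to whatever your analytics export provides. Success@1 is left out because it needs human-labeled relevant results rather than raw logs.

```python
from dataclasses import dataclass


@dataclass
class SearchEvent:
    # Hypothetical log record; adapt the fields to your analytics export.
    session_id: str
    query: str
    result_count: int
    clicked: bool


def baseline_metrics(events, converted_sessions):
    """Zero-result rate, click-through rate, and search-assisted conversion rate."""
    if not events:
        return {"zero_result_rate": 0.0, "ctr": 0.0, "search_assisted_cvr": 0.0}
    total = len(events)
    zero_results = sum(1 for e in events if e.result_count == 0)
    clicks = sum(1 for e in events if e.clicked)  # searches with at least one click
    search_sessions = {e.session_id for e in events}
    converted = search_sessions & set(converted_sessions)
    return {
        "zero_result_rate": zero_results / total,
        "ctr": clicks / total,
        "search_assisted_cvr": len(converted) / len(search_sessions),
    }
```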

Quick wins I prioritize

- Synonym mapping for industry terms, acronyms, and branded language. Tie SOC 2 to System and Organization Controls (the AICPA's current name, formerly Service Organization Control). Tie MDR to managed detection and response. Map regional spellings and common typos.

- Zero-results rescue. If recall fails, I fall back to a looser semantic match, then a sitewide keyword search, then a helpful message with next steps.

- No-answer fallbacks. If the system is not confident, I show the three best pages, a crisp FAQ snippet, and a clear path to contact or chat. It is better to admit uncertainty than to guess.
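
The synonym map and the zero-results cascade fit in a few lines of Python. This is an illustration that rests on some assumptions. `SYNONYMS` holds example entries only. `strict`, `semantic`, and `keyword` are hypothetical callables that stand in for your exact-match search, a looser semantic match, and a sitewide keyword search. When every stage comes back empty, the caller renders the no-answer block with next steps.

```python
import re

# Illustrative entries only; build the real map from query logs and internal glossaries.
SYNONYMS = {
    "soc 2": ["soc2", "system and organization controls", "service organization control"],
    "mdr": ["managed detection and response"],
    "optimise": ["optimize"],
}


def expand_query(query):
    """Return the normalized query first, then one variant per matching synonym."""
    q = " ".join(query.lower().split())
    variants = [q]
    for term, alternatives in SYNONYMS.items():
        pattern = rf"\b{re.escape(term)}\b"  # whole-word match only
        if re.search(pattern, q):
            variants.extend(re.sub(pattern, alt, q) for alt in alternatives)
    return variants


def search_with_fallbacks(query, strict, semantic, keyword):
    """Try each retriever from strictest to loosest; return (stage, results)."""
    for stage, retriever in (("strict", strict), ("semantic", semantic), ("keyword", keyword)):
        for variant in expand_query(query):
            results = retriever(variant)
            if results:
                return stage, results
    return "no_results", []  # caller shows the helpful next-steps message
```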

Grounding is the rule, not a nice-to-have

Hallucinations are expensive. The fix is grounding LLM answers with site content. I retrieve the right chunks from the site, cite them, and keep generation inside those boundaries. This reduces risk, keeps answers current, and builds trust.
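
Grounding starts with the prompt. The sketch below assumes that retrieved chunks arrive as dicts with hypothetical `url`, `heading`, and `text` keys. It numbers each passage, tells the model to cite those numbers, and gives the model an explicit way to decline. The model call itself is omitted because it depends on the provider.

```python
def build_grounded_prompt(question, chunks, max_chunks=5):
    """Assemble a prompt that limits the model to numbered, citable source passages."""
    sources = [
        f"[{i}] {chunk['heading']} ({chunk['url']})\n{chunk['text']}"
        for i, chunk in enumerate(chunks[:max_chunks], start=1)
    ]
    instructions = (
        "Answer the question using only the numbered sources below. "
        "Cite each claim with its source number, for example [1]. "
        "If the sources do not contain the answer, reply exactly: NO_ANSWER"
    )
    return (
        f"{instructions}\n\nSources:\n" + "\n\n".join(sources)
        + f"\n\nQuestion: {question}\nAnswer:"
    )
```

Capping `max_chunks` keeps the context short. That helps control cost, and it also limits how much off-topic text the model can drift into.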

A note on differentiation

Many vendor pages talk theory. I have found that showing measurable wins and a phased rollout stands out. If the first 90 days produce lower zero-results, higher click-through, and a lift in search-assisted conversions, buy-in for the next wave follows naturally.

What is retrieval augmented generation for website search?

My one-line definition for site search: retrieve the right passages from your pages, ground the LLM with citations, and generate a safe, concise answer directly in the results. For background, see Retrieval-Augmented Generation (RAG).

RAG does not replace traditional search; it complements it. I still rank documents, but I also synthesize a short answer for the user, linked to sources. The result feels like an expert assistant that knows the site.

Core components to get right

- Content index: a clean, deduplicated catalog of pages, PDFs, and help articles.

- Embeddings: vector representations of chunks that capture meaning.

- Retriever: fast recall against vectors, with filters for service line, industry, region, or persona.

- Re-ranker: a precise scorer that reorders candidates based on the query.

- Generator: the LLM that writes the answer and cites sources.

- Guardrails: safety filters, policy enforcement, and no-answer detection.

- Analytics: offline labels and online telemetry to tune relevance, faithfulness, and speed.

B2B specifics worth calling out

- Gated PDFs are common. I support authenticated recall and permission checks so private content never leaks.

- Service pages and case studies carry the pitch. I treat them as high-authority sources in re-ranking.

- Compliance content changes quickly. I add freshness signals so new policies and dates outrank older ones.

- Prerequisite: RAG cannot fix thin or outdated content. If coverage is weak, I address content gaps first.
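
Permission checks belong before generation, so gated text never reaches the prompt. This sketch assumes each chunk carries a hypothetical `allowed_groups` set, with `"public"` marking ungated content. In production, the same constraint is better pushed into the vector store query as a metadata filter, so restricted chunks are never recalled at all.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    url: str
    text: str
    # Groups allowed to read this chunk; "public" marks ungated content (hypothetical scheme).
    allowed_groups: frozenset = field(default_factory=lambda: frozenset({"public"}))


def authorized(chunks, user_groups):
    """Keep only chunks the current user may see; anonymous visitors get public content only."""
    groups = set(user_groups) | {"public"}
    return [c for c in chunks if c.allowed_groups & groups]
```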

Quick contrast with retail-style semantic search

Product search leans on attributes like brand, size, and availability. B2B content search leans on long-form thought leadership, policy language, and technical service detail. That shift puts more pressure on chunking, re-ranking, and grounding.

How it works: vector search implementation for site search

The request flow, tuned for websites

- The user query arrives. I keep the original version and run a light rewrite if needed: spelling fixes and careful expansion of acronyms, not wild paraphrases.

- I create a query embedding with a sentence embedding model that has been tested on this type of content.

- Vector recall runs first, filtered by metadata such as service, industry, region, and language. I return the top K chunks.

- Lexical recall runs in parallel using BM25 against titles, headings, and body text to capture rare terms and exact matches.

- I merge and re-rank candidates with a cross-encoder that scores query-text pairs precisely.

- I synthesize a short answer with clear citations, limiting claims to content from the selected chunks and inserting links.

- I apply safety checks and policy filters. If confidence is low or policy is triggered, I show a no-answer block with the best documents below.

- I cache the result so similar queries or the same user session feel instant.
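
The request flow above can be wired together as a small orchestration function. This is a sketch, not a reference implementation. `retrieve`, `rerank`, and `generate` are hypothetical callables that you would back with your hybrid retriever, cross-encoder, and LLM. The plain dict cache stands in for a TTL or LRU cache.

```python
from typing import Callable


def make_search(retrieve: Callable, rerank: Callable, generate: Callable,
                min_score: float = 0.5, top_n: int = 3):
    """Wire retrieval, re-ranking, generation, a no-answer gate, and a cache."""
    cache = {}

    def search(query: str) -> dict:
        key = " ".join(query.lower().split())  # normalize case and whitespace for the cache key
        if key in cache:
            return cache[key]
        candidates = retrieve(query)
        ranked = rerank(query, candidates)  # list of (doc, score), best first
        top_docs = [doc for doc, _ in ranked[:top_n]]
        if not ranked or ranked[0][1] < min_score:
            result = {"answer": None, "documents": top_docs}  # no-answer block
        else:
            result = {"answer": generate(query, top_docs), "documents": top_docs}
        cache[key] = result
        return result

    return search
```

One design caution applies if results depend on login state. In that case, include the user's permission scope in the cache key, so a cached answer built from gated content is never served to an anonymous visitor.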

Key tech choices and targets

- Embedding model: I pick a multilingual or English-focused model that handles technical language and evaluate it on an offline set before rollout.

- Vector store: I choose a low-latency index with HNSW or IVF and filter support, aiming to keep retrieval latency within a 500 to 800 ms budget at peak.

- Re-ranker: a cross-encoder or hosted rerank API that boosts precision at the top of the list.

- Generator: an LLM that writes concisely and respects citations. I keep context short and focused to control cost.

- Authorization handling: I respect robots.txt rules, honor login state, and block recall of gated assets when needed.

- Streaming generation is helpful: the first tokens appear quickly while the rest completes in the background.
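
To show what filtered vector recall does, here is an exact (brute-force) cosine search in plain Python. It is a stand-in that rests on stated assumptions. `index` is a hypothetical list of `(chunk_id, vector, metadata)` tuples, and the toy vectors replace real embeddings. A production store would answer the same query with an approximate HNSW or IVF index to stay inside the latency budget.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def vector_recall(query_vec, index, filters, k=5):
    """Exact top-k cosine search over chunks whose metadata matches every filter."""
    matches = [
        (cosine(query_vec, vec), chunk_id)
        for chunk_id, vec, meta in index
        if all(meta.get(key) == value for key, value in filters.items())
    ]
    matches.sort(key=lambda m: m[0], reverse=True)
    return [chunk_id for _, chunk_id in matches[:k]]
```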

Ops tips that keep things smooth

- I warm caches on common queries and precompute embeddings for hot pages.

- I run background embedding jobs nightly and process daily deltas from the CMS.

- I store a small history per user or session so reformulations run faster and feel smarter.

Content indexing and chunking for RAG

Clean data is the quiet unlock. A tidy index beats a noisy one every time.

I ingest sources from the CMS, PDFs, knowledge base, and help center. I normalize HTML by stripping navigation, footers, and cookie banners, and I keep headings and anchor links. I canonicalize URLs and dedupe near duplicates so the same paragraph does not get indexed five times.
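
URL canonicalization and near-duplicate removal can be sketched with the standard library. The tracking-parameter list, the five-word shingle size, and the 0.9 Jaccard threshold are assumptions to tune on your own crawl. The sketch also assumes HTML boilerplate stripping has already happened upstream.

```python
import re
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content", "gclid"}


def canonicalize(url):
    """Lowercase scheme and host, drop fragments and tracking params, trim trailing slash."""
    parts = urlsplit(url)
    query = urlencode(sorted((k, v) for k, v in parse_qsl(parts.query) if k not in TRACKING_PARAMS))
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, query, ""))


def shingles(text, n=5):
    """Set of n-word shingles; short texts yield a single shingle of all their words."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(max(len(words) - n + 1, 1))}


def dedupe(pages, threshold=0.9):
    """Keep one canonical URL per page, dropping pages whose Jaccard similarity to a kept page reaches threshold."""
    kept = {}
    for url, text in pages:
        url = canonicalize(url)
        if url in kept:
            continue
        sig = shingles(text)
        if all(len(sig & s) / len(sig | s) < threshold for s in kept.values()):
            kept[url] = sig
    return list(kept)
```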

I chunk by meaning, not strict length. A common sweet spot is 200 to 400 tokens with 10 to 20 percent overlap. I keep the section header in metadata so I can show it in citations. I tag content with schema such as service type, industry, geo, persona, publish date, and last updated. Those tags power filtered retrieval and let me prioritize fresh or authoritative chunks.
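
Here is a sketch of heading-aware chunking with overlap. It assumes the page has already been split into `(heading, text)` sections. It approximates tokens with whitespace-separated words and defaults to 300 tokens with 15 percent overlap, which falls inside the range above. Windows never cross a section boundary, and each chunk keeps its heading for citations.

```python
def chunk_sections(sections, max_tokens=300, overlap=0.15):
    """Split (heading, text) sections into overlapping windows that never cross a heading.

    Tokens are approximated by whitespace-separated words; use your embedding
    model's tokenizer for accurate counts.
    """
    step = max(1, round(max_tokens * (1 - overlap)))
    chunks = []
    for heading, text in sections:
        words = text.split()
        start = 0
        while start < len(words):
            window = words[start:start + max_tokens]
            chunks.append({"heading": heading, "text": " ".join(window)})
            if start + max_tokens >= len(words):
                break  # this window already reaches the end of the section
            start += step
    return chunks
```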

Update strategy

- Webhooks on publish push new pages into a priority queue.

- I recalculate embeddings only for changed chunks to save time and cost.

- I flag hot content like incident notes or pricing pages so they reindex first.
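
Recomputing embeddings only for changed chunks comes down to comparing content hashes. This minimal sketch assumes you store a SHA-256 hash per chunk at embedding time. `plan_reembedding` and its arguments are hypothetical names. Chunks flagged as hot move to the front of the queue.

```python
import hashlib


def content_hash(text):
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_reembedding(chunks, stored_hashes, hot=frozenset()):
    """Return (chunk ids to embed, chunk ids to delete), with hot chunks first.

    chunks maps chunk_id -> current text; stored_hashes maps chunk_id -> hash
    recorded at the last embedding run.
    """
    to_embed = [cid for cid, text in chunks.items() if stored_hashes.get(cid) != content_hash(text)]
    to_embed.sort(key=lambda cid: cid not in hot)  # stable sort: hot ids move to the front
    to_delete = [cid for cid in stored_hashes if cid not in chunks]
    return to_embed, to_delete
```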

For retail catalogs you might index attribute vectors. For a B2B service site, narrative chunks that keep context, diagrams, and policy language connected tend to perform better.

Hybrid search: BM25 plus vectors

Why hybrid search works

Vectors catch intent and meaning. BM25 catches rare acronyms and exact matches. You need both, or you will miss one set of queries.

Fusion options that are easy to tune

- Reciprocal Rank Fusion (RRF), which blends the ranked lists from both retrievers.

- Weighted score blending with separate weights for vector and BM25 scores.

- Staged recall where BM25 runs first for exact hits and vectors expand the candidate set for re-ranking.
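
The first two fusion options each fit in a few lines. Reciprocal Rank Fusion scores each document as the sum of 1 / (k + rank) across lists, with k = 60 as the commonly used default. The weighted blend here min-max normalizes each retriever's scores first, which is one of several reasonable normalization choices. The 0.6/0.4 weights are placeholders to tune.

```python
def reciprocal_rank_fusion(ranked_lists, k=60):
    """Fuse ranked lists of doc ids: score(d) = sum over lists of 1 / (k + rank)."""
    scores = {}
    for ranking in ranked_lists:
        for rank, doc in enumerate(ranking, start=1):
            scores[doc] = scores.get(doc, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


def weighted_blend(vector_scores, bm25_scores, w_vector=0.6, w_bm25=0.4):
    """Min-max normalize each retriever's scores, then blend with fixed weights."""
    def normalize(scores):
        if not scores:
            return {}
        lo, hi = min(scores.values()), max(scores.values())
        return {d: (s - lo) / (hi - lo) if hi > lo else 1.0 for d, s in scores.items()}

    v, b = normalize(vector_scores), normalize(bm25_scores)
    blended = {d: w_vector * v.get(d, 0.0) + w_bm25 * b.get(d, 0.0) for d in v.keys() | b.keys()}
    return sorted(blended, key=blended.get, reverse=True)
```

RRF only looks at ranks, so it needs no score calibration between retrievers. Weighted blending keeps score magnitudes, but it is more sensitive to the normalization you pick.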

I add typo tolerance, stemming, and synonym dictionaries to make recall forgiving. I expose tunable knobs such as K for each retriever, per-field boosts for titles or H2s, and separate weights for body text and metadata.
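
To show how per-field boosts interact with BM25, here is a small fielded scorer. It is a simplification. It computes BM25 (k1 = 1.2, b = 0.75, Lucene-style IDF) separately per field and sums the results with boosts, rather than implementing full BM25F. It also omits typo tolerance and stemming. In practice, Elasticsearch or OpenSearch handles this with per-field boosts in the query.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"\w+", text.lower())


class FieldedBM25:
    """BM25 per field, summed with per-field boosts (a simple stand-in for BM25F)."""

    def __init__(self, docs, boosts, k1=1.2, b=0.75):
        # docs: list of dicts mapping field name -> text; boosts: field name -> weight
        self.boosts, self.k1, self.b = boosts, k1, b
        self.n = len(docs)
        self.tf = [{f: Counter(tokenize(d.get(f, ""))) for f in boosts} for d in docs]
        self.avgdl = {f: (sum(sum(t[f].values()) for t in self.tf) / max(self.n, 1)) or 1.0
                      for f in boosts}
        # Document frequency: iterating a Counter yields each distinct term once.
        self.df = {f: Counter(term for t in self.tf for term in t[f]) for f in boosts}

    def score(self, query, i):
        total = 0.0
        for f, boost in self.boosts.items():
            tf, dl = self.tf[i][f], sum(self.tf[i][f].values())
            for term in tokenize(query):
                if term not in tf:
                    continue
                df = self.df[f][term]
                idf = math.log((self.n - df + 0.5) / (df + 0.5) + 1)
                norm = tf[term] * (self.k1 + 1) / (
                    tf[term] + self.k1 * (1 - self.b + self.b * dl / self.avgdl[f]))
                total += boost * idf * norm
        return total

    def search(self, query, k=10):
        scored = [(self.score(query, i), i) for i in range(self.n)]
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
        return [i for _, i in ranked[:k]]
```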

Query understanding and re-ranking in site search

Start by classifying the intent

- Navigational queries aim for a specific page such as Pricing or a SOC 2 guide.

- Informational queries ask for concepts or methods such as MDR vs MSSP.

- Transactional queries seek an action such as booking a consultation.

I detect entities like industry, compliance framework, or region. I expand acronyms carefully. I use LLM rewrites sparingly and keep the original query as a candidate to avoid drift.
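
Here is a rule-based sketch of intent detection and careful acronym expansion. The keyword lists and the `ACRONYMS` map are illustrative assumptions. Once you have labeled queries, a trained classifier would replace `classify_intent`. The original query always stays first in the candidate list, which guards against drift.

```python
import re

# Illustrative lists; derive real ones from labeled query logs.
ACRONYMS = {"mdr": "managed detection and response", "mssp": "managed security service provider"}
NAVIGATIONAL = {"pricing", "contact", "careers", "login"}
TRANSACTIONAL = {"book", "schedule", "request", "demo", "quote"}


def classify_intent(query):
    words = re.findall(r"\w+", query.lower())
    if any(w in TRANSACTIONAL for w in words):
        return "transactional"
    if len(words) <= 3 and any(w in NAVIGATIONAL for w in words):
        return "navigational"
    return "informational"


def query_candidates(query):
    """Keep the original query first, then add one variant with known acronyms expanded."""
    expanded = re.sub(r"\w+", lambda m: ACRONYMS.get(m.group(0).lower(), m.group(0)), query)
    return [query] if expanded == query else [query, expanded]
```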

Re-rank for precision at the top

I use a cross-encoder or a hosted rerank API to score candidates. I add business rules that boost case studies and updated service pages and downweight content older than a set threshold. If the system cannot answer with confidence, I trigger no-answer behavior and show the top documents with a short explanation and a clear contact path.
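
Business rules and the no-answer gate can sit on top of whatever re-ranker you use. This sketch assumes re-ranker scores are already normalized to the 0 to 1 range, because multiplicative boosts misbehave on raw negative logits. The candidate dict keys, boost values, two-year staleness window, and 0.6 confidence threshold are placeholders rather than recommendations.

```python
from datetime import date


def apply_business_rules(candidates, today: date, boosts=None,
                         stale_after_days=730, stale_penalty=0.5):
    """Adjust 0-1 re-ranker scores with content-type boosts and a staleness penalty.

    Each candidate is a dict with hypothetical keys "url", "score",
    "content_type", and "updated" (a date).
    """
    boosts = boosts or {"case_study": 1.2, "service_page": 1.15}
    adjusted = []
    for c in candidates:
        score = c["score"] * boosts.get(c["content_type"], 1.0)
        if (today - c["updated"]).days > stale_after_days:
            score *= stale_penalty  # downweight content older than the threshold
        adjusted.append({**c, "score": score})
    return sorted(adjusted, key=lambda c: c["score"], reverse=True)


def answer_or_fallback(ranked, threshold=0.6, top_n=3):
    """Generate only when the best adjusted score clears the confidence threshold."""
    docs = [c["url"] for c in ranked[:top_n]]
    if ranked and ranked[0]["score"] >= threshold:
        return {"mode": "answer", "sources": docs}
    return {"mode": "no_answer", "documents": docs}
```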

Site search evaluation and relevance tuning

I treat search like a product and run it with a scoreboard. I build an offline evaluation set from top site queries with human-labeled relevant URLs. I track NDCG@10, Success@1, Recall@k, and MRR so I can compare changes safely.
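
The four offline metrics are short enough to implement directly. This sketch assumes binary relevance labels stored as `query -> set of relevant URLs` and system runs stored as `query -> ranked URLs`. Graded relevance would change only the gain term in NDCG.

```python
import math


def ndcg_at_k(ranked, relevant, k=10):
    """Binary-relevance NDCG@k: DCG of the ranking divided by the ideal DCG."""
    dcg = sum(1 / math.log2(i + 2) for i, doc in enumerate(ranked[:k]) if doc in relevant)
    ideal = sum(1 / math.log2(i + 2) for i in range(min(len(relevant), k)))
    return dcg / ideal if ideal else 0.0


def success_at_1(ranked, relevant):
    return 1.0 if ranked and ranked[0] in relevant else 0.0


def recall_at_k(ranked, relevant, k=10):
    return len(set(ranked[:k]) & relevant) / len(relevant) if relevant else 0.0


def reciprocal_rank(ranked, relevant):
    for i, doc in enumerate(ranked, start=1):
        if doc in relevant:
            return 1.0 / i
    return 0.0


def evaluate(runs, labels, k=10):
    """Average each metric over labeled queries; missing runs count as empty rankings."""
    n = len(labels) or 1
    def mean(metric):
        return sum(metric(runs.get(q, []), rel) for q, rel in labels.items()) / n
    return {
        f"ndcg@{k}": mean(lambda r, rel: ndcg_at_k(r, rel, k)),
        "success@1": mean(success_at_1),
        f"recall@{k}": mean(lambda r, rel: recall_at_k(r, rel, k)),
        "mrr": mean(reciprocal_rank),
    }
```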

For generated answers, I measure faithfulness and citation coverage. A short answer should stick to retrieved content and cite it. An LLM-as-judge can score coherence and groundedness, but I keep human spot checks in the loop. On the live side, I track click-through rate, scroll depth, zero-result rate, reformulation rate, search-assisted conversions, and support deflection.
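
Citation coverage is easy to automate as a first-pass check. This minimal sketch assumes answers cite numbered sources with `[n]` markers. It reports the share of sentences that cite a valid source, along with any out-of-range references. It does not verify that a cited passage actually supports the sentence, which is why the judge and human spot checks stay in the loop.

```python
import re


def citation_report(answer, num_sources):
    """Share of sentences citing at least one valid [n] source, plus out-of-range refs."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", answer.strip()) if s]
    cited, invalid = 0, set()
    for sentence in sentences:
        refs = {int(n) for n in re.findall(r"\[(\d+)\]", sentence)}
        valid = {n for n in refs if 1 <= n <= num_sources}
        invalid |= refs - valid
        if valid:
            cited += 1
    coverage = cited / len(sentences) if sentences else 0.0
    return {"coverage": coverage, "invalid_refs": sorted(invalid)}
```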

What to tune and how

- Chunk size and overlap

- Top-K values for vector and BM25 recall

- Fusion weights and field boosts

- Embedding and re-ranker models

- Answer length, citation format, and confidence thresholds

I run A/B tests with enough traffic to get stable results. I add user feedback loops (thumbs up or down with citation capture) and pipe that signal back into training and rules. Over time, the system feels smarter because it learns from real behavior, not just lab data.
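
For conversion-style A/B metrics, a two-proportion z-test is a common way to decide whether a lift is more than noise. This sketch uses only the standard library. It relies on the normal approximation, so it assumes reasonably large samples. It also does not correct for peeking at results mid-test.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates between control A and variant B."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return {"lift": p_b - p_a, "z": z, "p_value": p_value}
```

For example, 200 versus 260 search-assisted conversions out of 10,000 sessions per arm gives z of about 2.83. That is comfortably significant at the 5 percent level.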

Competitor gap worth closing

Many guides talk about the why and skip the how. The edge comes from a practical scorecard and steady iteration. When I can show a lift chart, the conversation moves from theory to results.

RAG for enterprise site search

Mapping products and services to a vendor-neutral stack

- Embeddings: OpenAI text-embedding models, Cohere Embed, Vertex AI text embeddings, Amazon Titan Embeddings, or open source models like BGE.

- Vector stores: Pinecone, Weaviate, Elasticsearch or OpenSearch kNN, Vespa, Milvus, or pgvector for Postgres.

- Re-rankers: cross-encoders such as MS MARCO-trained models, or Cohere Rerank.

- LLMs: GPT-4 or GPT-4.1, the Claude 3 family, Gemini, Mistral, or Llama 3 for private setups.

- Orchestration: LangChain, LlamaIndex, or light custom code for clarity and control.

- Observability and eval: Arize Phoenix, WhyLabs, Promptfoo, DeepEval, and OpenTelemetry traces.

- Safety and privacy: PII redaction, content filtering, allow lists, and per-tenant keys.

- Caching and delivery: CDN or edge cache for static assets and cached answer snippets when policy allows.

Enterprise concerns that matter on day one

- Auth-aware retrieval respects user roles and permissions.

- Data residency and encryption at rest satisfy auditors and regional requirements.

- Audit logs record what was retrieved, re-ranked, and shown.

- Rate limit handling and retries protect upstream services.

- SLOs define latency and uptime, tied to clear escalation paths.

- Cost control via cached prompts, shorter contexts, selective generation, and model routing.
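
Rate-limit handling usually means retries with capped exponential backoff and jitter. This sketch assumes a hypothetical `RateLimitError` that you raise when a provider returns a throttling response. The `sleep` function is injectable, so the logic can be tested without waiting.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error type; map your provider's 429 or throttling errors onto it."""


def call_with_retries(fn, max_attempts=5, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Retry fn on RateLimitError with capped exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, delay))  # full jitter spreads retries across clients
```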

Reference deployment patterns

- Managed SaaS for teams that want speed, less ops overhead, and vendor support. Good for standard content and moderate scale.

- Self-hosted for teams that need tight control, custom privacy rules, and deep integration with internal systems.

- A hybrid model where public content runs on a managed stack and private content runs behind your firewall with federated queries.

A governance layer helps. I define who can change synonyms, weights, and rules. I limit who can add new sources. I keep a staging environment for trials and a simple approval workflow so experiments do not break production.

Seasonal and trend notes

Search behavior shifts during budget season, compliance deadlines, and after new regulations land. I plan small, focused indexing jobs around those cycles. Support teams will thank you when contact volumes spike.

Grounding that builds trust, again

It bears repeating: grounding LLM answers with site content is not only safer, it is persuasive. When a prospect sees a concise answer and a reliable citation from a service page or a recent case study, the next click is natural. Over time, I see fewer reformulations, longer sessions, and more goal completions from search-led journeys.

A short reminder on measurement

I run weekly reviews of the metrics set in month one. I watch zero-results, Success@1, and support deflection. I pull a handful of live answers, read them like a buyer would, and ask one question: would I trust this? If the honest answer is yes most of the time, site search is finally pulling its weight. If not, the roadmap above makes the next tuning steps clear.
