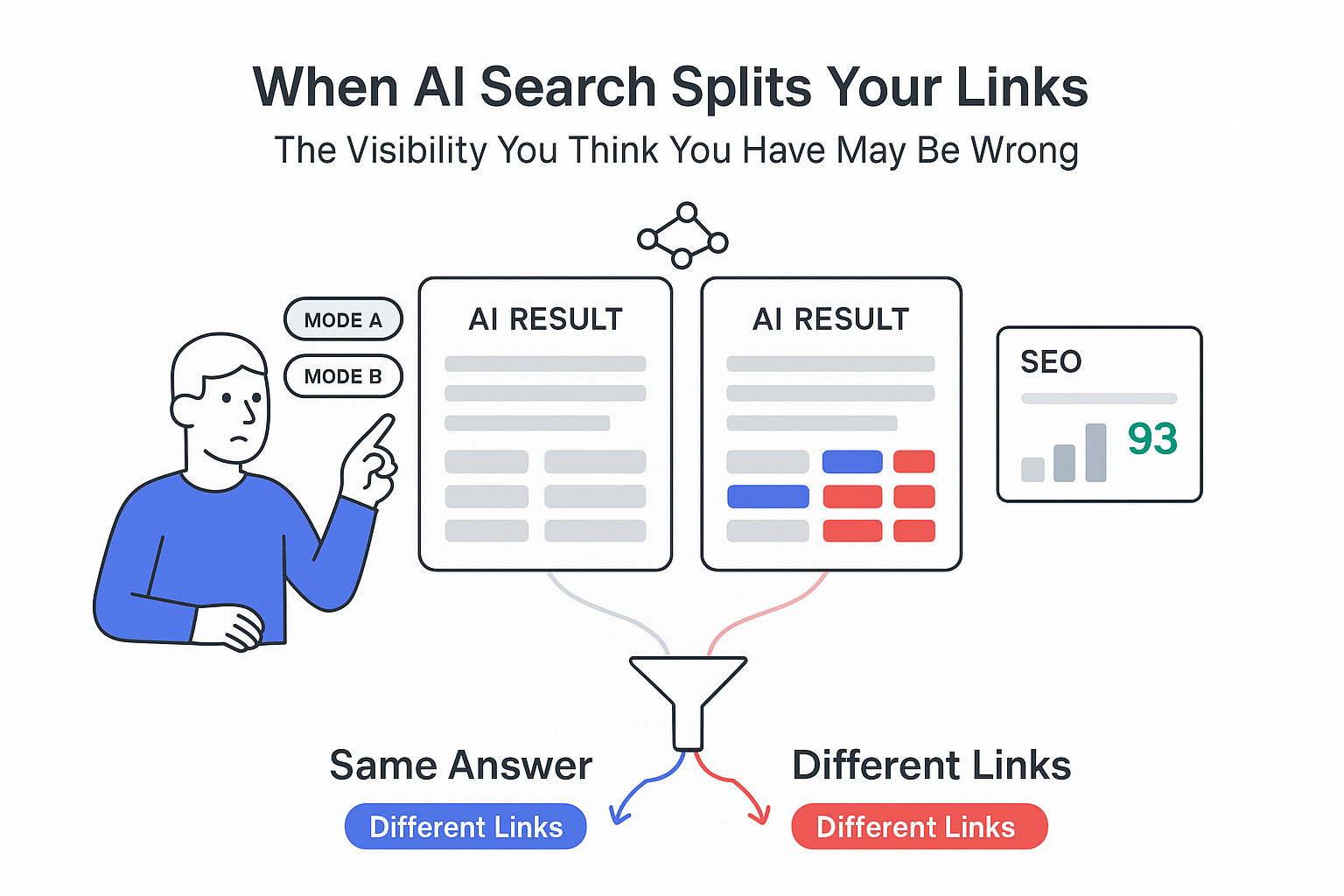

Google's experimental AI search experiences now show measurable differences in how they cite sources, even when their answers carry nearly the same meaning. New September 2025 data from Ahrefs' Brand Radar provides one of the first large-scale looks at how often Google AI Mode and AI Overviews agree on content but disagree on URLs.

Google AI Mode and AI Overviews - Executive Snapshot

- AI Mode and AI Overviews cited at least one identical URL for the same query only 13.7% of the time; overlap across the top three citations rose slightly to 16%.[S1]

- Despite low URL overlap, responses were highly aligned in meaning - average semantic similarity was 0.86 on a 0-1 scale, with 89% of pairs above 0.8.[S1]

- Text overlap was modest: only 16% overlap in unique words, and just 2.5% of response pairs shared the same first sentence.[S1]

- AI Mode answers were roughly 4× longer and mentioned a mean of 3.3 entities per response vs 1.3 for AI Overviews; AI Mode included all entities from the Overview 61% of the time and added more.[S1]

- AI Overviews leaned more on video and core pages (for example, homepages) and cited YouTube most often; AI Mode cited Wikipedia more, plus about 3.5× more Quora and roughly 2× more health sites than AI Overviews.[S1]

Implication for marketers: Treat AI Mode and AI Overviews as separate surfaces with distinct citation and content patterns, rather than assuming success in one will carry over to the other.

Study methods and source notes on Google AI search features

Ahrefs' analysis, published on the Ahrefs blog and powered by its Brand Radar tool, examined how Google's AI Mode and AI Overviews respond to the same queries in the U.S. market.[S1] The dataset covered:

- Timeframe - September 2025 U.S. search data.[S1]

- Sample size - 730,000 query-response pairs for content similarity analysis and 540,000 query-response pairs for citation and URL overlap analysis.[S1] Each "pair" is the AI Mode and AI Overview output for the same query.

- Metrics used:

  - URL overlap (any shared citation, and overlap within the top three cited URLs).[S1]

  - Lexical similarity (overlap in unique words and match rates for the opening sentence).[S1]

  - Semantic similarity scores on a 0-1 scale, where 1.0 indicates identical meaning; 0.8 was used as a "high similarity" threshold.[S1]

  - Structural and content features such as answer length, number of entities/persons/brands mentioned, and presence or absence of citations.[S1]

  - Domain and content-type patterns across citations (for example, Wikipedia, YouTube, Quora, health sites, core pages vs articles).[S1]
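The source does not say how the semantic similarity scores were produced. As a minimal sketch, assuming each answer has already been converted to an embedding vector by some model (not named by Ahrefs) and that similarity is the cosine between the two vectors, the average score and the share above the 0.8 threshold could be computed as follows. The function names and toy vectors are hypothetical.

```python
import math

HIGH_SIMILARITY = 0.8  # "high similarity" threshold reported in the study


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # assumption: treat an all-zero vector as "no similarity"
    return dot / (norm_a * norm_b)


def summarize_similarity(vector_pairs):
    """vector_pairs: list of (ai_mode_vector, ai_overview_vector)."""
    scores = [cosine_similarity(a, b) for a, b in vector_pairs]
    mean_score = sum(scores) / len(scores)
    share_high = sum(s > HIGH_SIMILARITY for s in scores) / len(scores)
    return mean_score, share_high


# Toy vectors standing in for real embeddings (hypothetical data).
vector_pairs = [
    ([1.0, 0.0], [1.0, 0.0]),  # same direction
    ([1.0, 0.0], [0.0, 1.0]),  # orthogonal
]
mean_score, share_high = summarize_similarity(vector_pairs)
print(mean_score, share_high)
```

Cosine similarity can in principle be negative, so a reported 0-1 scale may involve rescaling or embeddings that rarely produce negative values; that detail is not available here.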

A related research study from Ahrefs, based on repeated AI Overview generations, found that 45.5% of citations changed between updates, highlighting how volatile citations can be over time.[S4]

Google's own AI search documentation notes that AI Mode and AI Overviews may use query "fan-out" - multiple related searches across subtopics and data sources - and may rely on different models and techniques, which can yield different links and wording for the same query.[S3]

Key limitations and caveats:

- The analysis reflects a single generation from each surface per query; it does not capture variability across repeated runs for the same query.[S1][S4]

- The dataset is U.S.-only; behavior may differ in other locales or languages.[S1]

- Brand Radar coverage likely focuses on queries of commercial or informational interest to SEOs and brands, rather than a random sample of all Google searches. This can skew topic distribution (Interpretation - Tentative).

- Semantic similarity scores are reported, but the technical implementation (for example, the model used for embeddings) is not detailed in the material reviewed here (Interpretation - Tentative).

Findings on URL overlap, semantic similarity, and source patterns

Ahrefs' data paints a consistent picture: AI Mode and AI Overviews usually arrive at similar answers but choose different wording and different sources to express them.

URL citation overlap is low

- Across 540,000 query pairs, AI Mode and AI Overviews shared at least one identical URL only 13.7% of the time.[S1]

- Restricting the comparison to the top three citations on each surface, URL overlap increased but remained modest at 16%.[S1]

- Put differently, by either measure the two experiences did not overlap on cited URLs roughly 84-86% of the time for the same query.
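The exact overlap formula is not spelled out in the source, so the following is only a sketch of two plausible readings: a per-pair flag for "at least one identical URL" and a per-pair ratio of shared URLs to all distinct URLs cited (Jaccard overlap). Function names and the toy URLs are hypothetical, and URLs are compared as exact strings without normalization.

```python
def any_shared_url(mode_urls, overview_urls, top_n=None):
    """True if the two citation lists share at least one exact URL."""
    if top_n is not None:
        mode_urls, overview_urls = mode_urls[:top_n], overview_urls[:top_n]
    return bool(set(mode_urls) & set(overview_urls))


def jaccard_overlap(mode_urls, overview_urls, top_n=None):
    """Shared URLs divided by all distinct URLs cited by either surface."""
    if top_n is not None:
        mode_urls, overview_urls = mode_urls[:top_n], overview_urls[:top_n]
    a, b = set(mode_urls), set(overview_urls)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def mean(values):
    values = list(values)
    return sum(values) / len(values)


# Each pair: (AI Mode citations, AI Overview citations), in display order.
pairs = [
    (["https://a.example/1", "https://b.example/2", "https://c.example/3", "https://d.example/4"],
     ["https://a.example/1", "https://x.example/5", "https://y.example/6", "https://z.example/7"]),
    (["https://e.example/8", "https://f.example/9"],
     ["https://g.example/10", "https://h.example/11"]),
]

any_rate_all = mean(any_shared_url(m, o) for m, o in pairs)
any_rate_top3 = mean(any_shared_url(m, o, top_n=3) for m, o in pairs)
jaccard_all = mean(jaccard_overlap(m, o) for m, o in pairs)
jaccard_top3 = mean(jaccard_overlap(m, o, top_n=3) for m, o in pairs)
print(any_rate_all, any_rate_top3, jaccard_all, jaccard_top3)
```

One design note: truncating both lists to the top three can never raise the "any shared URL" rate, whereas a ratio measure can rise because the denominator shrinks. The reported increase from 13.7% to 16% is therefore at least consistent with a ratio-style metric, though this remains an inference rather than something the source confirms.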

High semantic match despite low text and URL overlap

- Average semantic similarity between AI Mode and AI Overview answers was 0.86 on a 0-1 scale.[S1]

- 89% of response pairs scored above 0.8, which Ahrefs interprets as "high semantic similarity" - essentially aligning on meaning even when the phrasing differed.[S1]

- Lexical overlap was limited: only 16% of unique words were shared between the two answers on average, and only 2.5% of response pairs had an identical first sentence.[S1]

9 out of 10 times, AI Mode and AI Overview agreed on what to say. They just said it differently and cited different sources.[S1]
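The lexical figures above can be approximated with simple set operations. This is a sketch only, assuming word overlap is the share of unique words common to both answers out of all unique words in either (Jaccard), and that sentences end at ".", "!" or "?"; the tokenization rule and function names are hypothetical, not Ahrefs' method.

```python
import re


def unique_words(text):
    """Lowercased set of word tokens (letters, digits, apostrophes)."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def word_overlap(answer_a, answer_b):
    """Shared unique words divided by all unique words in either answer."""
    words_a, words_b = unique_words(answer_a), unique_words(answer_b)
    union = words_a | words_b
    return len(words_a & words_b) / len(union) if union else 0.0


def first_sentence(text):
    """Naive split: everything up to the first ., ! or ? followed by whitespace."""
    return re.split(r"(?<=[.!?])\s+", text.strip(), maxsplit=1)[0]


def same_first_sentence(answer_a, answer_b):
    return first_sentence(answer_a).lower() == first_sentence(answer_b).lower()


# Hypothetical answer texts for illustration.
ai_mode_answer = "Cats sleep a lot. They nap often."
ai_overview_answer = "Cats sleep a lot. Most cats rest many hours."

print(word_overlap(ai_mode_answer, ai_overview_answer))
print(same_first_sentence(ai_mode_answer, ai_overview_answer))
```

In this toy pair the opening sentences match while only 4 of 11 unique words are shared, which illustrates how two answers can agree on meaning yet score low on word overlap.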

Different source and content-type preferences

- Wikipedia appeared in 28.9% of AI Mode citations vs 18.1% in AI Overviews, indicating heavier reliance on this reference source in AI Mode.[S1]

- AI Mode cited Quora 3.5× more often and health sites at roughly double the rate of AI Overviews.[S1]

- AI Overviews leaned strongly into video content:

  - YouTube was the most frequently cited domain for AI Overviews.[S1]

  - AI Overviews cited videos and core pages (for example, homepages) nearly twice as often as AI Mode.[S1]

- Reddit was cited at similar rates in both features.[S1]

- Across both AI Mode and AI Overviews, there was a clear preference for article-format pages over other formats.[S1]
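A comparison like the Wikipedia figures above can be reproduced on your own citation exports. The sketch below assumes the metric is the share of all cited URLs on a surface that belong to a given domain (the source could equally mean the share of responses citing that domain); the function names and URLs are hypothetical.

```python
from urllib.parse import urlparse


def matches_domain(url, domain):
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def citation_share(cited_urls, domain):
    """Fraction of cited URLs that belong to the given domain."""
    if not cited_urls:
        return 0.0
    return sum(matches_domain(u, domain) for u in cited_urls) / len(cited_urls)


# Hypothetical flattened citation lists, one per surface.
ai_mode_urls = [
    "https://en.wikipedia.org/wiki/Sleep",
    "https://www.quora.com/some-question",
    "https://example.com/article",
    "https://en.wikipedia.org/wiki/Cat",
]
ai_overview_urls = [
    "https://www.youtube.com/watch?v=abc",
    "https://example.com/",
    "https://en.wikipedia.org/wiki/Sleep",
    "https://youtube.com/watch?v=def",
]

for domain in ("wikipedia.org", "youtube.com", "quora.com"):
    print(domain,
          citation_share(ai_mode_urls, domain),
          citation_share(ai_overview_urls, domain))
```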

Response length and entity/brand coverage

- AI Mode responses were about 4× longer on average than AI Overview responses.[S1]

- Entity mentions followed that pattern:

  - AI Mode responses mentioned an average of 3.3 entities per answer.

  - AI Overviews averaged 1.3 entities per answer.[S1]

- In around 61% of query pairs, AI Mode included all entities mentioned in the AI Overview answer and then added additional entities.[S1]

- Many answers contained no personal names or brands at all:

  - 59.41% of AI Overview responses had no person or brand mentions.

  - 34.66% of AI Mode responses had no person or brand mentions.[S1]

- Ahrefs links this to informational queries where named entities are not central to the answer.[S1]
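The "includes all Overview entities and adds more" relationship is a proper-superset test. A minimal sketch, assuming entities have already been extracted as strings, that matching is case-insensitive, and that pairs where the Overview names no entities are not counted (none of these choices are documented in the source):

```python
def normalize(entities):
    """Case-insensitive, whitespace-trimmed set of entity names."""
    return {e.strip().lower() for e in entities}


def mode_extends_overview(mode_entities, overview_entities):
    """True if AI Mode names every Overview entity plus at least one more."""
    mode, overview = normalize(mode_entities), normalize(overview_entities)
    # Assumption: an Overview with no entities does not count as "extended".
    return bool(overview) and overview < mode  # "<" is proper subset


def share_extended(entity_pairs):
    """Fraction of pairs where AI Mode is a proper superset of the Overview."""
    hits = sum(mode_extends_overview(m, o) for m, o in entity_pairs)
    return hits / len(entity_pairs)


# Hypothetical (AI Mode entities, AI Overview entities) per query.
entity_pairs = [
    (["Ahrefs", "Semrush", "Moz"], ["ahrefs"]),      # superset plus more
    (["Nike"], ["Adidas"]),                          # disjoint
    (["Google", "Bing"], ["Google", "Bing"]),        # identical, nothing added
]
print(share_extended(entity_pairs))
```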

Citation gaps and no-source answers

- Only 3% of AI Mode responses lacked any citations, compared with 11% of AI Overview responses.[S1]

- Ahrefs reports that missing citations typically appeared in cases such as:

  - Pure calculations

  - Sensitive topics

  - Situations where users were redirected to help centers

  - Unsupported languages or contexts where Google declined to show sources[S1]

- Within the observed dataset, AI Mode is more likely to display at least one source link than AI Overviews.

Google documentation context

- Google's AI search documentation states that AI Overviews and AI Mode can both use query fan-out, issuing multiple related searches that pull from different subtopics and data sources while generating a single answer.[S3]

- The same documentation notes that different models and techniques may power AI Overviews and AI Mode, which can lead to variation across responses and citations for the same query.[S3]

- Ahrefs' separate study showing 45.5% citation change across AI Overview updates underscores that not only are AI Mode and AI Overviews different from each other, but each one also shifts its citations over time.[S4]

Interpretation and implications for SEO and marketing

1. Visibility in AI Mode and AI Overviews should be measured separately (Likely)

Given only 13.7% URL overlap and 16% overlap when looking at top three citations, appearing in one surface does not strongly predict appearing in the other for the same query.[S1] From a measurement standpoint, AI Mode and AI Overviews behave more like two distinct discovery surfaces than minor variants of one result block.

Implication (Likely):

- Reporting that rolls "AI search visibility" into a single metric can hide important differences. Where possible, tracking should distinguish:

  - Citations and mentions in AI Overviews

  - Citations and mentions in AI Mode

- Brands that appear in one and not the other may have different content strengths (for example, strong video presence vs strong article or reference content).
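Separate tracking can be as simple as keeping the surface as a field on every observation and comparing the resulting query sets. The sketch below assumes a hypothetical record layout (`query`, `surface`, `urls`) and hypothetical surface labels; it is not tied to any particular tool's export format.

```python
from urllib.parse import urlparse


def cites_domain(urls, domain):
    """True if any URL's host is the domain or one of its subdomains."""
    for url in urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def queries_citing(records, surface, domain):
    """Set of queries where the given surface cited the domain."""
    return {
        r["query"]
        for r in records
        if r["surface"] == surface and cites_domain(r["urls"], domain)
    }


def visibility_by_surface(records, domain):
    """Split a domain's cited queries into both / AI Mode only / Overview only."""
    mode = queries_citing(records, "ai_mode", domain)
    overview = queries_citing(records, "ai_overview", domain)
    return {
        "both": mode & overview,
        "ai_mode_only": mode - overview,
        "ai_overview_only": overview - mode,
    }


# Hypothetical observations for a brand site at example.com.
records = [
    {"query": "best crm", "surface": "ai_mode", "urls": ["https://example.com/crm-guide"]},
    {"query": "best crm", "surface": "ai_overview", "urls": ["https://www.youtube.com/watch?v=abc"]},
    {"query": "crm pricing", "surface": "ai_mode", "urls": ["https://blog.example.com/pricing"]},
    {"query": "crm pricing", "surface": "ai_overview", "urls": ["https://example.com/"]},
    {"query": "what is a crm", "surface": "ai_overview", "urls": ["https://example.com/what-is-crm"]},
]
print(visibility_by_surface(records, "example.com"))
```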

2. Content meaning matters more than wording match, but sourcing is often re-decided (Likely)

High semantic similarity (0.86 average; 89% above 0.8) alongside low lexical overlap and low URL overlap suggests that Google's models converge on similar answers while independently choosing phrasing and supporting sources.[S1]

Implications (Likely):

- Optimizing for exact phrasing used in one AI surface is unlikely to guarantee reuse in the other.

- Underlying topic coverage, factual accuracy, and authority signals likely matter more than echoing AI wording (Interpretation - Tentative, based on general understanding of generative ranking behavior).

- Even if your page is a good answer, the system may still rotate among multiple viable sources across surfaces and over time, as suggested by the 45.5% citation change rate in AI Overviews.[S4]

3. AI Mode appears more "research-heavy" and entity-dense; AI Overviews more "result-light" and video-oriented (Likely)

AI Mode answers are longer, include more entities, and are more likely to include at least one citation; AI Overviews are shorter, with fewer entities and more no-source cases.[S1]

Implications (Likely):

- Brand exposure: AI Mode seems more likely to surface additional entities - including competitors - around a given topic. A mention there might carry higher risk of adjacent competitor mentions than a shorter Overview answer (Interpretation - Tentative).

- Content mix: AI Overviews' higher reliance on YouTube and other video or core pages signals that video presence and strong, concise landing pages may contribute more to visibility there, while detailed articles and reference-style content may be more often picked up by AI Mode.[S1]

- Messaging control: Shorter AI Overviews with fewer entities may compress brand differentiation, while longer AI Mode responses may provide room for more granular distinctions between solutions and features (Interpretation - Tentative).

4. Wikipedia, Quora, Reddit, and vertical sites play different roles by surface (Likely)

Heavier Wikipedia and Quora usage in AI Mode and higher YouTube and video use in AI Overviews indicate that each surface draws from different blends of UGC, reference, and multimedia content.[S1]

Implications (Likely):

- For topics where Wikipedia or major reference sites dominate, AI Mode may be harder to penetrate with brand-owned content than AI Overviews, unless a brand owns key reference-style assets.

- For topics with strong YouTube presence or where queries map well to how-to or explainer videos, AI Overviews may reward investment in well-structured video channels and video SEO more directly than AI Mode.[S1]

- With Reddit cited at similar rates in both, UGC can influence both surfaces; monitoring conversations and ensuring accurate information in relevant threads remains important (Interpretation - Tentative).

5. Many answers have no brand or person mentions, reducing direct commercial visibility (Likely)

More than half of AI Overview answers and over one-third of AI Mode answers contained no person or brand mentions at all.[S1]

Implications (Likely):

- For many informational queries, these AI features may resolve user intent without clearly crediting brands, even when they draw on brand-owned content behind the scenes.

- This reduces the direct brand-building effect of AI search for those queries, compared with traditional blue links where brand names are typically visible in titles and URLs (Interpretation - Tentative).

- Person or brand mentions appear in only a subset of answers (about 41% of AI Overview responses and 65% of AI Mode responses, by subtraction from the figures above), which makes surfacing in those entity-heavy answers especially important for awareness and consideration.

6. No-citation answers complicate attribution and trust signals (Likely)

With 11% of AI Overview answers and 3% of AI Mode answers lacking citations, some users will see AI-generated content without any visible source.[S1]

Implications (Likely):

- From a brand standpoint, there is no direct path from such answers to owned properties, which makes it harder to link AI-assisted visibility to traffic or conversions.

- In sensitive or regulated categories where Google withholds citations, organic influence may shift more strongly toward source material that trains the model, even though it is not directly credited (Interpretation - Speculative; training data sources are not disclosed).

- For measurement, this supports segmenting AI search impressions into "with sources" and "no sources," as their value for referral and brand exposure differs.
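The "with sources" vs "no sources" split can be derived directly from whether a response carries any citations. A minimal sketch, using a hypothetical record layout with `surface` and `urls` fields:

```python
from collections import Counter


def segment_by_sources(records):
    """Count responses per (surface, segment), where segment reflects citations."""
    counts = Counter()
    for r in records:
        segment = "with_sources" if r["urls"] else "no_sources"
        counts[(r["surface"], segment)] += 1
    return counts


def no_source_rate(records, surface):
    """Share of a surface's responses that show no citations at all."""
    counts = segment_by_sources(records)
    without = counts[(surface, "no_sources")]
    total = without + counts[(surface, "with_sources")]
    return without / total if total else 0.0


# Hypothetical observations.
observations = [
    {"surface": "ai_overview", "urls": []},
    {"surface": "ai_overview", "urls": ["https://example.com/a"]},
    {"surface": "ai_mode", "urls": ["https://example.com/b"]},
    {"surface": "ai_mode", "urls": ["https://example.com/c", "https://example.org/d"]},
]
print(no_source_rate(observations, "ai_overview"), no_source_rate(observations, "ai_mode"))
```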

Contradictions and gaps in the current data

Snapshot vs dynamic system

- Ahrefs' main study relies on single generations per query, yet its own separate research shows 45.5% of AI Overview citations change when the Overview updates.[S4]

- This means the observed 13.7% URL overlap between AI Mode and AI Overviews may expand or shrink across runs, and any ranking of "top AI surfaces for our brand" is inherently unstable (Interpretation - Likely).
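To gauge this instability on your own tracked queries, citations from two generations of the same answer can be compared. The sketch below assumes "change" means the share of previously cited URLs that no longer appear in the later generation; the definition behind the 45.5% figure is not given in the source, and the function name and URLs are hypothetical.

```python
def citation_change_rate(before_urls, after_urls):
    """Share of earlier citations that are missing from the later generation."""
    before, after = set(before_urls), set(after_urls)
    if not before:
        return 0.0  # assumption: nothing to lose if nothing was cited before
    return len(before - after) / len(before)


# Hypothetical citations captured on two different dates for one query.
first_run = [
    "https://a.example/1",
    "https://b.example/2",
    "https://c.example/3",
    "https://d.example/4",
]
second_run = [
    "https://a.example/1",
    "https://c.example/3",
    "https://e.example/5",
]
print(citation_change_rate(first_run, second_run))
```

Running the same comparison across many queries and dates would show how much any single-snapshot overlap figure could move between runs.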

Semantic similarity vs UX similarity

- The high semantic similarity score suggests that users often get functionally similar answers regardless of surface.[S1]

- However, shorter AI Overviews, different source mixes, and higher video inclusion may significantly change user perception and click behavior compared with longer AI Mode answers (Interpretation - Tentative, because click data is not included in Ahrefs' report).

Missing dimensions: traffic, CTR, and revenue impact

- The Ahrefs analysis focuses on content and citation patterns, not on user engagement metrics such as click-through rate, dwell time, or downstream conversions.[S1]

- Businesses still lack data that connects AI Mode and AI Overview presence to measurable commercial outcomes - a key gap for budgeting and channel planning (Interpretation - Likely).

Population and topic bias

- The dataset is U.S.-only and built from queries monitored in Ahrefs' Brand Radar, which are likely skewed toward SEO- and brand-relevant keywords.[S1]

- Behavior for low-volume, long-tail, or non-commercial queries, and for non-U.S. markets, remains largely unquantified (Interpretation - Tentative).

Limited transparency into model differences

- Google acknowledges that AI Mode and AI Overviews may use different models and techniques and can apply query fan-out, but does not disclose ranking logic for citations or how these systems share or separate underlying components.[S3]

- As a result, marketers and SEOs know what differs (URLs, source types, length) but not exactly why those choices are made, which constrains precise optimization (Interpretation - Likely).

Data appendix - key numbers at a glance

Sample and scope

- 730,000 AI Mode vs AI Overview query pairs analyzed for content similarity.[S1]

- 540,000 query pairs analyzed for URL and citation overlap.[S1]

- Geography: United States.[S1]

- Timeframe: September 2025.[S1]

Overlap and similarity

- URL overlap (any shared citation): 13.7% of query pairs.[S1]

- URL overlap (within top three citations): 16%.[S1]

- Average semantic similarity: 0.86 (0-1 scale).[S1]

- Share of pairs with semantic similarity > 0.8: 89%.[S1]

- Unique word overlap: 16% on average.[S1]

- Identical first sentence across both responses: 2.5% of pairs.[S1]

Entities, brands, and citations

- Mean entities per answer: 3.3 for AI Mode; 1.3 for AI Overviews.[S1]

- Cases where AI Mode includes all Overview entities and more: 61% of pairs.[S1]

- Responses with no person or brand mentions: 59.41% (AI Overviews); 34.66% (AI Mode).[S1]

- Responses with no citations: 11% (AI Overviews); 3% (AI Mode).[S1]

Source mix

- Wikipedia share of citations: 28.9% in AI Mode; 18.1% in AI Overviews.[S1]

- Quora: cited about 3.5× more in AI Mode than in AI Overviews.[S1]

- Health sites: cited at about 2× the rate in AI Mode vs AI Overviews.[S1]

- AI Overviews:

  - YouTube is the most cited domain.[S1]

  - Videos and core pages are cited nearly 2× as often as in AI Mode.[S1]

- Both surfaces: strong preference for article-format pages.[S1]

Volatility (from related study)

- In a separate Ahrefs analysis, 45.5% of AI Overview citations changed when the Overview updated, showing high volatility even within one surface.[S4]

Sources

- [S1] Ahrefs, "AI Overviews vs AI Mode," Brand Radar-based analysis of U.S. queries, September 2025 dataset, published on the Ahrefs blog.

- [S3] Google Search Central, "AI-powered features in Search," documentation on AI Overviews, AI Mode, query fan-out, and model variation.

- [S4] Ahrefs, related research on AI Overview citation changes over time ("AI Overview Change").
