If I see a page that used to bring in qualified leads slipping a little each month, I do not treat it as random noise. I read that slow drift as content decay: softer rankings, fewer clicks, weaker engagement, and then, a bit later, fewer calls, booked meetings, or demo requests.

For B2B service firms, that decline gets expensive quickly because organic search often supports the pipeline long before sales feels the gap. Most pages do not collapse on day one. They lose ten clicks here, eight there, and then settle just below the top positions, where far fewer searchers click. That is why I treat decay as something to watch with a calm, repeatable process, not something to panic over.

What Is Content Decay?

I define content decay as the gradual decline in a page’s organic performance over time. Performance is more than rank. It includes impressions, clicks, CTR, engagement, conversions, and the page’s ability to attract the right visitors in the first place.

I do not assume there is only one cause. A page can age, the query can change, another page on the same site can start stealing impressions, and a tracking problem can make the loss look worse than it is. I treat content decay as a diagnosis, not a guess.

A simple blog example makes it obvious. A post on “CRM onboarding tips for 2024” can perform well for a while, then slip as tools change, examples age, and searchers start looking for AI workflow ideas instead of basic setup advice. Traffic slides. That is content decay.

The same thing happens on service pages. A page for “fractional CFO services” may still rank on page one, yet lead volume falls because the copy is vague, proof points are dated, pricing language is absent, and the page no longer answers the questions buyers ask when they validate vendors online before talking to sales. Rankings can look fine while business value drops. I still count that as decay.

How to Identify Content Decay

When I diagnose content decay, I start where the pain shows up first: traffic, rankings, or lead volume. If a page that once brought in form fills or booked calls is now flat or falling, I compare the last 90 days with the previous 90 days in Google Search Console. Then I check the same page in GA4 for organic conversions. I am not looking for traffic decline on its own. I want the pattern behind the drop.

| Metric | What I compare | What a drop may mean |
|---|---|---|
| Clicks | Last 90 days vs. previous 90 days | Lower demand, weaker rankings, or weaker CTR |
| Impressions | Same date range | Loss of visibility or a shift in search demand |
| Average position | Same date range | Declining rankings for core queries |
| CTR | Same date range | The title or description no longer matches what searchers expect |
| Query mix | Top queries by page | User intent may have shifted |
| Publish date | Original date vs. last meaningful update | Older pages may need a refresh |
| Conversions in GA4 | Organic landing page conversions | Lead value may be dropping even if traffic looks stable |

If clicks are down but impressions are flat, I usually look at CTR first. The title may no longer reflect what searchers want from the result. If impressions fall first, visibility is often the issue. If traffic and conversions both fall, the page is no longer pulling its weight.
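The first-pass rule above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive diagnostic: `PeriodStats`, `first_check`, and the 5 percent `flat_band` tolerance are hypothetical names and values chosen for the example, and the numbers would come from a 90-day vs. previous-90-day export for a single page.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Totals for one page over one date range (hypothetical structure)."""
    clicks: int
    impressions: int


def pct_change(current: float, previous: float) -> float:
    """Relative change from previous to current, e.g. -0.25 for a 25% drop."""
    if previous == 0:
        return 0.0
    return (current - previous) / previous


def first_check(current: PeriodStats, previous: PeriodStats,
                flat_band: float = 0.05) -> str:
    """Suggest where to look first.

    flat_band is an assumed tolerance for calling a metric "flat";
    it is not an industry standard and should be tuned per site.
    """
    clicks_delta = pct_change(current.clicks, previous.clicks)
    impressions_delta = pct_change(current.impressions, previous.impressions)

    if impressions_delta < -flat_band:
        return "visibility"  # impressions fell: check rankings and demand
    if clicks_delta < -flat_band:
        return "ctr"  # clicks fell while impressions held: check title and snippet
    return "stable"


last_90 = PeriodStats(clicks=800, impressions=20000)
prev_90 = PeriodStats(clicks=1000, impressions=20200)
print(first_check(last_90, prev_90))
```

The function only points to the first place to look. Conversions from GA4 still need a separate check, because a page can look stable here and still lose lead value.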

Service pages can be trickier. Sometimes a page holds a decent rank but brings in fewer sales conversations because it still speaks to an older set of buyer questions. In those cases, I often see weaker engagement and conversion rates before I see a sharp ranking drop.

| Pattern | What it usually looks like | What I check first |
|---|---|---|
| Content decay | Slow drop across weeks or months | Query trends, CTR, intent match, page age |
| Seasonality | Similar rise and fall at the same time each year | Year-over-year data and the sales calendar |
| Technical issue | Sharp drop after a deploy, migration, or tracking change | Indexing, crawl errors, canonicals, analytics tags |

Some of the best recovery candidates sit between positions 4 and 15. They are close enough to win back, but far enough down to lose a meaningful share of clicks.
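Pulling that shortlist out of a page-level export could look like the sketch below. The dictionary keys (`url`, `position`) and the sample pages are assumptions for illustration, not a fixed export format.

```python
def recovery_candidates(pages, low=4.0, high=15.0):
    """Return pages whose average position falls within [low, high],
    ordered from best-ranked to worst-ranked."""
    hits = [p for p in pages if low <= p["position"] <= high]
    return sorted(hits, key=lambda p: p["position"])


# Hypothetical sample data; real rows would come from a page-level report.
pages = [
    {"url": "/a", "position": 2.1},
    {"url": "/b", "position": 11.4},
    {"url": "/c", "position": 6.8},
    {"url": "/d", "position": 23.0},
]

for page in recovery_candidates(pages):
    print(page["url"], page["position"])
```

Average position is blended across queries, so a page that lands in this band is a prompt for a closer look at its core queries, not a verdict.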

The Content Lifecycle

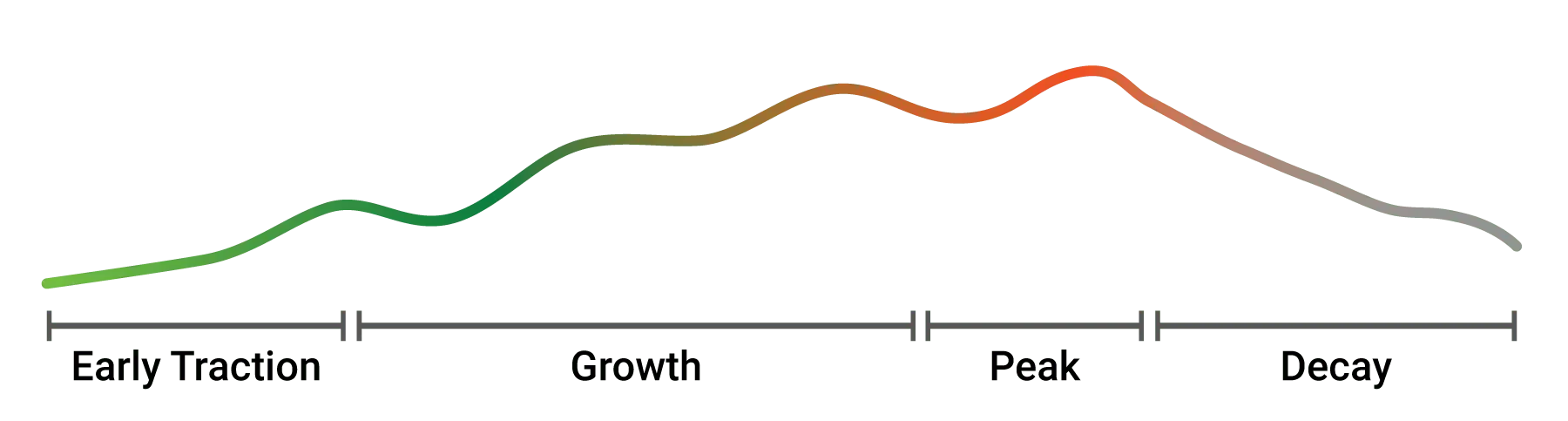

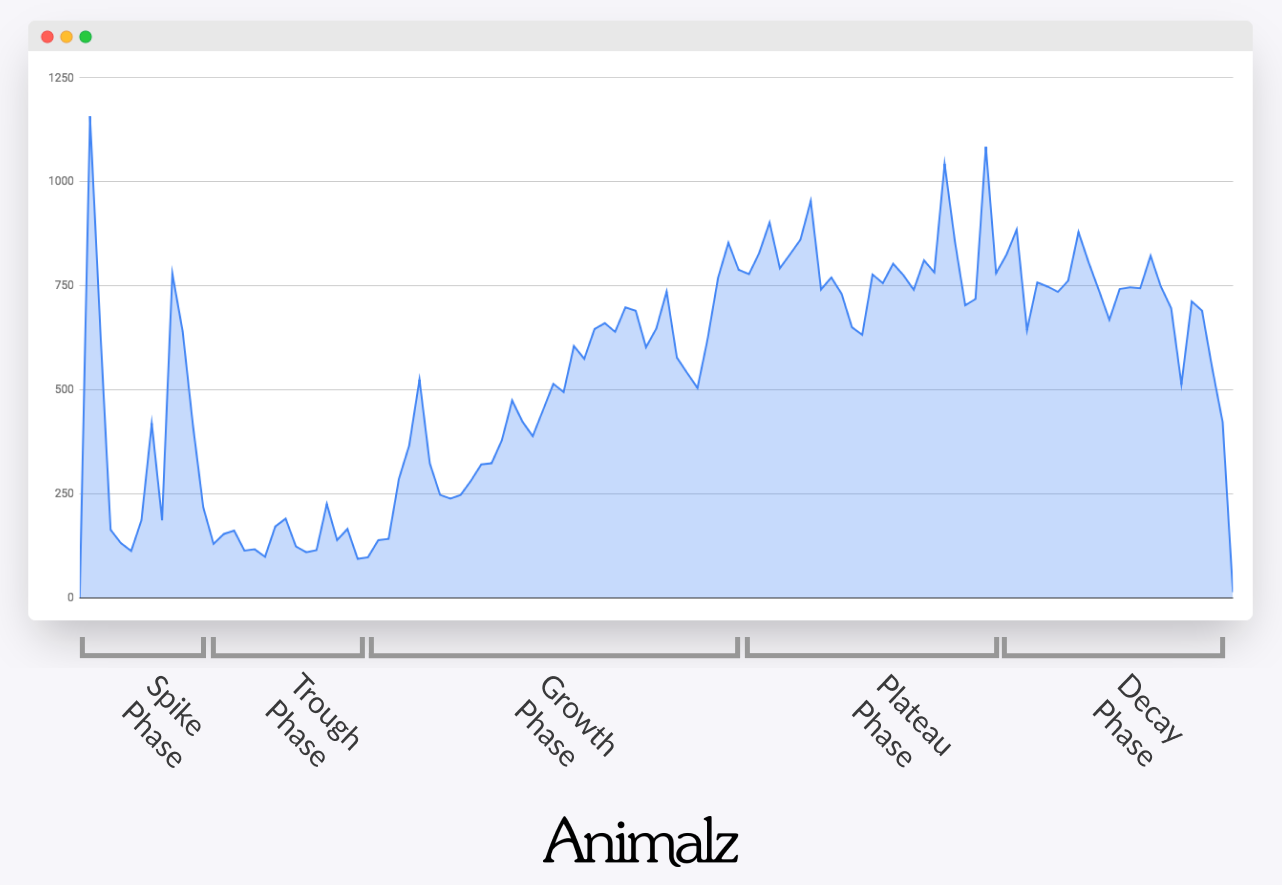

I think every strong page moves through a recognizable lifecycle. The timing varies, and some pages stay near the top for years, but the pattern is common enough to plan around. Animalz’s 2018 study is still a useful way to visualize how pages launch, grow, peak, decline, and then recover through a refresh.

| Stage | What usually happens | What I watch |
|---|---|---|
| Launch | Page gets indexed and starts earning first clicks | Indexing, crawl status, first query set |
| Growth | Rankings improve and traffic builds | New queries, internal links, CTR |
| Peak | Traffic and leads level out at a high point | Competitor changes, freshness signals, conversion rate |
| Decline | Clicks, impressions, or leads start to drift down | Query loss, weaker intent match, lower CTR |
| Refresh | Page is updated, merged, or improved | Recovery in clicks, rankings, and conversions |

Peak is the stage I see overlooked most often. A page is working, so nobody touches it. That is understandable. It is also when decay often starts quietly in the background. Search results change, buyer questions change, and newer pages begin answering the same query with more precision.

For B2B service sites, the refresh stage matters more than many teams expect. A well-timed update to a service page or a bottom-funnel article can steady pipeline without increasing paid spend. On paper that can look like a small win. In practice, it rarely is.

Content Freshness

I treat freshness as intent-dependent, not universal. It matters when searchers expect recent facts, current comparisons, or up-to-date pricing. It matters less when they want a stable definition or a process that changes very little. That is why I prefer reviewing pages through a search intent taxonomy for B2B instead of applying one blanket rule to every URL.

The results page usually makes recency visible. If the top pages show recent years in titles, fresh statistics, comparison tables, or current product details, freshness matters. If the same pages have ranked for years and the query is essentially definitional, clarity usually matters more.

| Queries that usually need freshness | Queries that often need clarity more than freshness |
|---|---|
| AI tool comparisons | What is demand generation |
| Industry statistics | What is a service level agreement |
| Pricing roundups | How lead scoring works |
| Tax or policy changes | What is EBITDA |
| Software feature comparisons | Sales funnel definition |
| “Top tools in 2026” searches | Basic how-to guides with stable logic |

A query like “best CRM for consultants” changes often because feature sets, pricing, and review signals change. A query like “what is pipeline velocity” can stay useful for a long time if the explanation is clear and the examples still hold up.

I also watch for changes in the results page itself. Expanded SERP features, including AI-generated summaries, can reduce clicks even when rankings hold steady. So if impressions are stable but clicks fall, I do not assume decay right away. Sometimes the page is fine and the click pattern changed around it. That is one reason I pay attention to B2B SERP feature strategy when I review falling CTR.

Causes of Content Decay

The cleanest way I know to diagnose content decay is to group causes into content issues, SERP issues, and site issues. That keeps the fix tied to the real problem.

| Group | Common causes | What it looks like |
|---|---|---|
| Content issues | Outdated information, shallow coverage, stale examples, weak title or intro | CTR drops, engagement softens, rankings slip slowly |
| SERP issues | Search changes, user intent shifts, fresher competing pages, new result types | Query mix changes, impressions drop, rank pattern changes |
| Site issues | Content cannibalization, weaker internal linking, orphan pages, crawl or index problems | Multiple pages split impressions, pages lose support, traffic falls unevenly |

When I look at content issues, I am usually seeing a page that is simply less useful than it once was. Old statistics, dated examples, missing subtopics, and weak structure all wear down performance over time. This is common on software comparisons, trend posts, and service pages written in language that no longer matches how buyers think.

SERP issues are subtler. Searchers may want something different now. A query that once favored long educational posts may now favor product-led pages, quick comparisons, or case-study-style content. Or the results page may show more video, more AI answers, or more local elements. The keyword may be the same, but the competitive landscape is not. After broad updates, that shift can happen fast, and a recent analysis of a Google core update is a good reminder of how much rankings can move.

Site issues can be harsh because the page itself may still be good. A redesign can weaken internal links. A new article can start competing with an older one. Category pages and blog posts can end up chasing the same phrase. That is content cannibalization, and if you see that pattern often, it is worth reviewing how to avoid cannibalization on B2B service sites.

One caution matters here: broken tracking is not content decay. If GA4 events disappear or Search Console data looks odd after a migration, I fix measurement first. Otherwise, it is easy to rewrite the wrong page for the wrong reason.

The Impact of Content Decay

I do not think the impact of content decay stops at rankings. Rankings matter, and traffic decline matters, but for B2B service firms the larger hit is often commercial.

A post that once ranked for a high-intent topic can stop sending visitors to service pages. A service page can still attract visits, but from the wrong audience, which means weaker engagement and fewer qualified leads. Then paid channels have to do more work, and acquisition pressure rises.

There is also a broader topic effect. When several pages in the same cluster fade at once, it often becomes harder for new pages on that subject to gain traction. In other words, content decay is not only a page problem. Over time, it can become a site problem. That is one reason I still care about the topic cluster concept for B2B, even on relatively lean sites.

| Month | Organic clicks | Form fills | Sales calls booked |
|---|---|---|---|
| January | 4,800 | 41 | 18 |
| February | 4,620 | 39 | 17 |
| March | 4,180 | 34 | 14 |
| April | 3,620 | 28 | 11 |
| May | 3,050 | 22 | 8 |

The ratio is rarely neat, but the direction usually is. When clicks fall on pages tied to the pipeline, lead flow tends to follow.
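To make the table's direction concrete, here is a small sketch that computes the change in each column. The figures are copied from the table above; the function names are illustrative.

```python
CLICKS = [4800, 4620, 4180, 3620, 3050]   # January through May
FORM_FILLS = [41, 39, 34, 28, 22]
CALLS_BOOKED = [18, 17, 14, 11, 8]


def total_change_pct(series):
    """Percent change from the first value to the last, to one decimal."""
    first, last = series[0], series[-1]
    return round((last - first) / first * 100, 1)


def month_over_month_pct(series):
    """Percent change between each pair of consecutive months."""
    return [
        round((cur - prev) / prev * 100, 1)
        for prev, cur in zip(series, series[1:])
    ]


for name, series in [
    ("clicks", CLICKS),
    ("form fills", FORM_FILLS),
    ("calls booked", CALLS_BOOKED),
]:
    print(name, total_change_pct(series), month_over_month_pct(series))
```

On these figures, clicks fall about 36 percent from January to May, while form fills fall about 46 percent and booked calls about 56 percent. In this example the commercial metrics drop faster than traffic does.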

How to Fix Content Decay

This is where I see two common mistakes: treating every declining page the same, or doing almost nothing. Some pages need a light refresh. Some need a rewrite. Some should be merged. Some should be redirected. Some should be removed.

| If I see this | The likely move | Why |
|---|---|---|
| Facts are old, but intent is the same | Refresh | The page still matches the query and mainly needs current information |
| Top results now cover more subtopics | Expand | The page lacks the depth now expected in the SERP |
| Two or more pages split the same query set | Consolidate | One stronger page is better than internal competition |
| An old page has been replaced by a better one | Redirect | This preserves equity and removes confusion |
| A page has no traffic, no links, and no business value | Prune | This reduces clutter and keeps the site focused |

For many sites, the fastest wins sit just below the top results. Those pages are close enough to recover with strong edits rather than a full rebuild.

- Update facts and examples. I replace outdated years, product mentions, and evidence that weakens credibility.

- Match current user intent again. I look at the top results and ask whether the SERP now favors guides, comparisons, service pages, templates, or case studies.

- Tighten the page’s search presentation. I rewrite the title, description, opening copy, and key headings so they reflect what searchers want now.

- Add missing subtopics. Query reports, related questions, and competing pages often show where coverage is thin.

- Strengthen internal links. I add support from newer posts, topic hubs, and relevant service pages with clearer anchor text. If this is a recurring weakness on your site, a revenue-first model for internal linking is usually a better fix than ad hoc edits.

- Improve the reading experience. Shorter paragraphs, clearer tables, better information hierarchy, and cleaner page design all make the content easier to use.

- Republish with care. Changing the date alone is not enough. I make real edits, use redirects when pages are merged, and only move to a new URL when the topic has truly changed.

I also separate update, merge, and republish decisions carefully. I update when the topic and intent are still right and the page mainly needs fresher information or clearer coverage. I merge when two pages are competing for the same query set. I republish on the same URL when the subject is still the same but the page needs a substantial rewrite. I only use a new URL when the topic has changed so much that the original page no longer fits. When consolidation is the right move, proper 301 redirects matter.
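The decision table earlier in this section can be expressed as a simple triage function. This is only a sketch: the `PageSignals` fields are hypothetical yes/no judgments a reviewer would fill in by hand, and the order in which the checks run is an assumption, since the table does not rank the moves.

```python
from dataclasses import dataclass
from enum import Enum


class Move(Enum):
    REFRESH = "refresh"
    EXPAND = "expand"
    CONSOLIDATE = "consolidate"
    REDIRECT = "redirect"
    PRUNE = "prune"


@dataclass
class PageSignals:
    """Reviewer judgments for one declining page (hypothetical fields)."""
    has_traffic: bool
    has_links: bool
    has_business_value: bool
    replaced_by_better_page: bool
    splits_queries_with_other_page: bool
    missing_subtopics: bool


def likely_move(s: PageSignals) -> Move:
    """Map signals to a likely move. The precedence below is an assumption."""
    if not (s.has_traffic or s.has_links or s.has_business_value):
        return Move.PRUNE
    if s.replaced_by_better_page:
        return Move.REDIRECT
    if s.splits_queries_with_other_page:
        return Move.CONSOLIDATE
    if s.missing_subtopics:
        return Move.EXPAND
    # Default for a declining page whose intent still matches: update the facts.
    return Move.REFRESH


page = PageSignals(
    has_traffic=True,
    has_links=True,
    has_business_value=True,
    replaced_by_better_page=False,
    splits_queries_with_other_page=True,
    missing_subtopics=True,
)
print(likely_move(page).value)
```

A function like this is useful mainly for keeping a review list consistent across many pages. The judgment calls behind each flag still need a person looking at the SERP and the page.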

Pruning deserves a quick note too. Not every page should stay live forever. If a page no longer fits the business, attracts irrelevant traffic, or has no clear strategic use, removing it can help the site stay focused.

Recovery timelines vary. A light refresh can show movement within a couple of weeks, while a heavier rewrite, merge, or redirect often takes longer. Crawl frequency, the scale of the edits, and the competitiveness of the SERP all affect how quickly performance returns, if it returns at all.

Where I Look for Signals

No single report tells the whole story. I usually look across a few data sources, each answering a different question.

| Source | Best used for | What I watch |
|---|---|---|
| Google Search Console | Query loss, impression drops, CTR changes, page-level decline | Date-range comparisons, page filters, query mix |
| Google Analytics 4 | Lead decline, conversion-rate shifts, landing-page performance | Organic conversions, engagement, assisted conversions |
| Rank-tracking tools | Visibility trends and keyword movement | Position changes, keyword spread, overlap between pages |
| Site crawlers | Crawl issues, internal-link weakness, orphan pages | Status codes, canonicals, indexability, internal links |
| Content gap tools | Missing subtopics and shallow coverage | Topic coverage, relevance gaps, missing supporting sections |

Search Console is usually my first stop because it shows how Google Search is treating the page. GA4 tells me whether business value is falling too. Rank-tracking tools help me spot broader visibility shifts and possible cannibalization. Crawlers are especially helpful after redesigns or migrations, when site-level issues are more likely than content issues.

I keep one caution in mind: third-party visibility tools are useful, but they are still estimates. If Search Console and a third-party tracker disagree, I trust Search Console first for search performance.

Regular Maintenance

I find content decay easier to prevent than to rescue. Once a page has been slipping for six months, recovery can take time. A light monthly review often catches losses before they spread.

| Cadence | What I do | What I watch |
|---|---|---|
| Monthly | Review top landing pages in Search Console and GA4 | Clicks, impressions, CTR, conversions |
| Quarterly | Audit older pages and topic clusters | Intent match, cannibalization, internal links, outdated information |
| Trigger-based | Review pages after major site or service changes | Indexing, redirects, template shifts, conversion changes |

Common refresh triggers are fairly consistent: a 15 to 20 percent click drop across two straight months, a conversion-rate drop on a high-intent page, a major service or pricing change, a new site template or migration, or a visible SERP shift for a valuable query.
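The click-drop trigger is the easiest of these to automate. The sketch below uses one possible reading of it, which is an assumption: clicks fell in each of the last two months, and the combined drop over those two months is at least the threshold (15 percent by default). The function name and signature are illustrative.

```python
def click_drop_trigger(monthly_clicks, threshold=0.15):
    """Return True if clicks fell in each of the last two months and the
    combined drop over that window is at least `threshold`.

    monthly_clicks is a chronological list of monthly click totals.
    The interpretation of "two straight months" here is an assumption.
    """
    if len(monthly_clicks) < 3:
        return False  # need a baseline month plus two months of change
    start, mid, end = monthly_clicks[-3:]
    if start <= 0:
        return False
    fell_both_months = mid < start and end < mid
    combined_drop = (start - end) / start
    return fell_both_months and combined_drop >= threshold


print(click_drop_trigger([4800, 4620, 4180]))
print(click_drop_trigger([4620, 4180, 3620]))
```

With the click figures from the earlier table, January through March falls about 13 percent and does not trip the trigger, while February through April falls about 22 percent and does.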

I have found that ownership matters more than elaborate reporting. One person should own the review list, someone should handle updates, SEO should review query data and internal linking, and sales should feed in the buyer questions that keep coming up. For lean B2B teams, that kind of simple handoff prevents a lot of wasted motion.

That is really the point. I do not need more dashboards for the sake of it. I need fewer surprises, steadier lead flow, and a site that keeps earning trust instead of quietly losing ground.
