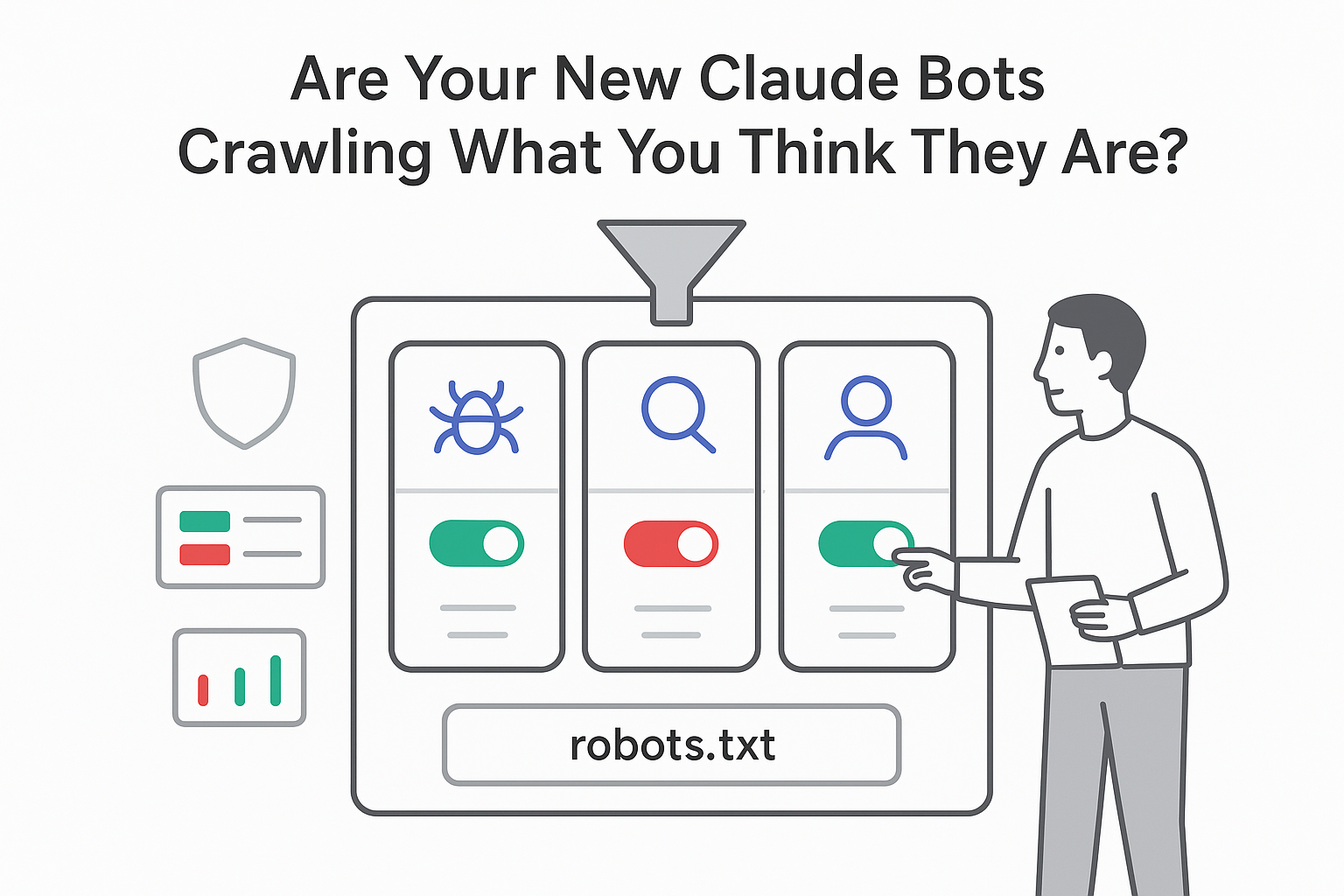

Anthropic has quietly restructured how its Claude crawlers appear to site owners, spelling out separate bots for training, search indexing, and user-triggered fetching, and clarifying that all three honor robots.txt.

Key Details

This week Anthropic updated its crawler documentation on its privacy site to list three distinct Claude bots, each with its own user-agent string and role:

- ClaudeBot - collects public web content as potential training data for Anthropic models.

- Claude-SearchBot - indexes content to support Claude's search and answer features.

- Claude-User - fetches individual pages when users request web browsing or ask questions that trigger live retrieval.

On the crawler page, Anthropic says all three bots follow robots.txt directives, including rules that specifically target Claude-User.

The documentation explains the impact of blocking each bot. For Claude-SearchBot, it notes that blocking the bot prevents indexing for search and optimization and

"may reduce your site's visibility and accuracy in user search results."

For Claude-User, the page uses similar language about potential reductions in visibility if robots.txt blocks the bot. Anthropic also notes that blocking ClaudeBot does not automatically block Claude-SearchBot or Claude-User, so site owners can choose different policies for training, search, and user-initiated fetching.

Anthropic's approach closely parallels OpenAI's three-bot structure, which separates GPTBot, OAI-SearchBot, and ChatGPT-User. Perplexity AI uses a two-bot model with PerplexityBot for indexing and Perplexity-User for user-directed retrieval.

A key difference lies in how user-initiated browsing is treated. Anthropic states that Claude-User follows robots.txt rules the same way as its automated crawlers. By contrast, OpenAI's documentation indicates that robots.txt rules may not apply to ChatGPT-User in the same way, and Perplexity says robots.txt generally does not govern Perplexity-User.

For marketers and publishers, this creates a more granular set of choices: sites can allow training while limiting search visibility, allow answers and search while blocking training, or block any combination of the three functions.

Background Context

The previous version of Anthropic's crawler page referenced only ClaudeBot and described data collection for model development in broad terms. Before ClaudeBot, Anthropic used the Claude-Web and Anthropic-AI user agents, which are now deprecated. Reporting by The Register in July 2024 highlighted those earlier user agents and publisher concerns.

OpenAI introduced a similar separation in late 2024 when it distinguished GPTBot from OAI-SearchBot and ChatGPT-User. In a December documentation update, OpenAI wrote that GPTBot and OAI-SearchBot share information to reduce duplicate crawling. As noted in that December update, this sharing is designed to limit redundant hits on publisher sites, while ChatGPT-User handles user-initiated browsing and may not follow robots.txt in the same way.

In its messaging to site owners, OpenAI is more direct in warning that blocking OAI-SearchBot will remove a site from ChatGPT search answers. Anthropic's wording focuses on the potential for reduced visibility and accuracy in Claude's search results if Claude-SearchBot or Claude-User are blocked.

Publisher Response and Traffic Impact

Recent measurement studies suggest many publishers are already taking a selective approach to AI crawlers:

- A BuzzStream study of leading news sites found that 79% block at least one AI training crawler.

- The same research reported that 71% block at least one retrieval or search crawler.

Together, these figures indicate that major publishers often block both AI training bots and search or retrieval bots, rather than allowing broad access.

Separate analysis by Hostinger, covering 66.7 billion bot requests, tracked shifting coverage for OpenAI's crawlers. In that sample, coverage for OpenAI's search crawler grew from 4.7% of sites to more than 55%, while coverage for its training crawler dropped from 84% to 12% over the same period.

Cloudflare's Year in Review report identified AI crawlers as a measurable share of overall web traffic but noted that referral traffic from AI platforms still trails raw crawler request volume. Google has already introduced the Google-Extended user agent, which lets sites exclude content from Gemini training while remaining in Google Search. Anthropic and OpenAI now document a similar separation between training and search crawlers on their own platforms.

Source Citations

Key facts in this article come from official documentation and technical reports, including:

- Anthropic crawler documentation describing ClaudeBot, Claude-User, Claude-SearchBot, and their robots.txt handling.

- OpenAI bot documentation describing GPTBot, OAI-SearchBot, ChatGPT-User behavior, and robots.txt guidance.

- BuzzStream research on AI crawler blocking patterns among major news publishers.

- Hostinger measurement report on OpenAI crawler coverage across 66.7 billion bot requests.

- Cloudflare Year in Review reporting on crawler and referral traffic patterns.

- Coverage and synthesis by Search Engine Journal and The Register on AI crawlers and publisher responses.

.svg)