How Google visual search fan-out could reprice multi-product demand

Google's new Gemini-powered visual search update turns a single image query into many parallel searches. That shift is likely to change how commercial intent is expressed, surfaced, and monetized across Search and Shopping surfaces.

This analysis examines Google's March 2026 update to Lens, Circle to Search, and AI Mode, where one image can now trigger many object-level searches in parallel. The core question: how this change alters search behavior and demand capture for product, local, and informational queries, and which adaptations marketing leaders should prioritize in SEO, paid search, and creative strategy.

Key takeaways

- Multi-object visual search creates more impressions per query. One "scene" (outfit, room, shelf, menu) can now spawn several product and information lookups, so a smaller number of visual queries can yield more results pages and ad-eligible slots for brands.

- Scene-based imagery becomes a demand surface. Lifestyle photos, UGC, and social content that show full outfits or rooms matter more than isolated packshots, because Google can dissect each scene and run separate searches for each item.

- Data structure and asset quality decide who shows up. Brands with clean product feeds, clear object-to-image mapping, descriptive alt text, and distinctive imagery are better positioned to be matched when AI Mode "fans out" sub-queries.

- Measurement and attribution will get messier. One screenshot search can lead to several downstream clicks, some organic and some paid. Marketers should expect more cross-surface journeys that start from images rather than typed keywords.

- Short term, large retail catalogs gain first. Over time, smaller brands that invest in rich, context-heavy imagery and structured data can surface disproportionately often in multi-object scenes.

Situation snapshot

Google published a "Techspert" explainer describing a major update to visual search and AI Mode, powered by Gemini models and building on Lens and Circle to Search on Android. [S1]

Undisputed facts from the article and linked materials:

- AI Mode can now break down a complex image (for example, an outfit, room, or garden) into multiple elements and issue several searches in parallel, a process Google calls "fan-out." [S1]

- The system uses Gemini as an orchestration layer: the model "sees" the image and the user's prompt, decides which tools to call (for example, Lens), triggers multiple searches (for each item), and composes a single, combined answer with links. [S1]

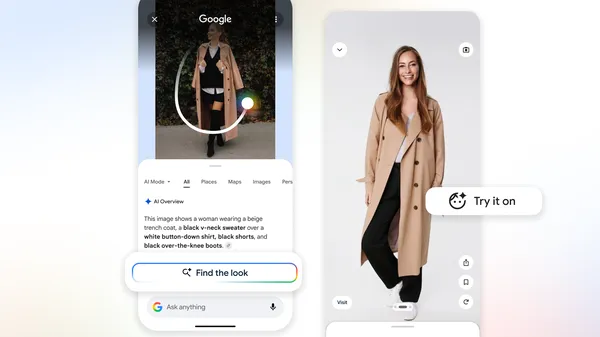

- Multi-object scenarios highlighted include full outfits, interior design scenes, gardens (plant care), museum walls (art explanations), and bakery windows (pastry identification). [S1]

- Users can start either from an image (Circle to Search, Lens) or from text in AI Mode, then pivot into visual refinement (for example, "show me more like the second skirt"). [S1]

- Google positions this as an evolution of multimodal search and of AI Mode / AI Overviews, not as a separate product. [S1][S2]

Google does not mention ads or monetization in this post; the focus is user assistance and richer answers.

Breakdown and mechanics: how AI Mode "fan-out" visual search works

At a high level, the process described by Google can be modeled as: user submits image (plus optional text) → Gemini interprets scene and intent → Gemini segments the scene into relevant objects and sub-questions → each sub-question triggers a search (Shopping, Images, Web) → the system reads results → Gemini writes a combined answer with links and visuals.
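The flow above can be sketched in a few lines of Python. This is an illustrative model only, not Google's implementation: `segment_scene`, `run_search`, `compose_answer`, and the hard-coded scene are hypothetical stand-ins, and the only point is to show several object-level lookups being launched concurrently and merged into one answer.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SubQuery:
    obj: str       # object detected in the scene, e.g. "lamp"
    vertical: str  # hypothetical target: "shopping", "images", or "web"


def segment_scene(image_label: str, prompt: str) -> list[SubQuery]:
    """Stand-in for scene understanding; the detected objects are hard-coded."""
    scenes = {"living_room": ["lamp", "rug", "chair"]}
    return [SubQuery(obj, "shopping") for obj in scenes.get(image_label, [])]


async def run_search(sq: SubQuery, style_hint: str) -> dict:
    """Stand-in for one backend lookup; a real system would call a search service."""
    await asyncio.sleep(0)  # yield control, simulating non-blocking I/O
    return {"object": sq.obj, "query": f"{style_hint} {sq.obj}", "vertical": sq.vertical}


async def fan_out(image_label: str, prompt: str, style_hint: str) -> list[dict]:
    sub_queries = segment_scene(image_label, prompt)
    # Fan-out: launch every sub-query concurrently; gather keeps input order.
    return await asyncio.gather(*(run_search(sq, style_hint) for sq in sub_queries))


def compose_answer(results: list[dict]) -> str:
    """Stand-in for the final step: merge per-object results into one answer."""
    return "; ".join(f"{r['object']}: results for '{r['query']}'" for r in results)


results = asyncio.run(fan_out("living_room", "where can I buy these?", "mid-century modern"))
answer = compose_answer(results)
print(answer)
```

The marketing-relevant detail is the `gather` step: one user action becomes as many retrieval events as there are detected objects, each of which can match a different page or product.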

Key mechanics and incentives:

- Scene understanding. Gemini uses its multimodal ability (vision plus language) to both locate objects in an image and infer why the user cares. For a living room image, it detects individual items (lamp, rug, chair) and infers the style (for example, mid-century modern) to bias retrieval. [S1][S2]

- Parallel search ("fan-out"). Instead of the user running five separate searches, AI Mode spins these off at once. Technically, that means more independent queries against Google's index and verticals for every qualifying visual search. [S1]

- Retrieval "library." The article frames Lens and the visual backend as a "library" that stores billions of web results. Gemini's job is choosing what to look up and how to summarize; the search backend's job is fast, precise matching to product pages, images, and informational content. [S1]

- Aggregation and ranking. For a fashion outfit, Gemini might ask the backend for "visually similar hat" or "visually similar jacket." The backend would then presumably rank candidates by relevance, quality, and commercial signals such as price, availability, and authority of the host site, much as Shopping and Image Search do today. [S3]

- User interaction loop. Users can refine within AI Mode (for example, "more like this skirt"), which likely feeds fresh engagement data back into visual matching systems: what people click, what they ignore, and which style labels correlate with dwell time and conversions.

Incentive-wise, Google benefits from:

- higher user satisfaction on mobile, where typing is slower

- more opportunities to show commercial results per session

- richer behavior data from visually driven exploration paths

Impact assessment

Paid Search and Shopping

Direction and scale.

- More product-matching events per query. When one screenshot produces several fashion or home décor searches, the number of eligible product slots per user session rises. Even if only a fraction of these fan-out sub-queries get ads, the inventory potential is higher than from a single keyword lookup.

- Shift toward visual and contextual signals. Product feeds that include multiple high-quality, context-rich images (for example, full outfit shots, staged rooms) become more valuable. Ads and free listings attached to those assets are more likely to surface when AI Mode seeks visually similar items.

- Price and assortment competition at the scene level. For a user recreating an entire look, Google can suggest complete sets such as hat, jacket, and shoes. This could increase multi-item baskets but also intensify competition on mid-price anchor items that define the look.

Who benefits and who loses.

- Beneficiaries:

  - large retailers and marketplaces with deep catalogs, structured feeds, and varied product imagery

  - brands already strong in Shopping ads and Merchant Center, since their SKUs are well indexed visually

- Potential losers:

  - advertisers relying mainly on text-only search campaigns without a strong presence in Shopping or image-based formats

  - niche brands using generic studio shots that are visually indistinguishable from many others, making visual recognition less precise

Actions and watchpoints.

- Audit product feeds for image richness and object clarity. Multiple angles, lifestyle shots, and distinct backgrounds can aid matching.

- Monitor Shopping and image-driven campaign performance, particularly on Android and in visually influenced traffic, watching for shifts in query types and impression volume tied to style- and scene-based lookups.

- Plan for campaigns that complement full-look or full-room queries, such as "complete the look" strategies and bundles.
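A feed audit of the kind described in the first action can start very simply. The sketch below assumes the feed has already been exported to a list of dictionaries keyed by the Merchant Center attribute names `image_link` and `additional_image_link`. The threshold of three images and the sample SKUs are illustrative assumptions, not Google guidance.

```python
def audit_feed(items, min_images=3):
    """Return (id, image_count) for items with fewer than min_images distinct image URLs.

    min_images is an arbitrary illustrative threshold, not a Google requirement.
    """
    flagged = []
    for item in items:
        urls = set()
        if item.get("image_link"):
            urls.add(item["image_link"])
        urls.update(item.get("additional_image_link", []))
        if len(urls) < min_images:
            flagged.append((item["id"], len(urls)))
    return flagged


# Hypothetical sample feed rows
feed = [
    {
        "id": "sku-1",
        "image_link": "https://example.com/a.jpg",
        "additional_image_link": [
            "https://example.com/a-room.jpg",
            "https://example.com/a-side.jpg",
        ],
    },
    {"id": "sku-2", "image_link": "https://example.com/b.jpg"},
]

flagged = audit_feed(feed)
print(flagged)
```

Counting images says nothing about whether they are context-rich or visually distinct, so a list like this is a starting point for manual review rather than a quality score.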

Organic Search and SEO

Direction and scale.

- Traffic composition changes. Some informational and commercial journeys will begin with an image instead of a keyword. That can reduce raw text query volume for "what is this plant" or "mid century modern lamp," but increase visibility for pages with strong images and schema that AI Mode can cite.

- Higher value for image SEO. Filenames, alt text, surrounding copy, and structured data that clearly associate each image with a product, style, or concept will matter more, since Gemini uses both visual and text cues to interpret and fetch results.

- Greater role for scene-rich content. Guides, galleries, and UGC that show full spaces or outfits can feed many object-level matches. One blog post featuring a styled living room might generate visibility for sofas, rugs, lamps, and side tables separately.

Who benefits and who loses.

- Beneficiaries:

  - sites with strong image metadata and structured data (Product, Article, ImageObject schema)

  - brands investing in original photography that clearly shows their products in context

- Potential losers:

  - thin affiliate pages that reuse stock photos and lack clear object-attribute markup

  - sites heavily dependent on long-tail text queries such as "what is the plant in this photo" that may be partly absorbed into visual AI answers

Actions and watchpoints.

- Treat every lifestyle image as a possible query source: ensure products in that image are linkable and marked up, and that copy explicitly mentions items present in the scene.

- Monitor image search impressions, AI Mode citations, and any reporting for Lens or other visual surfaces that appears in Search Console as it rolls out.

- Re-balance content calendars to include more scene-based visual content tied to clear product and informational entities.
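One way to make the products in a lifestyle image explicit is schema.org markup that ties the image to each item it shows. The sketch below builds JSON-LD for an `ImageObject` whose `about` property lists `Product` entries. The URLs, names, and the `scene_jsonld` helper are hypothetical. The source does not say which markup Google's visual systems read, so treat this as a reasonable structuring choice rather than a documented ranking input.

```python
import json


def scene_jsonld(image_url, caption, products):
    """Build schema.org JSON-LD linking one scene image to the products it shows."""
    return {
        "@context": "https://schema.org",
        "@type": "ImageObject",
        "contentUrl": image_url,
        "caption": caption,
        "about": [
            {"@type": "Product", "name": p["name"], "url": p["url"]}
            for p in products
        ],
    }


# Hypothetical scene and product data
markup = scene_jsonld(
    "https://example.com/img/living-room.jpg",
    "Mid-century modern living room with walnut floor lamp and wool rug",
    [
        {"name": "Walnut floor lamp", "url": "https://example.com/p/walnut-floor-lamp"},
        {"name": "Wool area rug", "url": "https://example.com/p/wool-area-rug"},
    ],
)
print(json.dumps(markup, indent=2))
```

The printed JSON would go in a `<script type="application/ld+json">` element on the page that hosts the image, alongside copy that names the same items.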

Creative, Social, and Merchandising

Direction and scale.

- Full-scene composition matters more. Because Google can parse multiple items in one shot, creative that shows cohesive outfits, tablescapes, or rooms can generate more entry points than single-product close-ups.

- Social content as search entry. Users already screenshot social posts to search for products. The better your brand visuals stand out and embed identifiable objects, the more likely those objects are matched in visual search sessions.

Actions and watchpoints.

- Encourage creative teams to design photography that supports object segmentation, with clear edges, limited clutter, and distinctive styling for each item.

- Coordinate social and site assets. If an outfit appears on social platforms, ensure the same look (or a close variant) exists on your site with tagged products, so visual matches lead to a coherent landing experience.

Analytics and Operations

Direction and scale.

- Attribution spread across more steps. One Circle to Search action might yield several product page visits plus a later brand search. This can blur channel boundaries (Organic vs Paid vs Social referral) and complicate ROAS and CPA measurement.

- Less predictable query reports. AI Mode fan-out sub-queries may not be exposed as traditional keywords, making it harder to map spend and traffic back to user language.

Actions and watchpoints.

- Track landing pages likely to be recipients of visual traffic, such as outfit pages, lookbooks, and galleries, and monitor their device mix and entrance paths.

- Prepare to lean more on aggregate metrics (for example, revenue per session from Android search) rather than pure keyword reporting, especially if AI Mode abstracts query strings.
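The aggregate view suggested above can be computed without any keyword data. This sketch assumes session rows exported from an analytics tool with `device`, `page_type`, and `revenue` fields. The field names and sample values are hypothetical.

```python
from collections import defaultdict


def revenue_per_session(sessions):
    """Average revenue per session, grouped by (device, landing page type)."""
    totals = defaultdict(lambda: [0, 0.0])  # key -> [session count, revenue]
    for s in sessions:
        key = (s["device"], s["page_type"])
        totals[key][0] += 1
        totals[key][1] += s["revenue"]
    return {key: revenue / count for key, (count, revenue) in totals.items()}


# Hypothetical session export
sessions = [
    {"device": "android", "page_type": "lookbook", "revenue": 40.0},
    {"device": "android", "page_type": "lookbook", "revenue": 0.0},
    {"device": "ios", "page_type": "product", "revenue": 25.0},
]

rps = revenue_per_session(sessions)
print(rps)
```

Tracking this figure over time for outfit pages, lookbooks, and galleries gives a trend line that still works if fan-out sub-queries are never exposed as query strings.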

Scenarios and probabilities

These scenarios are model-based and explicitly speculative, using current AI Overviews and visual search rollouts as precedent. [S3][S4]

Base case: gradual visual-first adoption (Likely).

- Share of mobile shopping and inspiration sessions that start with images rises steadily but does not replace text.

- Multi-object fan-out becomes common for fashion, home, and hobby niches.

- Ads and organic placements slowly integrate into AI Mode visual answers in more regions and languages.

- Impact: 5 to 15% of commercial discovery in certain verticals originates from visual-first paths within 2 to 3 years, with higher share on Android.

Upside case: visual search becomes mainstream for style and décor (Possible).

- Circle to Search and Lens see much higher usage, driven by OEM integrations and user education.

- For fashion and home, starting from a screenshot becomes as common as typing a product name.

- Google introduces richer ad formats specifically tied to scene decomposition, such as sponsored "complete this look" units.

- Impact: brands with strong visual assets and Merchant Center setups win disproportionate share; CPCs on visually driven placements rise due to higher purchase intent.

Downside case: limited behavioral change but more zero-click answers (Edge).

- Users try multi-object visual search but default back to text for many tasks; fan-out is used mainly for niche questions.

- AI Mode increasingly answers "what is this?" and "explain this scene" without many clicks, especially for educational queries.

- Impact: some informational traffic erodes, but commercial impact is modest; visual AI behaves mostly as an assistant layer rather than a major commerce driver.

Risks, unknowns, limitations

- Ad integration is not described. The article and AI Mode PDF do not state how Shopping ads or sponsored results appear within multi-object visual answers. Any expectations about monetization or CPC impact are speculative. [S1][S2]

- Usage and adoption data are missing. There are no fresh numbers on how many searches involve Lens or AI Mode, or how that splits by region, device, and category. Past statements only confirm "billions of Lens queries per month," not detailed breakdowns. [S4]

- Control for marketers is limited. There is no direct interface yet for specifying how assets are used within fan-out visual answers, beyond standard product feeds and SEO. That may change, but timing is unclear.

- Attribution and reporting for AI Mode are not transparent. Current Search Console and Google Ads reporting around AI Overviews are still evolving; similar lag is likely for multi-object visual search.

- This analysis relies on public information from 2022 to 2025 plus the March 2026 article. Any internal experiments or upcoming policy changes inside Google that are not documented publicly could alter the trajectory.

Evidence that would challenge this analysis:

- Google explicitly stating that multi-object AI Mode will not show any commercial results or ads.

- Data showing that visual search usage remains niche even after two or more years of wide Android integration.

- Strong evidence that users reject multi-object visuals for shopping and stick predominantly to single-object or text behavior.


Sources

- [S1] Google / Molly McHugh-Johnson, 2026, blog post - "Ask a Techspert: How does AI understand my visual searches?"

- [S2] Google, 2025, product PDF - "About AI Overviews and AI Mode in Search" (linked from [S1]).

- [S3] Google, 2023, blog post - "Supercharging Search with generative AI" (introduction of Search Generative Experience / AI Overviews).

- [S4] Google, 2022–2023, public talks and posts - statements that Google Lens handles billions of queries per month, indicating substantial visual search usage prior to the 2026 update.
