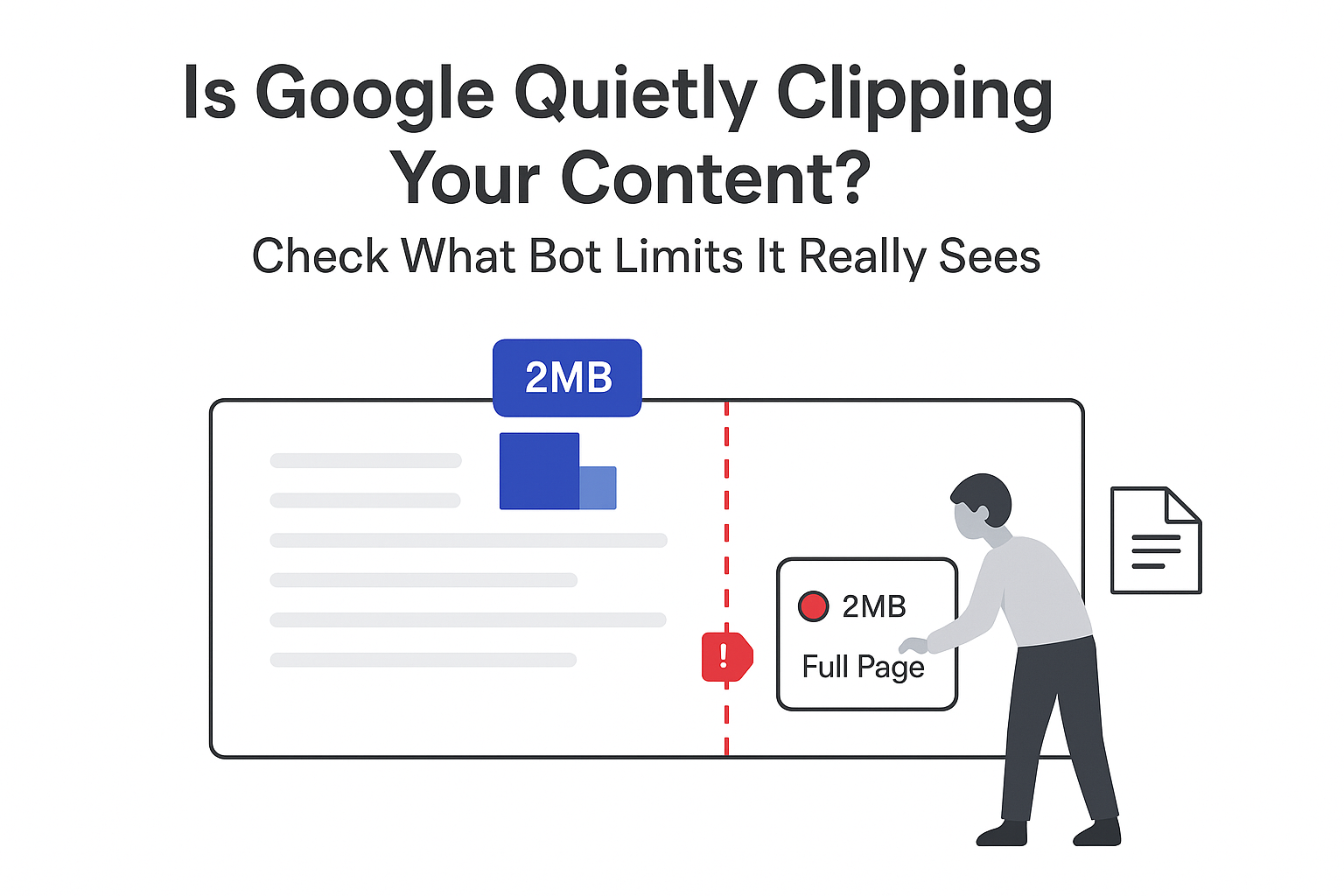

In a recent episode of Google's Search Off The Record podcast, Gary Illyes and Martin Splitt shared new details about Googlebot crawl limits. They outlined current file size thresholds, internal configuration options, and how those limits protect Google's infrastructure. Their comments provide additional factual detail beyond what appears in Google's public crawling documentation.

Googlebot crawl limits

Illyes said that any Google crawler starts with a default 15-megabyte content limit set at the infrastructure level. During a fetch, an internal counter tracks the bytes received and stops accepting data once 15 megabytes have been downloaded. At that point, the system signals the server that no more data is needed.
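A byte counter of the kind Illyes describes can be sketched as a loop that reads a response body in chunks, adds each chunk's length to a running total, and stops once a limit is reached. This is a minimal illustration under stated assumptions, not Google's code: the function name `fetch_with_limit`, the 64 KiB chunk size, and treating a megabyte as 1,048,576 bytes are all hypothetical, and closing the stream stands in for however the real system tells the server to stop sending.

```python
import io
from typing import BinaryIO, List, Tuple

# Assumption: a "megabyte" is treated as 1024 * 1024 bytes in this sketch.
MB = 1024 * 1024
DEFAULT_LIMIT = 15 * MB  # infrastructure-level default described in the podcast


def fetch_with_limit(stream: BinaryIO, limit: int = DEFAULT_LIMIT,
                     chunk_size: int = 64 * 1024) -> Tuple[bytes, bool]:
    """Read at most `limit` bytes from `stream` (hypothetical sketch).

    Returns the bytes kept and a flag saying whether the body was cut off.
    """
    received = 0               # running byte counter
    chunks: List[bytes] = []
    while received < limit:
        # Never request more than the remaining budget.
        chunk = stream.read(min(chunk_size, limit - received))
        if not chunk:          # body ended before the limit was reached
            break
        chunks.append(chunk)
        received += len(chunk)
    # If the budget is used up, peek one extra byte to see if data remained.
    truncated = received >= limit and bool(stream.read(1))
    # Stand-in for signaling the server that no more data is needed.
    stream.close()
    return b"".join(chunks), truncated
```

Reading one extra byte after the budget is spent is only a cheap way for the sketch to report whether anything was cut off; a real fetcher could instead rely on headers or simply stop reading.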

He added that individual product teams can override this default for their own crawlers. For Google Search, Illyes confirmed that the team currently configures Googlebot with a 2-megabyte limit, overriding the 15-megabyte default. Internal teams regularly adjust these settings when specific tasks require different behavior.
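The split between an infrastructure default and a team-level override amounts to a lookup with a fallback. The sketch below is hypothetical: the client name `googlebot-search` and the dictionary-based `CLIENT_OVERRIDES` table are assumptions, and only the 15 MB default and the 2 MB Search value come from the podcast.

```python
from typing import Dict, Optional

MB = 1024 * 1024  # assumption: binary megabytes
INFRA_DEFAULT_LIMIT = 15 * MB  # default set at the infrastructure level

# Hypothetical per-client overrides; only the Search figure comes from the podcast.
CLIENT_OVERRIDES: Dict[str, int] = {
    "googlebot-search": 2 * MB,
}


def effective_limit(client: str,
                    overrides: Optional[Dict[str, int]] = None) -> int:
    """Return the fetch limit for `client`, falling back to the default."""
    table = CLIENT_OVERRIDES if overrides is None else overrides
    return table.get(client, INFRA_DEFAULT_LIMIT)
```

Any client without an entry inherits the infrastructure default, which mirrors the "default unless a team overrides it" behavior described above.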

Crawling infrastructure configuration and protection

Illyes explained that crawl limits are designed in part to protect Google's internal systems from heavy processing loads. Very large documents increase conversion and processing overhead during indexing, raising the risk that a single resource could place disproportionate strain on infrastructure.

He noted that crawling costs are not only about network bandwidth or avoiding strain on external servers. Internal processing capacity and infrastructure safety also shape how Google sets file size and truncation rules across its crawlers, with project teams tuning the values as needed.

As an example, Illyes said the default 15-megabyte limit is raised for some PDFs. He mentioned a truncation threshold of roughly 64 megabytes in certain cases, tied to the size of some HTTP standards documents that are exported as PDFs.

For HTML, Illyes said Google avoids fetching extremely large single-page documents, such as a 14-megabyte HTML standard page. Instead, Googlebot requests smaller documentation pages focused on specific features. According to Illyes, this approach surfaces more useful content while keeping processing costs manageable.

Splitt described Google's crawling setup as a shared service used by web search and other internal clients. He said this service supports extensive configuration options instead of one fixed Googlebot behavior, and that settings such as fetch limits can be adjusted at the request level when necessary.
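Splitt's description of a shared, configurable fetching service suggests settings layered from general to specific. The sketch below assumes an order in which a request-level value wins over a content-type value, which wins over a client-wide value, which wins over the infrastructure default. That precedence, the `ClientConfig` and `resolve_limit` names, and pairing the 2 MB and roughly 64 MB figures in one client are illustrative assumptions, not details Google confirmed.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

MB = 1024 * 1024  # assumption: binary megabytes
INFRA_DEFAULT_LIMIT = 15 * MB


@dataclass
class ClientConfig:
    """Hypothetical settings one internal client registers with the fetcher."""
    name: str
    max_bytes: Optional[int] = None  # client-wide override, if any
    per_type: Dict[str, int] = field(default_factory=dict)  # e.g. PDFs


def resolve_limit(client: ClientConfig, content_type: str,
                  request_max_bytes: Optional[int] = None) -> int:
    """Pick the most specific limit (assumed precedence, see lead-in).

    `content_type` would come from response headers, which arrive
    before the body, so the limit can be chosen before reading it.
    """
    if request_max_bytes is not None:        # request-level override
        return request_max_bytes
    if content_type in client.per_type:      # content-type override
        return client.per_type[content_type]
    if client.max_bytes is not None:         # client-wide override
        return client.max_bytes
    return INFRA_DEFAULT_LIMIT               # infrastructure default
```

For example, `resolve_limit(ClientConfig("search", max_bytes=2 * MB, per_type={"application/pdf": 64 * MB}), "application/pdf")` returns the PDF value, while an HTML response for the same client falls back to the client-wide 2 MB.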

Background context and official documentation

Google publicly documents Googlebot behavior on its Search Central site. The current Googlebot documentation states that Googlebot generally processes only the first 15 megabytes of any fetched resource, and that content beyond that point is not considered for indexing.
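For site owners, the practical question is how much of a page would fall past a given cutoff. The helper below is a hypothetical sketch that subtracts each limit from a body size; it treats a megabyte as 1,048,576 bytes and does not model compression or how Google measures size internally. The limit names are made up for illustration.

```python
from typing import Dict

MB = 1024 * 1024  # assumption: binary megabytes

# Figures from this article; which one applies to a given URL is not
# something this sketch can determine.
LIMITS: Dict[str, int] = {
    "documented_15mb": 15 * MB,
    "podcast_search_2mb": 2 * MB,
}


def bytes_beyond_limits(body_size: int) -> Dict[str, int]:
    """For each limit, return how many bytes would fall past the cutoff."""
    return {name: max(0, body_size - limit) for name, limit in LIMITS.items()}
```

By this arithmetic, the 14-megabyte HTML standard page mentioned earlier would fit within the documented 15 MB limit but would leave about 12 MB beyond a 2 MB cutoff.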

On the podcast, Illyes noted that other Google crawlers use different default and override settings. Although he has not worked directly on those crawlers, he expects configurations to vary across projects and content types. Splitt similarly indicated that settings may differ for formats such as images, PDFs, and HTML pages.

Source citations

- Google Search Central - Googlebot documentation

- Google Search Central - Search Off The Record podcast segment on crawling infrastructure and limits
