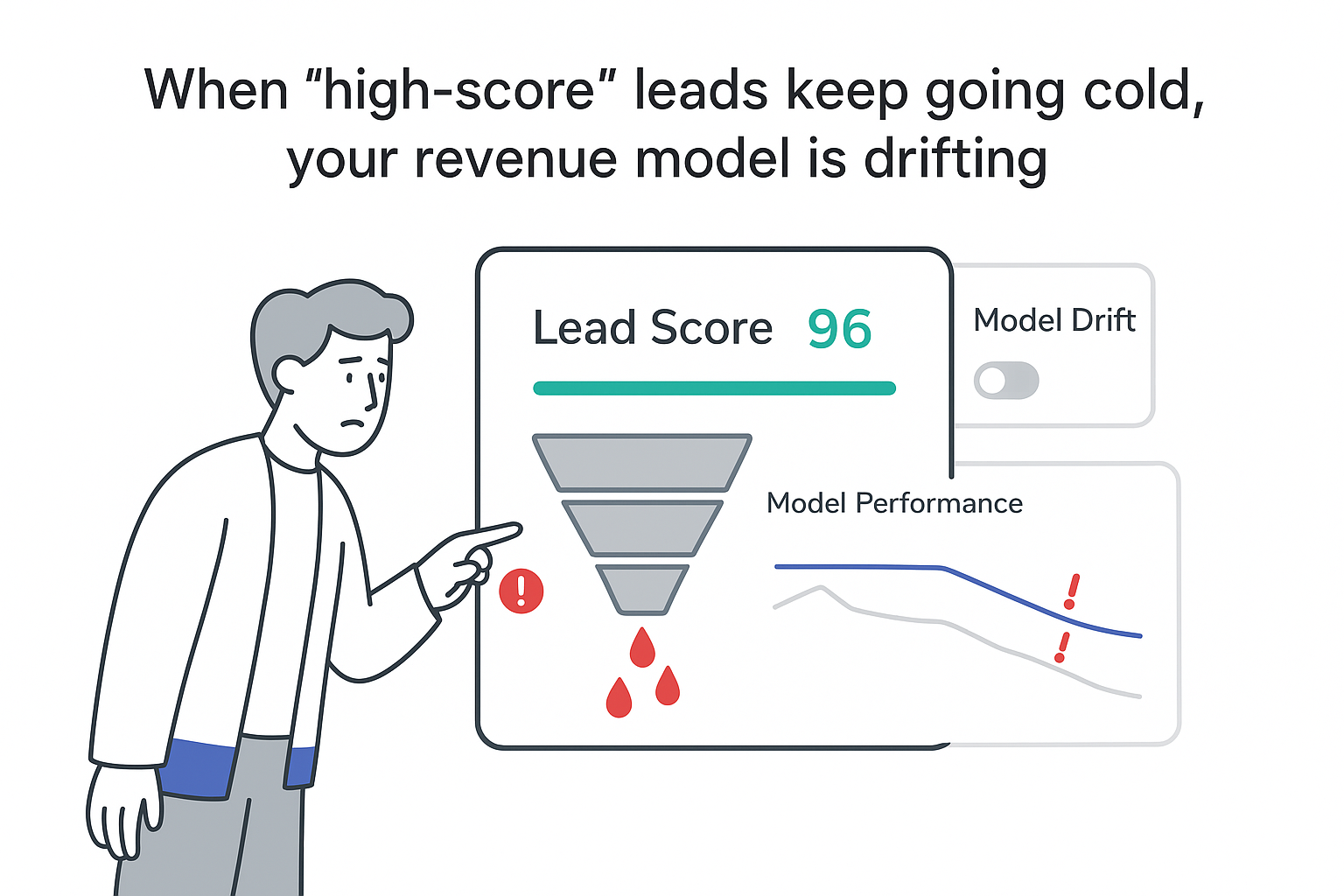

Machine learning models rarely fail in a loud, obvious way. In my experience, they decay slowly. Lead scores look slightly “off,” forecasts feel less trustworthy, support queues get messy. The dashboards still update, but something in the business no longer matches what the model seems to believe.

That slow drift is often not a people problem or a sales problem. It’s a model problem called concept drift. When I’m running a B2B service company that leans on data for lead scoring, churn prediction, or revenue forecasting, concept drift quietly influences how expensive the pipeline becomes and how confident the team feels making decisions. Researchers have also documented this broader pattern of model degradation over time.

Why I Care About Concept Drift

As a CEO or founder, I usually only “meet” machine learning in slide decks or monthly reports. Even so, models often sit inside core go-to-market workflows:

- A lead scoring model that tells SDRs who to call first

- A revenue forecasting model that shapes hiring and cash decisions

- A fraud or abuse detection model that blocks bad signups or free trials

- A support routing model that pushes tickets to the right team

All of these models assume that the world today looks enough like the world they saw during training. When that assumption stops being true, concept drift shows up, and the business impact is real.

For example, if my ICP shifts toward larger accounts but the model still prefers the patterns of smaller startups, SDR time gets misallocated and CAC rises while close rates slide. If my forecasting model learned from a period of steady growth and I later change pricing or packaging, it may keep predicting smooth lines and push planning decisions in the wrong direction. If I adjust trial rules or expand into a new region, abuse patterns can change and an old model may miss what it used to catch. And when I launch a new product module, support ticket topics shift; routing that used to work can start sending complex issues to the wrong queues, increasing response times for high-value customers.

From a dashboard perspective, nothing dramatic happens at first. Performance metrics slip a little each week. Business KPIs wobble. By the time the problem is unmistakable, I may already have spent a quarter acting on low-quality signals.

Some early warning signs that often make me suspect concept drift are:

- Conversion rates drop for segments that used to be steady

- A model still reports “good” overall accuracy, but specific industries, regions, or deal sizes suddenly perform worse

- The share of leads in high-score bands grows or shrinks quickly without a matching change in revenue

- Input features show strange patterns (for example, a CRM field that used to have clear peaks becomes almost flat)

- Manual overrides increase (for example, sales ignores scores or support re-routes tickets by hand)

Concept drift is that slow bend in the line: not a crash, but a steady move away from the results I originally trusted. If you’re using predictive scoring in acquisition, this connects directly to how you manage predictive lead value bidding and prioritization downstream.

What Concept Drift Is (and What It Isn’t)

Under the hood, a predictive model learns a relationship between input features x and a target y. In shorthand, it learns P(y|x), which I read as: “given this data x, how likely is outcome y?”

Concept drift happens when that relationship changes in the real world, while the model stays frozen in its old view. The model isn’t “broken” as software; it’s accurately describing an earlier version of my market, my process, or my customers.

I also separate concept drift from two related issues that can look similar:

Data drift: the distribution of the inputs changes (P(x)). A CRM field starts using new values after a process change, or a new channel begins sending a different mix of leads.

Label drift: the distribution of the target changes (P(y)). I redefine what counts as “qualified,” or finance changes what counts as churn.

Concept drift is about the mapping itself. Even if the same kinds of leads arrive and I keep the same definition of success, the probability that those leads buy (or churn) can change.
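The three definitions can be written side by side. Here t and t′ are two time windows and P_t is the joint distribution of features and outcomes in window t (notation introduced for this sketch, with x treated as discrete so the sum applies):

$$
P_t(x, y) = P_t(y \mid x)\,P_t(x), \qquad P_t(y) = \sum_x P_t(y \mid x)\,P_t(x)
$$

$$
\text{data drift: } P_t(x) \neq P_{t'}(x), \qquad \text{label drift: } P_t(y) \neq P_{t'}(y), \qquad \text{concept drift: } P_t(y \mid x) \neq P_{t'}(y \mid x)
$$

The second identity is one reason the three tend to travel together: a change in P(x) or in P(y|x) usually moves P(y) as well. It also shows how concept drift can hide behind a steady top-line rate, if one segment’s conversion rises while another’s falls by a balancing amount.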

A simple analogy: if I think of a city map loaded into GPS, data drift is like new roads being built, label drift is like renaming districts, and concept drift is when the typical routes and “what works” change. The map still draws lines, but the guidance no longer matches how people actually move.

A compact comparison keeps the terms straight:

| Type | What changes | Simple example | Typical risk |
|---|---|---|---|
| Data drift | Input data distribution P(x) | New lead source with different firmographics | Model sees unfamiliar patterns |
| Label drift | Target distribution P(y) | New definition of “qualified” opportunity | Metrics look worse or better without real change |
| Concept drift | Relationship P(y\|x) | Same lead profile now converts at a different rate | Model decisions become wrong in the real world |

In practice, I often see these show up together, but concept drift is what directly breaks decision quality.
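To tell the three apart in practice, one option is to compute each quantity in two time windows and see which one moved. The sketch below assumes a single categorical feature (a hypothetical `segment`) stands in for x and uses a fixed tolerance `tol` instead of a statistical test, so treat it as an illustration of the idea rather than a production detector:

```python
from collections import Counter


def window_stats(records):
    """Summarize one time window of (segment, converted) pairs.

    Returns P(x) as segment shares, P(y) as the overall conversion rate,
    and P(y|x) as the conversion rate within each segment.
    """
    n = len(records)
    seg_counts = Counter(seg for seg, _ in records)
    won_counts = Counter(seg for seg, converted in records if converted)
    p_x = {seg: c / n for seg, c in seg_counts.items()}
    p_y = sum(won_counts.values()) / n
    p_y_given_x = {seg: won_counts[seg] / c for seg, c in seg_counts.items()}
    return p_x, p_y, p_y_given_x


def compare_windows(before, after, tol=0.05):
    """Flag which quantity moved by more than tol between two windows."""
    px0, py0, pyx0 = window_stats(before)
    px1, py1, pyx1 = window_stats(after)
    segments = set(px0) | set(px1)
    shared = set(pyx0) & set(pyx1)  # P(y|x) is only comparable where both windows have data
    return {
        "data_drift": any(abs(px0.get(s, 0.0) - px1.get(s, 0.0)) > tol for s in segments),
        "label_drift": abs(py0 - py1) > tol,
        "concept_drift": any(abs(pyx0[s] - pyx1[s]) > tol for s in shared),
    }


# Same segment mix and same overall conversion, but the two segments swap places.
before = [("enterprise", i < 10) for i in range(50)] + [("smb", i < 20) for i in range(50)]
after = [("enterprise", i < 25) for i in range(50)] + [("smb", i < 5) for i in range(50)]
print(compare_windows(before, after))
# {'data_drift': False, 'label_drift': False, 'concept_drift': True}
```

The example is constructed so that the segment mix and the overall conversion rate are identical while both segments move, which is exactly the case a top-line dashboard would miss.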

Different Types of Concept Drift

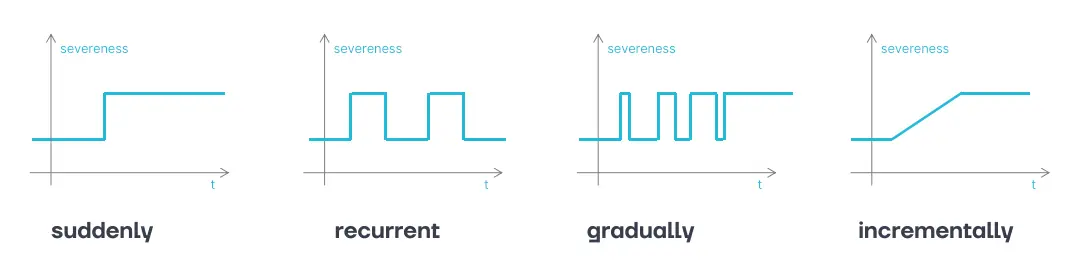

Concept drift doesn’t always show up in the same pattern. The pattern matters because it changes how I respond.

Sudden drift is a sharp change that sticks. A pricing model can break overnight if I move from license-based to usage-based pricing. Behavior can also shift quickly after policy changes, like tightening trial rules.

Gradual drift is a transition period where old and new patterns coexist. If I’m moving upmarket, smaller deals may still arrive, but the growing enterprise mix behaves differently. The same can happen when I roll out a new qualification framework and reps adopt it unevenly.

Incremental drift is many tiny shifts that accumulate until the model is clearly behind reality. Market saturation, subtle changes in buyer maturity, or slow shifts in channel mix can all create this pattern.

Recurring (seasonal) drift repeats on a calendar cycle. Budget timing, renewal clustering, or industry-specific seasons can change conversion and support patterns in predictable ways.

Blips are short-lived distortions that shouldn’t permanently reshape the model, such as a one-off campaign that pulls in an unusual segment or a brief incident that temporarily alters behavior.

When I’m deciding what to do, I try to match the response to the pattern. Seasonal changes usually call for season-aware features and schedules; sudden step-changes often justify a more aggressive refresh of training data. Slow one-way shifts generally benefit from weighting recent data more heavily. And when I suspect a blip, I avoid overreacting and baking temporary noise into the model.
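For the slow one-way case, weighting recent data more heavily can be as simple as exponential-decay sample weights. This is a minimal sketch; the 90-day half-life is an assumed default rather than a recommendation, and would need tuning to how fast the market actually moves:

```python
def recency_weights(ages_in_days, half_life_days=90.0):
    """Exponential-decay sample weights: a row half_life_days old counts half as much as a new one."""
    if half_life_days <= 0:
        raise ValueError("half_life_days must be positive")
    return [0.5 ** (age / half_life_days) for age in ages_in_days]


print(recency_weights([0, 90, 180]))  # [1.0, 0.5, 0.25]
```

Many scikit-learn estimators accept per-row weights through a `sample_weight` argument to `fit`, so values like these can usually be passed straight in. A shorter half-life reacts faster but effectively trains on fewer rows.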

Effect of Concept Drift

When concept drift creeps in, the visible symptom is worse model performance. The real cost shows up downstream: in time, money, and trust.

Lead scoring waste: If a model used to identify “high intent” leads with 70% precision and later drops to 40%, the team doesn’t just lose accuracy; it loses hours. If each rep spends two hours per day on high-scored leads, the time spent on poor opportunities doubles, from about 36 minutes to about 72 minutes per rep per day. Across a ten-person team, that extra 36 minutes per rep adds up to about 30 hours a week, close to a full workweek lost every week.
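The arithmetic behind this estimate is easy to reproduce. The sketch assumes time on high-scored leads splits in proportion to precision, so (1 - precision) of it goes to leads that don’t pan out, and assumes a five-day week:

```python
def extra_wasted_hours(precision_before, precision_after, hours_per_rep_per_day, reps, days_per_week=5):
    """Extra weekly team hours spent on poor opportunities after precision drops.

    Assumes (1 - precision) of the time on high-scored leads is spent on leads
    that do not convert.
    """
    wasted_before = (1 - precision_before) * hours_per_rep_per_day
    wasted_after = (1 - precision_after) * hours_per_rep_per_day
    return (wasted_after - wasted_before) * reps * days_per_week


print(round(extra_wasted_hours(0.70, 0.40, 2, 10)))  # 30
```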

Misleading revenue forecasts: A forecasting model trained before a major pricing or product change can keep projecting the old shape of growth. If actuals land 20% below forecast for multiple quarters, planning starts to feel unstable, not because the team forgot how to execute, but because the underlying signal is wrong.

Skewed decisions by segment: Drift can quietly “flatten” emerging opportunities. If the model underestimates win rates for a new industry segment (often because it has limited history), leadership may steer away from it. The organization then misses the chance to learn fast in a segment that could be profitable. This is also where tighter feedback loops, like AI-based win-loss analysis, can help you see whether the market changed or the model did.

Automation failures: Routing and triage models fail in ways that look like process problems. If tickets about a new feature get misclassified, priority customers sit in generic queues. The team responds by bypassing automation and assigning manually, which removes the operational benefit the model was meant to provide. (Related: AI triage of inbound inquiries only works if the routing logic stays aligned with reality.)

Governance and audit pressure: In regulated or audit-sensitive environments, it’s not enough for a model to work “most of the time.” If model behavior drifts without visibility, I’m left with weaker explanations for why decisions looked the way they did across time. If you operate in or sell into markets affected by the European AI Act, this “prove it over time” requirement becomes more than a best practice.

The softer effect, and often the most expensive one, is loss of trust. Once leaders and frontline teams feel scores and forecasts are unreliable, they fall back to spreadsheets, gut calls, and shadow systems. Manual overrides rise, internal debate grows, and technical teams spend more time defending outputs than improving them. Concept drift doesn’t just hurt accuracy; it adds operational friction.

Where Concept Drift Comes From

In B2B service businesses, concept drift usually comes from change, the kind of change I actually want. Growth, better processes, new products, and channel experimentation all move the target.

I typically see drift emerge from a few sources. Markets shift as competitors enter, customers expand into new geographies, or macro conditions change. Products evolve through new modules, onboarding changes, or packaging and pricing updates. Channel and campaign mix changes who enters the funnel and what “normal” looks like. Data instrumentation shifts (CRM field changes, tracking updates, form changes) can alter inputs and sometimes outcomes. And internal policy and process changes (sales stage definitions, qualification rules, churn definitions) can move the goalposts even when customer behavior stays steady.

Practically, whenever I make changes like pricing updates, a new product line, major channel shifts, territory reshuffles, or changes to definitions like “qualified” or “churned,” I assume my models are now looking at a new world with old eyes unless I prove otherwise.

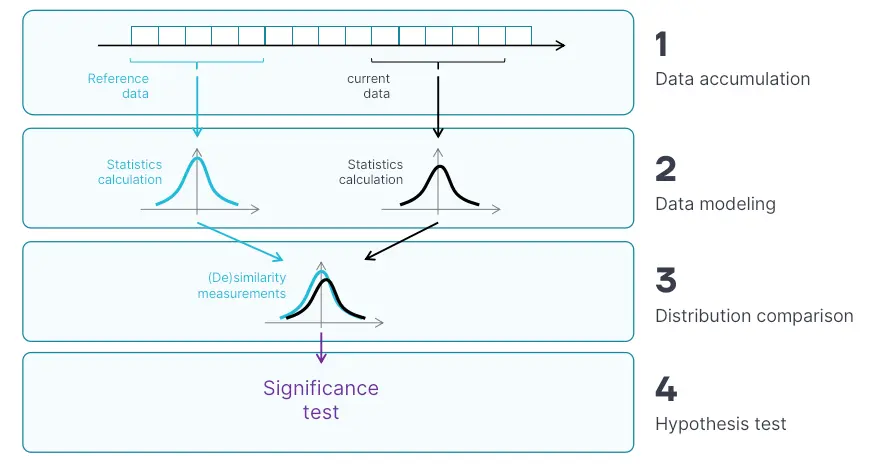

How I Detect Concept Drift

Detecting concept drift sounds technical, but I find the workflow can be very practical if I anchor it to the business.

1) Define a baseline. I pick a stable historical period and track two sets of metrics: model metrics (accuracy, AUC, precision/recall, calibration) and business metrics (conversion rate, pipeline created, revenue per lead, time-to-resolution). The baseline matters because “normal” often isn’t a single number.

2) Log predictions in a way I can audit later. For every prediction, I store a timestamp, the input features used, the prediction output, and a stable identifier that can be joined to the eventual outcome (for example, a lead ID that later links to closed-won/lost).

3) Watch feature and prediction distributions. Even before labels arrive, I monitor whether key inputs and scores are behaving differently. If 80% of leads suddenly score above 0.9, I treat that as a signal worth investigating, not a victory.

4) Recompute performance when labels arrive (and slice it). As outcomes come in, I compute performance on rolling windows and I break it down by segment: industry, region, deal size, channel. Many drift problems hide in segments while the global metric looks fine.

5) Set clear thresholds and route the signal to owners. I decide what changes are meaningful enough to investigate (for example, a sustained drop in a segment’s win rate, or a persistent shift in a key feature). I also make sure the alert reaches both technical and business owners; otherwise it becomes noise.

6) Triage before I retrain. When a signal fires, I first check for data issues (missing fields, pipeline breaks, tracking changes). If the data is clean, I treat it as real drift and decide whether the right response is retraining, feature changes, or temporarily simplifying the decision logic until the model is updated. In practice, a lot of “drift” investigations end up being data hygiene problems, which is why I keep a close eye on CRM hygiene for marketing performance.
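Step 4 above (rolling, sliced performance) can be sketched in a few lines once predictions and outcomes are joined. The row keys (`window`, `segment`, `score`, `won`), the 0.5 cutoff for a “high” score, and the `min_count` guard are all assumptions for illustration:

```python
from collections import defaultdict


def sliced_precision(rows, threshold=0.5, min_count=1):
    """Precision of high-score predictions for each (window, segment) slice.

    rows: dicts with hypothetical keys 'window', 'segment', 'score', and 'won'
    (True/False once the deal closes, None while it is still open).
    Slices with fewer than min_count flagged-and-closed leads are dropped as too noisy.
    """
    flagged = defaultdict(int)
    hits = defaultdict(int)
    for r in rows:
        if r["won"] is None or r["score"] < threshold:
            continue  # outcome not known yet, or the model did not flag this lead
        key = (r["window"], r["segment"])
        flagged[key] += 1
        hits[key] += int(r["won"])
    return {key: hits[key] / n for key, n in flagged.items() if n >= min_count}


rows = [
    {"window": "w1", "segment": "fintech", "score": 0.9, "won": True},
    {"window": "w1", "segment": "fintech", "score": 0.8, "won": True},
    {"window": "w2", "segment": "fintech", "score": 0.9, "won": False},
    {"window": "w2", "segment": "fintech", "score": 0.7, "won": True},
    {"window": "w2", "segment": "fintech", "score": 0.2, "won": True},   # low score: skipped
    {"window": "w2", "segment": "health", "score": 0.9, "won": None},    # still open: skipped
]
print(sliced_precision(rows))  # {('w1', 'fintech'): 1.0, ('w2', 'fintech'): 0.5}
```

Small slices are noisy, so in practice `min_count` would be set high enough that a single lost deal doesn’t read as drift.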

How often I check depends on how fast the underlying process moves. Lead scoring and abuse signals can warrant frequent checks; forecasting and churn often move slower but still benefit from regular review, especially after business changes.
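Steps 3 and 5 can be combined into a simple label-free check: compare the share of leads in the top score band against a baseline window and alert when it moves more than a set amount. The 0.9 cutoff and 15-point threshold below are placeholders, and routing the message to technical and business owners is left to whatever alerting the team already uses:

```python
def high_score_share(scores, cutoff=0.9):
    """Fraction of predictions scoring strictly above cutoff."""
    if not scores:
        raise ValueError("need at least one score")
    return sum(s > cutoff for s in scores) / len(scores)


def score_band_alert(baseline_scores, current_scores, cutoff=0.9, max_abs_change=0.15):
    """Return an alert message if the high-score share moved more than max_abs_change, else None."""
    base = high_score_share(baseline_scores, cutoff)
    cur = high_score_share(current_scores, cutoff)
    if abs(cur - base) > max_abs_change:
        return (f"Share of scores above {cutoff} moved from {base:.0%} to {cur:.0%}; "
                "check data quality before acting on scores")
    return None


baseline = [0.1] * 80 + [0.95] * 20  # 20% of leads score above 0.9
current = [0.95] * 80 + [0.1] * 20   # suddenly 80% do
print(score_band_alert(baseline, current))
```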

Concept Drift Detector Approaches

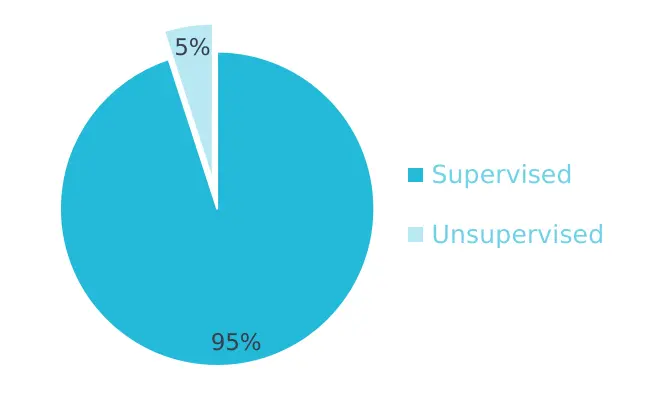

When I talk about “drift detection,” I usually mean one of three families. They differ mainly by whether they require labels, what they monitor, and how quickly they can react. For a deeper taxonomy and survey-style overview, I often point people to Gemaque et al.

| Method family | Needs labels? | Watches mainly | Strengths | Limitations |
|---|---|---|---|---|
| Supervised | Yes | Prediction errors | Tied directly to business outcomes | Requires timely, stable labels |
| Unsupervised | No | Input or prediction changes | Works even when labels come in slowly | May flag changes that don’t hurt results |
| Hybrid | Sometimes | Mix of both | Balances coverage and relevance | More complex to design and maintain |

Supervised Methods

Supervised approaches treat drift as “the model’s errors changed.” I monitor standard metrics over time (including by segment), because segment-specific failure is often what hurts the business first. In more streaming-like settings, I can track error rates as a sequence and look for statistically meaningful shifts; related techniques also monitor whether a loss value (like log loss) changes enough to suggest the model is no longer aligned with reality.
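One well-known way to track error rates as a sequence is the Drift Detection Method (DDM) of Gama et al., which treats each prediction as a right/wrong trial and flags drift when the running error rate climbs well above its best observed level. The class below is a simplified sketch of that idea, not a reference implementation; the 30-sample warm-up and the 2-sigma/3-sigma warning and drift levels follow commonly described defaults but should be treated as tunable assumptions:

```python
import math


class DDM:
    """Simplified Drift Detection Method over a stream of prediction errors.

    Tracks the running error rate p and its standard deviation s, remembers the
    point where p + s was lowest, and signals 'warning' or 'drift' when p + s
    rises warn_k or drift_k standard deviations above that minimum.
    """

    def __init__(self, min_samples=30, warn_k=2.0, drift_k=3.0):
        self.min_samples = min_samples
        self.warn_k = warn_k
        self.drift_k = drift_k
        self.reset()

    def reset(self):
        self.n = 0
        self.errors = 0
        self.p_min = math.inf
        self.s_min = math.inf

    def update(self, is_error):
        self.n += 1
        self.errors += int(is_error)
        p = self.errors / self.n
        s = math.sqrt(p * (1 - p) / self.n)
        if self.n < self.min_samples:
            return "stable"  # too few observations to judge
        if p > 0 and p + s < self.p_min + self.s_min:
            self.p_min, self.s_min = p, s  # new best operating point
        if p + s >= self.p_min + self.drift_k * self.s_min:
            self.reset()  # start a fresh baseline after a confirmed drift
            return "drift"
        if p + s >= self.p_min + self.warn_k * self.s_min:
            return "warning"
        return "stable"


# 10% errors for 1,000 predictions, then the error rate jumps to 50%.
stream = [i % 10 == 0 for i in range(1000)] + [i % 2 == 0 for i in range(1000, 1200)]
detector = DDM()
states = [detector.update(err) for err in stream]
print(states.index("drift"))  # first drift signal lands shortly after index 1000
```

In a B2B funnel each trial only exists once an outcome is known, so a detector like this fits faster-feedback models (routing, abuse) more naturally than long-cycle lead scoring.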

These approaches work best when outcomes arrive on a predictable timeline and label definitions stay stable. They’re less useful when deal cycles are long, outcomes are ambiguous, or internal definitions change frequently.

Unsupervised Methods

Unsupervised approaches look for shifts in inputs or outputs without waiting for labels. I can compare distributions of numeric and categorical features across time windows, monitor representation drift in higher-dimensional settings, watch reconstruction error for models that learn “normal,” or track whether predicted probabilities change shape over time. I can also apply change-point detection to find structural breaks in feature or score time series.
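For comparing numeric feature or score distributions across windows, one common choice is the Population Stability Index (PSI), which sums (current share - baseline share) × ln(current share / baseline share) over bins. This is a sketch with hand-picked bin edges and a small floor for empty bins, both assumptions; quantile bins taken from the baseline window are a frequent alternative:

```python
import bisect
import math


def psi(expected, actual, edges, floor=1e-6):
    """Population Stability Index between a baseline sample and a current sample.

    edges: sorted interior bin boundaries, giving len(edges) + 1 bins; values
    below the first edge or above the last fall into the outer bins.
    floor: small share substituted for empty bins so the log stays finite.
    """
    def bin_shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect.bisect_right(edges, v)] += 1
        return [max(c / len(values), floor) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0.25, 0.5, 0.75]
baseline = [0.1] * 50 + [0.6] * 50
current = [0.6] * 50 + [0.9] * 50
print(round(psi(baseline, baseline, edges), 6))  # 0.0
print(psi(baseline, current, edges) > 0.25)      # True
```

A rule of thumb often quoted in credit-risk practice reads PSI below 0.1 as stable and above 0.25 as a significant shift; those cutoffs are conventions rather than guarantees, and seasonality can push PSI up without any damage to decisions.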

These approaches are valuable when labels arrive slowly or incompletely, because they provide early warnings. The trade-off is that not every distribution change matters. Seasonality can look like drift, high-dimensional data will always contain some movement, and a signal still needs human interpretation to connect it to business impact.

Operational Setup for Drift Monitoring

I don’t need a complex program to start; I need a system that makes model aging visible. For me, the essentials are:

- A consistent record of each prediction (inputs, timestamp, output, identifier)

- A reliable way to attach outcomes later (even if delayed)

- A view of performance and drift over time, including segment slices

- A clear ownership path so signals lead to decisions, not debates
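The first two essentials can be as lightweight as a record type and a join. The sketch below assumes a lead-scoring model; the `model_version` field and the in-memory join are illustrative assumptions (in practice this would more likely be a table in the data warehouse):

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class PredictionRecord:
    lead_id: str                    # stable identifier that later joins to the CRM outcome
    model_version: str              # hypothetical field: which model produced the score
    scored_at: datetime             # timestamp of the prediction
    features: dict                  # the exact inputs the model saw
    score: float                    # the model's output
    outcome: Optional[bool] = None  # filled in later; None while the deal is open


def attach_outcomes(records, outcomes):
    """Copy delayed outcomes (lead_id -> won?) onto logged predictions.

    Returns the share of records that found a matching outcome; a drop relative
    to its usual level can hint that a join key or integration broke.
    """
    matched = 0
    for rec in records:
        if rec.lead_id in outcomes:
            rec.outcome = outcomes[rec.lead_id]
            matched += 1
    return matched / len(records) if records else 0.0


now = datetime.now(timezone.utc)
log = [
    PredictionRecord("L-1", "v3", now, {"industry": "saas", "employees": 250}, 0.82),
    PredictionRecord("L-2", "v3", now, {"industry": "fintech", "employees": 40}, 0.35),
]
print(attach_outcomes(log, {"L-1": True}))  # 0.5
```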

I focus less on “perfect detection” and more on repeatable learning: when something changes in the business, how quickly do I see it in model behavior, and how confidently can I separate real drift from data breakage?

If you want an approachable starting point, a tool like Evidently can help you operationalize reporting for data quality, drift, and performance trends without building everything from scratch.

Open Challenges

Even with solid monitoring, concept drift management has real traps.

In B2B, labels are often delayed and incomplete: deals can take months, and some end without clean closure. Feedback loops also matter-the model changes behavior by steering attention, which can bias what outcomes I observe and later train on. Label definitions can shift because operations evolves, and that can look like drift even when customer behavior is stable. Seasonality creates false alarms unless I account for repeating patterns. Many organizations also run multiple models and versions across the funnel, which makes it harder to understand what changed and when.

Finally, drift can be confused with silent pipeline failures: a field stops updating, an integration partially breaks, or a job runs with stale data. The model still produces outputs, but the inputs are no longer what I think they are. This is exactly the kind of scenario covered in data layer specification writing and validation with LLMs, because monitoring only works if the inputs are real.
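Before treating a signal as drift, a basic health check on the inputs catches many silent failures. This hypothetical `field_health` helper flags a field that is mostly missing or has collapsed to a single value; both thresholds are assumptions and need per-field tuning (a field that legitimately holds one value would always trip the distinct-value check):

```python
def field_health(rows, field, max_null_share=0.2, min_distinct=2):
    """Flag a CRM field that is mostly missing or frozen at one value in a batch of rows.

    rows: list of dicts (one per lead); a missing key counts as null.
    Values must be hashable (strings, numbers, dates).
    """
    if not rows:
        return [f"{field}: no rows received"]
    values = [row.get(field) for row in rows]
    null_share = sum(v is None for v in values) / len(values)
    distinct = len({v for v in values if v is not None})
    problems = []
    if null_share > max_null_share:
        problems.append(f"{field}: {null_share:.0%} missing")
    if distinct < min_distinct:
        problems.append(f"{field}: only {distinct} distinct value(s); it may have stopped updating")
    return problems


batch = [{"industry": "saas"}, {"industry": "fintech"}, {"industry": None}, {}]
print(field_health(batch, "industry"))  # ['industry: 50% missing']
```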

When I reduce risk, I tend to rely on a few principles: I compare new model versions against a stable reference set to catch regressions; I introduce changes gradually so I can observe impact before committing; I keep basic records of drift incidents (what changed, what I did, what happened); and I make ownership explicit so decisions to retrain, pause, or roll back don’t stall.
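Comparing a new model version against a stable reference set can be sketched as a simple gate: score the same held-out rows with both versions and reject the candidate if its log loss is meaningfully worse. The `max_worsening` margin is an assumed value, and log loss is one reasonable metric choice among several:

```python
import math


def log_loss(labels, probs, eps=1e-15):
    """Mean binary cross-entropy; probabilities are clipped away from 0 and 1."""
    total = 0.0
    for y, p in zip(labels, probs):
        p = min(max(p, eps), 1 - eps)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(labels)


def passes_regression_check(labels, current_probs, candidate_probs, max_worsening=0.01):
    """True if the candidate is at most max_worsening worse than the current model on the reference set."""
    return log_loss(labels, candidate_probs) <= log_loss(labels, current_probs) + max_worsening


reference_labels = [1, 0, 1, 0]
current_model = [0.8, 0.2, 0.7, 0.3]
print(passes_regression_check(reference_labels, current_model, [0.9, 0.1, 0.8, 0.2]))  # True
print(passes_regression_check(reference_labels, current_model, [0.5, 0.5, 0.5, 0.5]))  # False
```

If scikit-learn is already in the stack, `sklearn.metrics.log_loss` computes the same quantity.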

Putting Concept Drift Management Into Practice

I don’t need a massive transformation to get value; a structured rollout over a few months is often enough to move from “I assume the model is fine” to “I can see how it ages.”

- Instrument and baseline: I start by confirming which models actually influence core workflows (lead scoring, forecasting, routing, risk decisions). Then I ensure predictions are logged with identifiers that connect to later outcomes. Finally, I build a baseline view of model metrics and business KPIs over time, using a stable historical window.

- Add drift signals and thresholds: Next, I add checks that watch feature movement, score movement, and, where labels are timely, performance changes. I set initial thresholds and refine them based on how noisy they are in my environment, because an alert that fires constantly is functionally the same as no alert at all.

- Connect monitoring to action: Last, I define what happens when signals fire: when I investigate data quality, when I retrain, and when I hold off to avoid reacting to a blip. I also measure the lag from a real business change (like a pricing update) to the moment I see its impact in monitoring, because that lag is part of my operational risk.
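Two of the ideas in this rollout translate directly into small helpers: requiring a breach to persist for several checks before alerting (so a noisy metric doesn’t train everyone to ignore it), and measuring the lag from a known business change to the first alert. Both are sketches; the three-check persistence rule and the weekly PSI series in the usage lines are assumptions:

```python
from datetime import date


def persistent_breaches(values, threshold, k=3):
    """Indices where a drift metric has exceeded threshold for k consecutive checks.

    Fires once per sustained run, so a metric that stays high does not re-alert every check.
    """
    alerts, run = [], 0
    for i, v in enumerate(values):
        run = run + 1 if v > threshold else 0
        if run == k:
            alerts.append(i)
    return alerts


def detection_lag_days(change_date, alert_dates):
    """Days from a known business change to the first alert on or after it; None if none fired."""
    later = [d for d in alert_dates if d >= change_date]
    return (min(later) - change_date).days if later else None


weekly_psi = [0.30, 0.10, 0.30, 0.30, 0.30, 0.30, 0.10, 0.30]
print(persistent_breaches(weekly_psi, threshold=0.25))  # [4]
print(detection_lag_days(date(2024, 3, 1), [date(2024, 2, 20), date(2024, 3, 19)]))  # 18
```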

When lead scores or forecasts feel “off,” when I’ve changed pricing/ICP/stages without revisiting models, or when manual overrides keep creeping up, I treat it as a reason to look for drift, not as a reason to debate whether “the team is following the process.” Concept drift is often the invisible variable turning good systems into expensive ones, and I’m better off making it visible than living with surprises.
